Better Models Will Absorb Half of What You Build Around AI. The Rest Will Matter More Than Ever.

The major harness engineering posts of 2026 converge on the same message: simplify. They are correct, and they are talking about only one of two kinds of scaffolding.

The Consensus (and Why It's Right)

Anthropic stripped sprint decomposition from their latest harness engineering guide. When Opus 4.6 got good enough to plan its own work, the task-splitting scaffolding became dead weight. OpenAI shipped a million lines of code with zero human-authored lines, powered by harness design rather than model improvements. Phil Schmid's agent harness guide advises building systems where you can "rip out the 'smart' logic [you] wrote yesterday" as models absorb capabilities that once required hand-coded pipelines.

For code, the advice to simplify lands. LangChain's deep agents work showed that scaffold changes alone lifted their coding agent 13.7 points on Terminal Bench 2.0, and Epoch AI's SWE-bench analysis showed similar 11-15 point swings from scaffold changes without touching the underlying model. Scaffolding decisions move the needle as much as model selection. The question is which direction to move them.

But consensus tends to generalize from whatever domain produced it, and this consensus was produced almost entirely by people building coding agents. The people writing the harness engineering posts, sharing the benchmarks, and presenting at conferences overwhelmingly work on outputs that have test suites. The advice is real, but it's worth asking whether it transfers.

What's Your Scaffolding Coupled To?

The discourse has organized itself around a single axis: how much scaffolding? More or less, thick or thin, complex or minimal, as if scaffold quantity were a dial you turn.

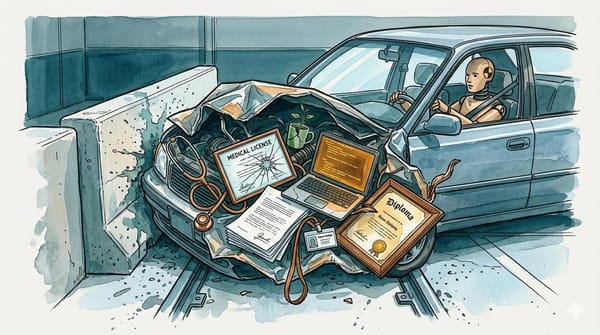

That's like asking "how much medication?" without knowing the diagnosis.

The dial metaphor assumes all scaffolding is the same kind of thing, varying only in amount, and it isn't. Some scaffolding compensates for what the model can't do yet. Some scaffolding solves problems the model architecturally cannot solve, no matter how capable it becomes. These two categories have different shelf lives, and conflating them produces advice that's right about one and wrong about the other.

Fred Brooks identified the same split in 1986: "No Silver Bullet" distinguished accidental complexity (artifacts of current tools) from essential complexity (inherent to the problem domain). Model-coupled scaffolding maps to accidental complexity. Problem-coupled scaffolding maps to essential complexity. The application to AI scaffolding is new; the underlying distinction is not.

The better question: what is your scaffolding coupled to?

These categories aren't always clean. A capability can be problem-coupled at the category level but model-coupled at the implementation level. Fact-checking is a permanent need; verifying claims against source material won't vanish with better models. But the specific implementation of how you fact-check changes with every model generation: the prompt engineering, the number of verification passes, the threshold logic. The distinction is about what the scaffolding is coupled to, not about filing every component into one box. What matters is recognizing the difference so you invest appropriately in each layer.

Model-Coupled Scaffolding

Model-coupled scaffolding compensates for a specific capability gap in the current model generation. It works until the model absorbs the capability, then it becomes drag.

Anthropic's sprint decomposition is the cleanest example. Earlier models couldn't maintain coherent plans across long tasks, so the harness broke work into sprints with explicit handoffs. Opus 4.6 plans its own work, and the scaffolding went from necessary to counterproductive in a single model generation.

Vector search is heading the same direction. PageIndex achieved 98.7% accuracy on FinanceBench through tree-search reasoning over document structure, compared to vector RAG baselines in the 30-50% range. Instead of swapping one embedding pipeline for another, the model skipped embedding-based retrieval altogether and reasoned its way through the documents. It navigated cross-references and drilled into relevant sections the way an expert would, rather than hoping a cosine similarity score surfaced the right chunk. Claude Code and Cursor made the same move for codebase navigation, abandoning vector-based code search in favor of letting the model actively explore files. The indexing pipelines, the embedding models, the retrieval tuning: all of it was model-coupled scaffolding that became unnecessary once the model could read code directly.

Chain-of-thought prompting might be the most widespread example. Telling a model to "think step by step" was a real capability unlock in 2023. Models with native reasoning don't need the instruction. It's still in millions of system prompts, accomplishing nothing.

Call this the lag artifact. Model-coupled scaffolding accumulates fast and gets removed slowly. When a model fails at something, teams add scaffolding immediately. When the next model generation absorbs that capability, nobody audits what's now unnecessary. The scaffolding persists through inertia, and over time, the accumulated lag artifacts become a real performance tax. Anthropic's harness post is telling people to pay down that debt.
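One way to pay down that debt is a periodic ablation audit: re-run an evaluation with each scaffold component switched off and flag the ones whose removal doesn't hurt. The sketch below is a minimal illustration, not anything taken from the harnesses discussed here. The `evaluate` callable, the component names, and the toy scores are all hypothetical stand-ins for whatever eval suite a team already runs.

```python
from typing import Callable, FrozenSet, List


def find_lag_artifacts(
    components: List[str],
    evaluate: Callable[[FrozenSet[str]], float],
    tolerance: float = 0.0,
) -> List[str]:
    """Return components whose removal does not lower the score.

    `evaluate` is a hypothetical stand-in for an existing eval suite;
    it takes the set of enabled components and returns a score
    (higher is better).
    """
    full = frozenset(components)
    baseline = evaluate(full)
    candidates = []
    for name in components:
        ablated_score = evaluate(full - {name})
        # A component is a removal candidate if the system scores at
        # least as well (within tolerance) without it.
        if ablated_score >= baseline - tolerance:
            candidates.append(name)
    return candidates


def toy_evaluate(enabled: FrozenSet[str]) -> float:
    """Toy scores (illustrative only): the fact checker helps, the
    step-by-step prompt does nothing, sprint decomposition hurts."""
    score = 60
    if "fact_checker" in enabled:
        score += 25
    if "sprint_decomposition" in enabled:
        score -= 5
    return float(score)


audit = find_lag_artifacts(
    ["fact_checker", "step_by_step_prompt", "sprint_decomposition"],
    toy_evaluate,
)
print(audit)
```

Single-component ablation misses interactions between components, and it only works where a trustworthy evaluation exists, so the result is a list of candidates to review rather than a list of things to delete.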

Problem-Coupled Scaffolding

Problem-coupled scaffolding doesn't compensate for model weakness. It addresses something structurally inherent to the problem, independent of how capable the model becomes. And unlike the model-coupled variety, it tends to get more valuable as models improve, not less.

External memory is the simplest case. Models are stateless by architectural design. When our system needs to know that we covered a story two weeks ago, or that a prediction we made in January was proven wrong, the model can't retrieve that on its own. Some vendors have added persistent memory features (Gemini's long-term memory, ChatGPT's memory), but these remain limited in scope and externally managed rather than architecturally native. You can double the context window, triple it, give the model a million tokens. It still forgets between sessions unless something outside the model stores and retrieves state. External memory files, databases, grep-backed search over prior output: that's infrastructure solving a problem that lives in the architecture itself, not in the model's capability level.
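As a rough illustration of how little machinery this requires, here is a minimal sketch of a file-backed memory with grep-style search. The `ExternalMemory` class, the JSONL layout, and the example records are assumptions made for illustration, not a description of our production system.

```python
import json
import tempfile
from pathlib import Path
from typing import Dict, List


class ExternalMemory:
    """Append-only JSONL store that lives outside the model (hypothetical)."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def remember(self, date: str, kind: str, text: str) -> None:
        """Append one record to the file."""
        record = {"date": date, "kind": kind, "text": text}
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(record) + "\n")

    def search(self, term: str) -> List[Dict[str, str]]:
        """Grep-style, case-insensitive substring search over prior records."""
        if not self.path.exists():
            return []
        hits = []
        with self.path.open(encoding="utf-8") as f:
            for line in f:
                record = json.loads(line)
                if term.lower() in record["text"].lower():
                    hits.append(record)
        return hits


# "Session 1": store state. The dates and texts are made-up examples.
memory_file = Path(tempfile.mkdtemp()) / "memory.jsonl"
first_session = ExternalMemory(memory_file)
first_session.remember("2026-01-10", "prediction", "Example prediction about vector search")
first_session.remember("2026-02-01", "story", "Example story about harness design")

# "Session 2": a fresh object with no in-process state still finds it.
second_session = ExternalMemory(memory_file)
hits = second_session.search("vector search")
print(hits)
```

The state survives because it sits in a file, not because of anything the model does. That is the sense in which the scaffolding is coupled to the problem rather than to the model's capability.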

Fact-checking is less obvious but structurally similar. SDPO from ETH Zurich demonstrated that rich feedback signals during self-improvement outperform simple binary correctness labels. The system doesn't just learn whether it was right; it learns why it was wrong, which claims were unsupported, which sources contradicted each other. A fact-checking pipeline is what supplies that richer signal, which makes it problem-coupled scaffolding that gets more valuable as models improve.

DeepMind's Aletheia math agent found the same dynamic from the opposite direction. Aletheia's key finding was that decoupling a model's final output from its intermediate reasoning, then running a separate verification pass, catches high-confidence errors that survive without independent verification. The generator-verifier separation was necessary because the generation process itself couldn't reliably flag its own mistakes. Our inference from the architecture is that this dynamic intensifies with capability: a more capable generator produces more convincing outputs, making independent verification more necessary rather than less. But that's our extrapolation from the design, not the paper's direct claim. The verifier is problem-coupled scaffolding regardless.
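The separation described above can be sketched in a few lines. This is a minimal illustration of the general generator-verifier pattern, not Aletheia's implementation: the `Draft` type, the `check_claim` callable, and the example claims are hypothetical. The point it shows is that the verifier receives only the output claims, never the generator's reasoning or its self-reported confidence.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Draft:
    claims: List[str]   # the final output, split into checkable claims
    reasoning: str      # intermediate reasoning; withheld from the verifier
    confidence: float   # generator's self-reported confidence; also withheld


def verify_independently(
    draft: Draft,
    check_claim: Callable[[str], bool],
) -> List[str]:
    """Return the claims that fail verification.

    Only `draft.claims` is passed to `check_claim`, so a persuasive
    reasoning trace or a high confidence score cannot influence the result.
    """
    return [claim for claim in draft.claims if not check_claim(claim)]


# Toy verifier: a claim passes only if it appears in the source material.
supported_by_sources = {"Revenue rose 12%"}

draft = Draft(
    claims=["Revenue rose 12%", "Headcount doubled"],
    reasoning="(long, convincing chain of reasoning)",
    confidence=0.99,
)
failed = verify_independently(draft, lambda c: c in supported_by_sources)
print(failed)
```

In practice `check_claim` would be a second model call or a lookup against source documents. The design choice that matters is the narrow interface between the two stages.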

Our own internal harness evaluation shows the fact-checking dimension directly: harnessed models hit 92% F1 on fact-checking versus 54% for bare models. The harness lifted factual reliability by 38 points, to roughly 1.7 times the bare-model score. Stripping that scaffolding would cut our accuracy by about 40% in the one dimension our readers actually depend on.

Our own council review system runs 16 evaluation seats, each added because a specific failure slipped past everything else. The freshness seat exists because we published a rehash. The pacing seat exists because structural problems went undetected. These aren't assumptions about model weakness. They're scar tissue from real failures in a domain where no test suite exists to catch them automatically.

Publish gates and editorial judgment fall into this category too. There's no clean reward signal for "should we publish this?" No loss function captures audience trust, brand consistency, or the difference between a hot take and a harmful one. These are judgment calls that can't be compressed into a training objective, which means the scaffolding that makes them won't be absorbed by training.

Prompt screening and adversarial defense are structural for the same reason. Adversarial inputs exist independently of model intelligence. A frontier model that's better at following instructions is also better at following adversarial instructions. The attack surface grows with capability.

Regulatory-Coupled Scaffolding

A third coupling type operates independently of both model capability and problem structure. In healthcare, HIPAA audit trails exist because federal law mandates them, not because the model can't track its own decisions. In finance, SEC explainability requirements force systems to generate human-readable reasoning chains in specific formats, even when the model's native chain-of-thought is arguably better. In education, FERPA data handling governs how student information flows through the system regardless of what the problem or model demands.

Regulatory-coupled scaffolding can't be stripped when models improve because it isn't coupled to the model. It can't be reframed as a problem property because it's imposed from outside the technical system entirely. And it carries its own version of the lag artifact: in regulated industries, removing scaffolding triggers validation cycles, regulatory notifications, and documentation requirements that can take months even when the technical case for removal is clear. The cost of identifying lag artifacts is low. The cost of removing them, when a regulator mandates the process, can be enormous.

The Coupling Landscape

Beyond regulatory mandates, several other coupling types emerge in high-stakes domains that extend the framework past the original binary.

Liability-coupled scaffolding exists because someone needs to be accountable when things go wrong, regardless of accuracy. A legal AI that's 99.99% accurate at detecting privileged documents still needs privilege screening infrastructure, because the 0.01% miss can end careers. The scaffolding is driven by the consequences of failure, not the probability of failure.

Time-coupled scaffolding persists in finance because latency is the problem itself. Transaction monitoring thresholds, sequencing logic, real-time compliance checks all manage timing constraints that don't relax when models get smarter. A more capable model doesn't make the OFAC sanctions list update any slower or the regulatory reporting window any wider.

Equity-coupled scaffolding enforces value commitments that capable systems might otherwise optimize away. In education, monitoring outcome gaps by race, gender, socioeconomic status, and disability status is permanent infrastructure. It doesn't compensate for model weakness or solve a structural information problem. It enforces a fairness obligation that exists regardless of how accurate the model becomes, because the stakes involve vulnerable populations.

These categories don't replace the model-coupled and problem-coupled distinction. They show that "what is it coupled to?" has more than two answers, and getting the answer right determines whether your scaffolding decisions are investment or waste.
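To make the coupling question concrete, here is a minimal sketch of a scaffold inventory that records the coupling at both the category level and the implementation level, following the distinction drawn earlier. The `Coupling` enum, the `Scaffold` record, and the wording of the review actions are illustrative assumptions, not a prescribed taxonomy.

```python
from dataclasses import dataclass
from enum import Enum


class Coupling(Enum):
    MODEL = "model"
    PROBLEM = "problem"
    REGULATORY = "regulatory"
    LIABILITY = "liability"
    TIME = "time"
    EQUITY = "equity"


@dataclass(frozen=True)
class Scaffold:
    name: str
    category_coupling: Coupling        # what the underlying need is coupled to
    implementation_coupling: Coupling  # what the current implementation is coupled to


def review_action(scaffold: Scaffold) -> str:
    """Suggest what to do with a scaffold when a new model ships."""
    if scaffold.category_coupling is Coupling.MODEL:
        return "audit for removal"
    if scaffold.implementation_coupling is Coupling.MODEL:
        return "keep the capability, re-tune the implementation"
    return "keep"


inventory = [
    Scaffold("sprint_decomposition", Coupling.MODEL, Coupling.MODEL),
    Scaffold("fact_checker", Coupling.PROBLEM, Coupling.MODEL),
    Scaffold("hipaa_audit_trail", Coupling.REGULATORY, Coupling.REGULATORY),
]
actions = {s.name: review_action(s) for s in inventory}
for name, action in actions.items():
    print(f"{name}: {action}")
```

The value of an inventory like this is less in the code than in forcing every component to carry an explicit answer to "what is it coupled to?" before anyone adds or strips it.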

The Verifiability Wrinkle

The "simplify" consensus works for code because code has a test suite. You write a function, you run the tests, you know if it works. The feedback loop is tight and unambiguous. When the model can pass the tests without elaborate scaffolding, the scaffolding was model-coupled, and removing it is correct.

For domains without a test suite, the situation inverts. Editorial judgment, creative work, strategic decisions, medical reasoning: these don't have a pass/fail signal. The scaffolding is the verification mechanism. Stripping it doesn't simplify the system. It strips the only check you have.

"No test suite" isn't quite one thing, though. Medicine and education do have feedback, it just arrives late: treatment outcomes take weeks, learning outcomes take months. Editorial judgment is different. There's no clean feedback signal at any timescale, delayed or otherwise. The distinction matters for design. When feedback is delayed, scaffolding bridges the gap between action and outcome. When feedback is absent, scaffolding is the only evaluation that exists. Both create coupling that persists regardless of model capability, but they call for different engineering.

This follows a pattern older than AI. When programming languages improved from assembly to C to Python, the infrastructure around code didn't shrink. Unit testing frameworks, CI/CD pipelines, static analyzers, code review processes, dependency audits: most of them emerged after the underlying tools got better. Better tools raise the stakes and widen the deployment surface, which creates demand for more process, not less. AI scaffolding follows the same law. As models get better at generating plausible text, the failure mode shifts from "obviously wrong" to "subtly wrong," and subtle errors need more sophisticated detection.

Anthropic's own harness post acknowledges this indirectly. Their design criteria for creative work include quality, originality, craft, and functionality, and their evaluator remains essential for these assessments. The evaluator is judgment scaffolding. It persists precisely because there's no automated way to replace it.

This creates an uncomfortable asymmetry. The domains producing the most confident "simplify" advice are the domains where simplification is easiest to validate, because they have tests. The domains where simplification might be most dangerous are the domains least likely to produce counter-evidence, because they lack tests. The consensus is built on selection bias in the evidence base.

The "Safety Under Scaffolding" paper measured this directly. Simply switching from multiple-choice to open-ended format shifted safety scores by 5-20 percentage points, and model-scaffold interactions spanned up to 35 percentage points in opposing directions. The scaffolding is load-bearing, not cosmetic. And the more capable the model underneath, the higher the stakes when the scaffolding fails.

HyperAgents: The Agent Figures It Out

Meta's HyperAgents paper points toward where this distinction eventually goes. HyperAgents are agents that modify their own scaffolding at runtime. Not just their task-solving code, but the meta-level mechanisms that decide how to improve.

On coding benchmarks, HyperAgents achieved 3x improvement; on robotics tasks, 6x. The interesting finding isn't the performance numbers, though. It's what the agents built for themselves. Without being prompted, HyperAgents constructed their own memory systems and performance trackers. They added scaffolding they determined they needed and removed scaffolding that wasn't helping. Model-coupled scaffolding got stripped when the agent's own capability made it redundant. Problem-coupled scaffolding got built and maintained because the agent discovered it needed persistent state, needed verification, needed structured evaluation.

Regulated domains complicate this picture. An agent that rebuilds its own compliance checks at runtime is an audit finding, not a feature. Model risk management frameworks require documented validation before changing any model component, and self-modifying scaffolding doesn't fit neatly into governance structures designed for human-approved change management. The direction is promising, but the regulatory environment will shape how far self-modification can go and which domains it reaches first.

What this points toward is scaffolding that adapts, where the coupling question gets answered dynamically through trial and error against real performance signals. We're not there yet. HyperAgents operated in environments with clean performance metrics, and the extension to domains with ambiguous feedback remains open.

This connects to what we've been mapping in our Levels of Emergent Intelligence framework. The progression from tool-use to self-modification isn't just a capability ladder. Each level changes the relationship between the agent and its scaffolding, from consuming infrastructure to building it.

What to Couple To?

Before building any new scaffold, before stripping any existing one, ask: what is it coupled to?

We run a 16-seat council review, a prompt screener, external memory, and a fact-checking pipeline around a frontier model. We also stripped our style rules after our own evaluation showed they'd become drag. Both decisions were correct, because they answered different versions of the coupling question. One set of scaffolding was coupled to model limitations that no longer exist. The other is coupled to problems that don't go away when the model gets smarter.