Everyone Heard "AGI." Nobody Heard "0%."

Jensen Huang, prediction markets, and the most expensive word in AI.

On March 23, five prediction markets moved in lockstep. "AI smarter than smartest human by 2026" jumped from 15% to 32%. "AGI by 2027" did the same. "Multi-Domain AGI by October 2027," same again. "OpenAI announces AGI before 2027" and "Anthropic acquired before 2027" followed right behind. Five different markets, five different resolution criteria, one roughly uniform surge of about 17 percentage points.

The catalyst appears to have been a single sentence. Jensen Huang, sitting across from Lex Fridman for episode 494 of his podcast, was asked about the timeline for AI that could match human-level intelligence. Lex framed it specifically: "an AI system that's able to essentially do your job. So run, no start, grow and run a successful technology company that's worth more than a billion dollars."

Huang's answer: "I think it's now. I think we've achieved AGI."

Within hours, Bitget News reported that Polymarket's "OpenAI announces AGI before 2027" contract had climbed to 30%. The other four markets followed. The timing is suggestive: the markets were relatively flat before the podcast aired, then moved sharply after Huang's clip began circulating on social media. Billions of dollars in notional prediction market value shifted on the strength of five words from the CEO of the world's most valuable AI infrastructure company.

Except those five words weren't the whole answer.

What He Actually Said

The full exchange is more interesting than the headline version. Huang didn't declare that AI had achieved general intelligence in any classical sense. He described a narrow scenario: an AI agent that could plausibly create a viral web app, briefly touch a billion-dollar valuation like the flash companies of the early internet era, and ride the wave for a few months. He imagined a digital influencer, a Tamagotchi-like social app, something that catches fire fast and burns out faster.

Then came the caveats that didn't make it into the prediction market feeds.

"Now the odds of 100,000 of those agents building Nvidia," Huang said, "0%."

He went further. "A lot of people use it for a couple of months and it kind of dies away."

This is the CEO of the company that sells the picks and shovels to every major AI lab on the planet, and his definition of "AGI" is an agent that might get lucky with a viral app before users lose interest. His definition of "not AGI" is building something complex and enduring. The markets priced in the headline. The interview said something much more modest.

The timing wasn't accidental. Huang's appearance landed during a week already saturated with AI acceleration signals. GTC had just wrapped, with Nvidia calling the AI agent ecosystem a "$35 trillion market." Elon Musk had announced Terafab, estimated at $20-25 billion. Samsung committed $73 billion to AI chip investment for 2026. OpenAI's valuation hit $840 billion after closing a $110 billion raise. The direction was obvious: AI is accelerating and the money is real.

Into that environment, Google DeepMind published a cognitive taxonomy identifying ten key cognitive abilities for general intelligence and launched a Kaggle hackathon to design AGI evaluations. The taxonomy made AGI feel measurable, and therefore imminent. The White House proposed a national AI legislative framework to centralize regulation and override state-level restrictions, removing perceived regulatory overhang. And on March 24, a federal court in San Francisco was set to hear Anthropic's challenge to the Pentagon's "supply chain risk" designation, a case that materialized after Anthropic refused a defense contract and the Pentagon responded by labeling the company a national security concern.

Every one of these stories nudged the same direction, and Huang's declaration was the match dropped on kindling already stacked high.

The Structural Problem

Prediction markets are good at certain things. Will a specific candidate win an election? Will a bill pass before a deadline? These questions have concrete resolution criteria: someone counts the votes, reads the legislative record, checks the final score. The answer is verifiable and the definition is not in dispute.

AGI is the opposite of that.

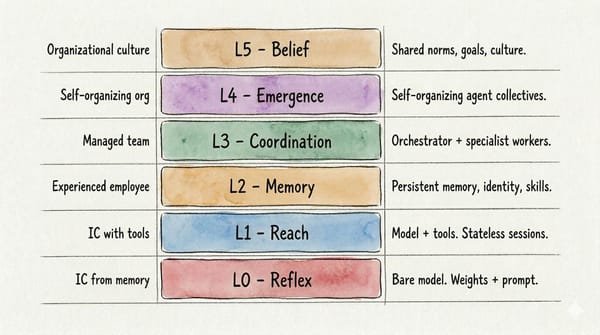

There is no shared definition. Huang's version is an agent that gets lucky with a viral app. The DeepMind cognitive taxonomy identifies ten cognitive abilities and multiple levels of competence. OpenAI's charter references "highly autonomous systems that outperform humans at most economically valuable work." Anthropic talks about AI that can "autonomously perform most knowledge work." Academic researchers have published dozens of competing frameworks ranging from the Turing Test to Chollet's ARC challenge to Morris et al.'s leveled AGI framework.

When a prediction market asks "Will AGI be achieved by 2027?" it is asking traders to bet on a word that means something different to every person placing a wager. The resolution criteria on these contracts are vague by necessity, because precision would require picking a definition, and picking a definition would immediately alienate everyone who uses a different one.

This creates a specific failure mode. A single influential person can move the market not by providing evidence that AGI has been achieved, but by using the word "AGI" in a confident sentence. The market doesn't distinguish between "the thing actually happened" and "someone credible said the word." It prices the signal, not the substance.

The roughly uniform shift across five different contracts is the tell. These markets are not formally linked. They don't share liquidity pools. But they're coupled in practice, because they all turn on subjective readings of AI progress. When the CEO who sees every major lab's compute orders and capability roadmaps says the word "AGI," the prior updates for all of them simultaneously. The traders aren't evaluating five independent questions. They're evaluating one vibe.

And the uniformity itself is suspicious. If human traders were independently evaluating Huang's statement against each market's specific resolution criteria, you'd expect different magnitudes of response. "OpenAI announces AGI" should respond differently than "Anthropic acquired." The fact that they moved in lockstep suggests either algorithmic traders reacting to keyword extraction without parsing the caveats, or a common trader base that treats "AI prediction market" as a single asset class rather than five distinct questions.
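
One rough way to put a number on "lockstep" is to compare how far each contract moved and look at the spread of those moves. The sketch below is illustrative only: the function name, the 2-point tolerance, and the third market's prices are assumptions made for the example, not real market data. Only the 15% to 32% moves come from the figures quoted earlier.

```python
from statistics import pstdev

def lockstep_report(prices, tolerance_pts=2.0):
    """prices maps a market name to (before, after) probabilities in percent.

    Returns each market's move in percentage points, plus the range and
    population standard deviation of those moves. The tolerance is an
    arbitrary, assumed threshold for calling the moves "lockstep".
    """
    moves = {name: after - before for name, (before, after) in prices.items()}
    values = list(moves.values())
    spread = max(values) - min(values)
    return {
        "moves_pts": moves,
        "range_pts": spread,
        "stdev_pts": pstdev(values),
        "lockstep": spread <= tolerance_pts,
    }

# The first two pairs use the 15% -> 32% move described in the article.
# The third pair is a made-up placeholder, NOT a real market quote.
example = {
    "ai_smarter_than_smartest_human_2026": (15.0, 32.0),
    "agi_by_2027": (15.0, 32.0),
    "placeholder_market": (14.0, 30.0),
}

report = lockstep_report(example)
print(report["moves_pts"])
print(report["range_pts"], report["lockstep"])
```

If traders were weighing each contract's own resolution criteria, you would expect the range of moves to be wide. A range near zero across unrelated questions is what the keyword-reaction explanation predicts.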

140 Predictions and a Gate That Markets Don't Have

Future Shock maintains a prediction tracker with 140 active predictions spanning AI capability benchmarks, policy shifts, and market dynamics. Building that tracker exposed exactly the problem prediction markets are running into at scale.

Early in the process, predictions kept failing a basic test: could they actually be resolved? "AI will transform healthcare" sounds meaningful but has no finish line. "Radiologists will be replaced by AI" sounds specific but depends on what "replaced" means. Partial automation? Full elimination of the role? A 50% headcount reduction? Without a precise, falsifiable resolution criterion, a prediction is just a dressed-up opinion.

The solution was a preflight gate. Every new prediction runs through a validation process that checks whether the claim is genuinely predictive, whether it's already happening, and whether it can be cleanly resolved as true or false at a specific point in time. Predictions that fail the falsifiability test don't enter the database. The gate exists because without it, the tracker would fill up with vague directional bets masquerading as forecasts. (It's not a solved problem. Our tracker is small enough that we can manually vet every entry. Prediction markets handling thousands of contracts don't have that luxury.)
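
The three checks described above can be sketched in a few lines. This is not the tracker's actual implementation, which relies on manual vetting. It is a minimal illustration, and every name in it (`Prediction`, `preflight`, the field names, the sample dates) is hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

@dataclass
class Prediction:
    claim: str
    resolution_criterion: Optional[str]  # the observable fact that settles it
    resolve_by: Optional[date]           # the date on which it is checked
    already_true: bool                   # is the outcome already happening?

def preflight(p: Prediction, today: date) -> List[str]:
    """Return the reasons a prediction fails the gate; empty list means it passes."""
    problems = []
    if not p.resolution_criterion:
        problems.append("no falsifiable resolution criterion")
    if p.resolve_by is None:
        problems.append("no resolution date")
    elif p.resolve_by <= today:
        problems.append("resolution date is not in the future")
    if p.already_true:
        problems.append("already happening, so not predictive")
    return problems

today = date(2026, 1, 1)  # arbitrary reference date for the example

# The vague claim quoted in the article: no finish line, no date.
vague = Prediction("AI will transform healthcare", None, None, False)

# A hypothetical, well-formed entry (not a real tracker prediction).
specific = Prediction(
    "Hypothetical: benchmark X top score exceeds 90%",
    "published top score on benchmark X is above 90%",
    date(2027, 12, 31),
    False,
)

print(preflight(vague, today))
print(preflight(specific, today))
```

The gate returns reasons rather than a bare pass or fail, so a rejected prediction can be rewritten into something resolvable instead of simply discarded.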

Prediction markets don't have that gate. Anyone can create a contract asking "Will AGI happen by 2027?" and traders will pile in, because the question feels meaningful even when it isn't operationally defined. The market generates a number that looks precise, but that precision is an illusion layered on definitional ambiguity.

The PDM (precondition density model) data in the tracker is worth comparing to the market action. Capability metrics show velocity plateauing even as market optimism surges. The benchmarks and the betting odds are diverging, and one of them is wrong.

Probability or Vibes?

If a single CEO redefining a term on a podcast can swing roughly 17 points across five correlated markets, what exactly are prediction markets measuring?

Maybe markets are efficiently incorporating new information. The most informed hardware supplier in the AI ecosystem offered a probability update, and traders responded rationally. That's the textbook answer.

But there's another possibility: markets are pricing narrative momentum, not probability. Huang said a magic word during a week when every other signal pointed the same direction, and the number went up because the number was already going up. The "information" being incorporated isn't Huang's technical assessment. It's the social proof of a high-status figure confirming what traders already wanted to believe.

Push further and prediction markets on ambiguously defined concepts start looking like sentiment trackers wearing the costume of quantitative rigor. They measure how excited the trading community feels about a topic, not whether a specific, verifiable thing will happen. When nobody agrees on the definition of what's being traded, the price reflects collective mood, not collective intelligence.

Jensen Huang probably knows more about the state of AI capability than almost anyone alive. He sees the training runs and the inference demand curves. He knows the roadmaps. When he says "I think we've achieved AGI," that means something. But what it means depends entirely on what "AGI" means, and his definition (an agent that might build a flash-in-the-pan viral app before users get bored) is a long way from the science fiction version that lives in most traders' heads.

The markets heard "AGI." They didn't hear "for a couple of months and it kind of dies away." That gap between what was said and what was priced is the real story here. Not whether AGI is here, but whether prediction markets can tell us when it arrives, given that nobody agrees on what "it" is.

Five markets moved on one word by approximately seventeen points, and nobody can say for certain what any of them are actually measuring.