Future Shock Squared

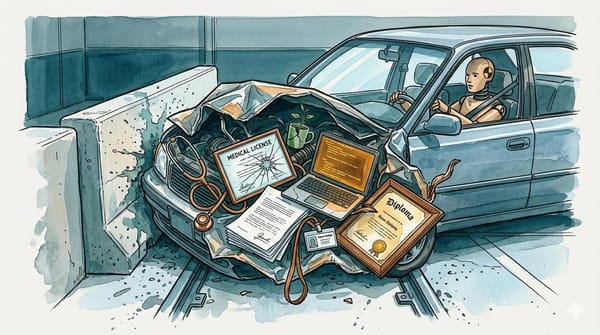

Toffler warned us about the disorientation of too much change too fast. He didn't warn us it would compound.

In 1970, Alvin Toffler wrote a book about the feeling of drowning in change. The vertigo when the world you understood last month no longer applies. He called it future shock: "the dizzying disorientation brought on by the premature arrival of the future." The book sold over six million copies and got translated into dozens of languages, which tells you he wasn't describing something obscure. He'd found the word for something everyone already felt.

Toffler's version of the problem was, by today's standards, almost quaint. Factories were automating. People were moving cities more often than their parents ever did. Products had shorter lifespans; you'd buy a toaster and it would be obsolete before it broke. He identified three forces driving the disorientation: transience (nothing lasts), novelty (everything's unfamiliar), and diversity (too many choices). The combination, he argued, was producing a new kind of psychological distress, a persistent cognitive overload from living in a world that refused to hold still.

He was right about all of it. He was also describing the kiddie pool.

The Exponent

Toffler's future shock was linear. The rate of change was faster than before, but it was still a rate you could theoretically adjust to. Give people time, education, supportive institutions, and they'd catch up. The future arrived early, but it arrived once, and you recalibrated. That's why I didn't call this piece Future Shock 2.0. Version 2.0 implies the same trajectory, just updated. What's happening now isn't the same train moving a little faster. It's a train that keeps accelerating, and the acceleration itself is speeding up. Future Shock squared.

Every few weeks through late 2025 and into 2026, something genuinely transformative dropped in the AI space. Actual, load-bearing shifts in what was possible. Autonomous agents orchestrating multi-step workflows without human intervention. Reasoning models solving problems their creators couldn't verify. Open-source systems closing the gap with frontier labs so fast that the concept of a competitive moat became a punchline. Any single one of these would have been the story of the year in 2023. In 2026, they're Tuesday.

Each breakthrough doesn't just add to your mental model of the world. It replaces it. You can't understand what autonomous agents mean until you've internalized what large language models can do. You can't grapple with multi-agent orchestration until agents-doing-tasks is already your baseline. The conceptual overhaul doesn't get to be a conceptual overhaul. It has to immediately become status quo so the next one has somewhere to land.

The prerequisite chains are the part that makes this exponential rather than merely fast. Why does Anthropic's agent protocol matter? To answer that, you need to understand AI agents. To get agents, you need tool use. To get tool use, you need to know how language models work. Each link depends on the last, and missing any one of them turns the next announcement into noise. Now multiply that by every major development landing every few weeks, each one building its own chain that intersects with all the others.

The original disorientation, compounded by its own acceleration.

The Exhaustion Nobody's Naming

Cal Newport argued in a late-2025 New Yorker piece that AI adoption remains narrow and incremental. He's got a data point: most people's daily routines look about the same as they did two years ago. What that metric misses is that the gap between the frontier and the median isn't evidence of stasis. It's the delay before the wave hits. He's measuring the caboose and concluding the train isn't moving.

What Newport's framing doesn't capture is the experience of someone genuinely trying to keep up.

You spend a weekend understanding a new capability. Actually sitting with it, working through the implications, reconfiguring your understanding of what's possible and what it means for the things you care about. By Monday, you've had a genuine realization that reshapes how you think about work or creativity or what it means to be good at something. The kind of realization that used to happen maybe once a year in tech, the kind you'd bring up at dinner parties for months.

By Wednesday, there's another one.

Another ground-floor rethink. The thing you just finished absorbing is already the old model, and the new one requires you to start over. The realization you had on Sunday is now just the setup, the prerequisite, the thing you need to already understand so you can process what happened this morning.

That feeling has a specific texture. It's not anxiety exactly, and it's not excitement. It's the cognitive equivalent of never finishing a sentence because someone keeps changing the subject before you get to the verb. You're always mid-thought. Always catching up to the version of reality that was current an hour ago. Daniel Kahneman mapped how the brain toggles between fast, intuitive processing and slow, deliberate reasoning. What's happening now is a sustained demand for the slow kind with no recovery time between rounds.

And because this experience is genuinely unpleasant, because the sustained cognitive load of continuous reframing drains you without a clear payoff or endpoint, a lot of people are going to opt out. Many of them will have correctly identified that keeping up at this pace is unsustainable and made the rational decision to stop trying. The brain has a self-preservation instinct, and one of its oldest tricks is to stop paying attention to things that cause distress without offering resolution.

In 2013, Douglas Rushkoff called this shift Present Shock, the collapse of narrative time into an always-on now. He was diagnosing the smartphone era. What's different in 2026 is that the present itself keeps getting overwritten. It's not just that everything happens now; what "now" means changes before you've finished processing the last version.

Newport's framing will look more right every quarter. The technology won't have slowed down; the median will have just stopped watching.

The Compound Interest of Not Keeping Up

Before talking about solutions, it's worth understanding why opting out compounds.

When someone stops processing AI developments for a month, they don't just miss a month of news. They miss the foundational understanding required to process the next month of news. The gap is multiplicative. Every month you're out, the on-ramp gets steeper. Three months away and you're not behind; you're in a different conversation entirely.

This is how future shock becomes a societal divide rather than a personal inconvenience. Not a clean split but a gradient, with people processing in real time at the frontier on one end and, on the other, people who haven't noticed there's anything to check out of. That second group is the largest, and it's the one Newport is correctly measuring: the median. It just hasn't shifted yet.

When it does shift, it'll shift fast. The people who've been building their understanding week by week will navigate it. The people who haven't will face the accumulated weight of every conceptual overhaul they skipped, all at once.

What Toffler Got Right (And What He Couldn't Have Imagined)

Toffler proposed solutions, and some of them were ahead of their time. Some weren't.

His biggest idea was "stability zones," deliberately maintained areas of your life that don't change, even as everything around them does. Keep the same home, the same relationships, the same rituals. The human nervous system needs anchors. You can handle novelty in your work if your kitchen table is in the same place it was yesterday. The unchanging parts give you the cognitive surplus to process the changing ones.

In 2026, the people I know who are thriving in the AI space almost universally have something in their lives that doesn't move. A sport they've played for decades. A weekly dinner with the same people. A morning routine that predates smartphones. Cognitive load management, disguised as habit.

Toffler also pushed for future-oriented education, technology assessment boards to slow deployment of innovations society wasn't ready for, and "enclaves of the past," communities where things moved slower by design. The assessment boards resemble what the EU is attempting with the AI Act, and the enclaves have their echo in the cottagecore movement that spread across TikTok and Instagram during the pandemic. But his assumption that institutions could govern the pace of change turned out to be the weakest part of the thesis. The pace didn't wait for the institutions.

There's one coping mechanism emerging in 2026, though, that Toffler never imagined, because the technology to enable it didn't exist.

Representative cognition.

When a domain gets too complex for one person to track, you delegate to someone who can. We've always done this. That's what advisors are for, what therapists are for, what the friend at the bar after a bad day is for. You outsource the processing of difficult information to a trusted party who can help you make sense of it.

What's new is the fidelity. A friend gives you vibes and empathy. A system that has processed fifty hours of research you haven't had time to touch, and that knows the difference between a hype cycle and a genuine shift, can extend your judgment into territory your attention can't reach. Whether that's an AI agent, a curated briefing, or a trusted analyst with better bandwidth than yours, the pattern is the same: you're outsourcing comprehension, not just information retrieval.

If these ground-floor rethinks are arriving faster than humans can absorb them, people will delegate cognitive labor. They already are, even if they don't frame it that way. The question is whether the systems they delegate to will be trustworthy enough to carry the weight. Whether "I haven't processed the last three breakthroughs, but someone I trust has, and they can brief me when I'm ready" becomes a viable way to stay in the game without burning out.

The harder question is verification. When you've delegated the processing to a system, how do you check its work? The delegate's value comes from handling complexity you can't handle yourself, which means you can't fully audit its conclusions either. At some point, trusting the delegate is itself the position you're taking. That's uncomfortable, and it should be.

I don't know the answer. I'm not sure anyone does yet. But I think Toffler would have found the question fascinating. He spent his career arguing that humanity's biggest challenge was the speed at which the future arrived. The idea that the technology causing the shock might also be the shock absorber has a symmetry he would have appreciated.

Toffler's book was a warning about speed. This is that warning, compounded.

I named this website after Toffler's concept because it felt like the right frame for what is happening in the world today. One way I process things that feel big and complicated is to write songs. Turning an idea into melody and lyrics forces me to be intentional and to internalize the emotion of these complex topics. My hope is that the emotion carries into the songs so that others can identify with the feelings evoked by the words and music. Last year I started releasing music through my project Solypsizm. It has been a fulfilling experience, and I encourage anyone struggling with the pace of change these days to try art as an outlet for creativity and for processing complex thoughts and ideas.

Today I released the eponymous song "Future Shock" to all major distribution platforms. Please have a listen.

Also available on Spotify and Apple Music.

You can learn more about Solypsizm, previous song releases, and how to follow my music project on social media at solypsizm.com.