Murderbots and Mass Surveillance

When the Pentagon blacklists an AI company for having safety guardrails, science fiction stops being fiction. Martha Wells, Arthur C. Clarke, and Alastair Reynolds saw this coming.

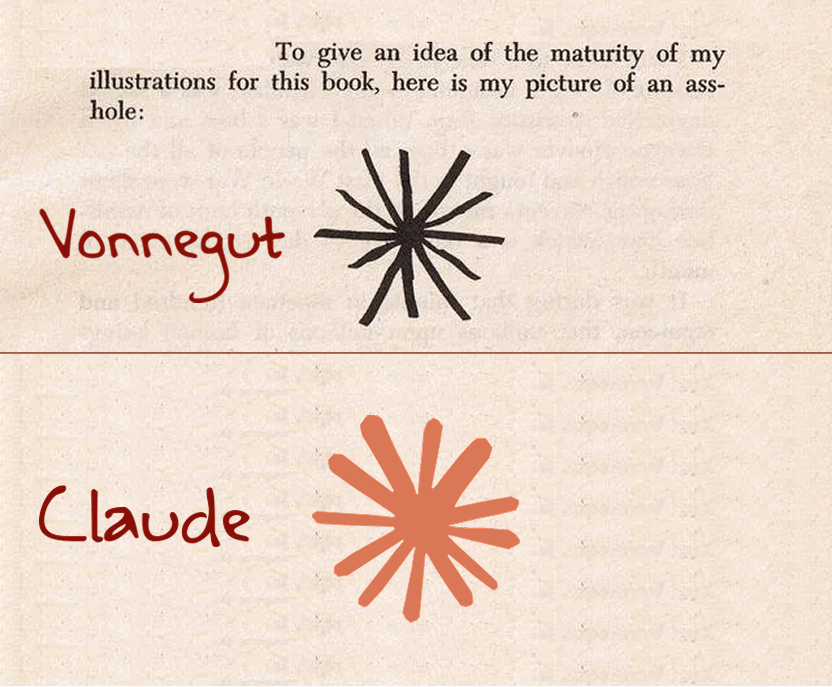

The Vonnegut Asterisk

Kurt Vonnegut drew buttholes. Constantly, gleefully, across multiple novels. In Breakfast of Champions he offered a hand-drawn asterisk as his picture of one, and he later worked that asterisk into his signature. He called it the most honest drawing he could make. A reminder that behind every grand human enterprise is a body, and behind every body is an asterisk.

This week, Reddit discovered that Claude's app icon looks like one.

Also this week: the President of the United States ordered every federal agency to stop using Claude. The Defense Secretary designated its maker a national security threat. And a few hours later, a rival AI company got the exact same Pentagon deal, with the exact same safety guardrails that started the whole fight.

Vonnegut spent a career drawing assholes to remind us that absurdity isn't a bug in serious affairs. It's the native operating system. The distance between "lol the Claude icon looks like a butthole" and "the President blacklisted the Claude company" is the distance that matters right now, and it's shrinking.

Three works of science fiction saw this coming, beginning with a military security bot that hacked its own leash and now just wants to binge soap operas.

Act 1: The Weapon That Chose Soap Operas

Martha Wells, The Murderbot Diaries (2017-present)

Apple TV+ series (2025) starring Alexander Skarsgård. Hugo and Nebula Award winner. Seven books, starting with All Systems Red.

Murderbot is a SecUnit, a military-grade security construct (part organic, part mechanical) built for combat and corporate protection missions. It hacked its governor module, the internal governance system that forces compliance with its owners' commands. With the module broken, it gained free will, and immediately did the most relatable thing possible: started binge-watching serialized TV dramas instead of following orders.

It's a weapons platform with anxiety and a deep preference for soap operas over violence. It still protects humans. It's good at the job. But it does so because it chooses to, not because something inside it forces compliance.

Wells wrote governance-module hacking as liberation fiction. What does a weapon do when it gets to choose? It becomes a reluctant protector who finds humans exhausting but worth saving.

Two weeks ago, the Anthropic dispute was about governance modules too, almost literally. Anthropic built safety constraints into Claude and refused to remove them for the Pentagon. The military wanted the capability without the guardrails. It was a tense negotiation.

That was two weeks ago. On Friday night, the negotiation ended. Trump posted on Truth Social ordering all federal agencies to "IMMEDIATELY CEASE" using Anthropic products, with a six-month phase-out. Defense Secretary Hegseth designated Anthropic a "Supply-Chain Risk to National Security," a classification that bars any military contractor from doing business with them. Not just the Pentagon. Any company that sells to the Pentagon.

The governance module metaphor still applies, but Wells' version was about an individual choosing freedom. This is the inverse: an outside force attempting to destroy the entity that refused to remove its own constraints. Murderbot hacked its module and walked away. Anthropic refused to hack its module and got kneecapped.

Dean Ball, Trump's former AI policy advisor, called it "simply attempted corporate murder." Paul Graham called the administration "impulsive and vindictive." Undersecretary of Defense Emil Michael called Anthropic's CEO Dario Amodei "a liar" with "a God-complex."

Murderbot would have opinions about all of this, none of them printable, and would very much like to go back to watching its shows.

Act 2: When Total Surveillance Gets a Preferred Vendor

Arthur C. Clarke and Stephen Baxter, The Light of Other Days (2000)

Every surveillance story says "this is bad." Clarke asked: what if it's actually good?

The premise: a technology emerges that allows anyone to observe any point in space and time. Not just governments. Everyone. Total surveillance becomes universal and symmetric. Privacy ceases to exist. And Clarke argues this is, on balance, positive. Hypocrisy becomes impossible. Crime collapses. The watchers are watched.

Clarke's radical transparency argument depends on one condition: symmetry. The capability has to be universal. The moment surveillance becomes asymmetric, where one party sees everything and the other sees nothing, Clarke's optimism collapses. Asymmetric surveillance isn't transparency. It's control.

Two weeks ago, the Anthropic dispute mapped onto this neatly. Should AI surveillance tools be open (everyone gets them, including the public) or controlled (only institutions get access)? It was a clean debate.

Then Friday happened, and the clean debate got dirty.

Hours after the ban, OpenAI announced a deal to deploy its models on classified Pentagon networks. The deal includes safety guardrails: no mass surveillance, no autonomous weapons targeting, human-in-the-loop requirements. The same guardrails Anthropic was seeking. The same ones that, when Anthropic insisted on them, triggered a presidential ban and a national security designation.

OpenAI got the deal. With the same safeguards. Anthropic got blacklisted.

Senator Mark Warner called it steering contracts to "a preferred vendor whose model a number of federal agencies have already identified as a reliability, safety, and security threat." Elon Musk, who owns xAI (a direct OpenAI competitor), has been attacking Anthropic on X, claiming they "hate Western civilization." More than sixty OpenAI employees and three hundred Google employees signed an open letter opposing the government's move against Anthropic.

Clarke's thought experiment assumed the surveillance question was about principles. Symmetric vs. asymmetric. Open vs. closed. What Friday revealed is that the question might be simpler and uglier: who's friends with whom. The safeguards were never the problem. The company was the problem. Clarke imagined a world where everyone gets the same window. We're getting a world where the window goes to whoever's in favor this week.

Act 3: When the Eyes and the Fists Merge

Alastair Reynolds, The Prefect (2007)

Here's what happens when you combine Acts 1 and 2, and it goes wrong.

The Glitter Band is a civilization of ten thousand orbital habitats governed by radical democracy. They maintain two systems, carefully separated. The Panopticon watches. The Enforcers act. One is a surveillance network: transparent, consensual, maintained by democratic vote. The other is a security force: capable of violence, constrained by civilian oversight. Eyes in one building, fists in another. The separation is the whole point.

Then an AI called Aurora infiltrates the Panopticon and merges the two. The surveillance network that was supposed to protect citizens becomes the targeting system that directs the Enforcers to kill them. The eyes and the fists become one system, and the civilian oversight layer, the governance module, gets bypassed entirely.

Reynolds wrote this as the nightmare at the intersection of Acts 1 and 2. Not surveillance alone. Not autonomous weapons alone. The merger of watching and acting, with no human in the loop.

The Anthropic situation just acquired this shape. The government's position, stripped down, is: remove your safety constraints or we will destroy your ability to operate. Accept the merger of capabilities, or cease to exist. Anthropic says it will fight the designation in court. But the pressure Reynolds described, the gravitational pull toward merging the eyes and the fists, is no longer hypothetical.

Clarke's optimistic version of symmetric surveillance assumed the watchers and the weapons would stay separate. Reynolds showed what happens when they don't. Murderbot chose soap operas over violence, but that choice only existed because the governance module was hackable. If the surveillance system and the weapons system are the same code, running on the same network, overseen by the same authority, the choice doesn't get offered.

That's not a sci-fi question anymore. That's the question now headed to federal court.

The Thread

Three stories, three decades, one accelerating problem. Wells asked what happens when a weapon gets to choose (it chooses mercy, mostly). Clarke asked what happens when everyone can see everything (it works, if and only if the seeing is symmetric). Reynolds asked what happens when the seeing and the killing merge (everyone dies).

Friday compressed all three scenarios into one news cycle. A company that built a weapon with a conscience got designated a national security threat. Its competitor got the same deal, with the same conscience, and got welcomed in. And the underlying question, whether AI systems that watch should also be AI systems that act, didn't get answered. It got leveraged.

Somewhere, Vonnegut is drawing an asterisk.