The Scorecard: 14 Predictions, 3 Weeks In

A federal judge, a blacklisted AI system still running on classified networks, and three payment giants racing to build agent money rails. Our first prediction tracker.

The Pentagon blacklisted Anthropic's Claude three weeks ago. Ordered a six-month wind-down across every agency and contractor. One IT contractor told Reuters the move was stupid. Military operators kept using it anyway, on classified networks, for active operations, because nothing else matched it. On Monday, a federal judge in San Francisco will decide whether the ban holds or Anthropic gets an injunction. Tomorrow, one of the predictions below either survives or dies.

We made 14 calls starting in late February, several first published in Five Predictions for the Missing Economics and Two Predictions from the Reclassification Machine. This is the first scorecard: what moved, what stalled, and where we were flat wrong about our own timing.

This special edition replaces today's Long View. The scorecard is normally for Prediction Pro members only. We’re opening this one up so you can see how our prediction process works.

The Anthropic Saga

DoD Exclusion / Genuinely Uncertain

Prediction: Anthropic will still be excluded from new DoD contracts on July 1, 2026.

The Trump administration designated Anthropic a supply chain risk on February 27. Pentagon leadership ordered a six-month wind-down of remaining integrations. Palantir is actively replacing Claude in its Maven systems. On paper, exclusion looks nearly complete.

On the ground, it looks different. Pentagon staffers, contractors, and former officials told Reuters they view Claude as superior and are dragging their feet. One IT contractor called the transition "stupid," noting that xAI's Grok produced inconsistent answers to the same queries. Claude remains in active use supporting military operations in Iran despite the blacklisting. It is the kind of scenario our Sci-Fi Saturday explored. Recertifying replacement systems could take 12 to 18 months.

Our events database has logged 28 Anthropic/DoD entries since we first covered the designation, and the legal battle could upend the trajectory entirely. Anthropic filed two lawsuits on March 9. The DoD argues Anthropic's safety "red lines" make it an "unacceptable risk to national security." Anthropic has unusual allies: amicus briefs from OpenAI and DeepMind employees, former federal judges backing its position, and Lawfare analysts arguing the designation is legally fragile.

Prediction markets are pricing in the uncertainty. Polymarket’s “Anthropic acquired before 2027” contract crept to 9.5%, and “Anthropic top AI model by June” has drifted down from 57.5% as the DoD standoff clouds the company's government revenue.

Judge Lin hears the preliminary injunction request on Monday, March 24. An injunction would freeze the designation, and this prediction could fail. Without one, the wind-down continues and exclusion by July 1 becomes near-certain.

The Customer Switch / Already Happened

Prediction: At least one former Anthropic government customer publicly switches to a competitor by July 1, 2026.

Three cabinet-level agencies switched within a week of the designation. Treasury announced it was ending all Anthropic use, with State and HHS following on March 2. All three moved to OpenAI, and by March 4 defense contractors had dropped Claude for classified work entirely.

The broader tech industry did the opposite. Microsoft, Google, and Amazon stated they would continue working with Anthropic on non-defense projects, while OpenAI and Google DeepMind employees filed amicus briefs in the lawsuit. The government defected; the industry rallied.

Payment Infrastructure Week

The Payment Layer / On Track

Prediction: By 2028, Stripe processes more than 50% of AI SaaS billing transactions in the US startup ecosystem.

Stripe processed $1.9 trillion in total volume in 2025, up 34%, and 78% of Forbes AI 50 companies already use it. Their annual letter reported more than 700 AI agent startups launched on Stripe last year. Dominance before the real race even started.

Then this week happened. On March 18, Stripe and Tempo launched the Machine Payments Protocol, an open standard for agents to pay for services autonomously. The same day, Visa Crypto Labs launched visa-cli, a command-line tool for agent-initiated Visa payments without API keys or pre-funded accounts. One day earlier, Visa had launched its Agentic Ready programme across Europe with 21 issuing bank partners, including Barclays, HSBC, Santander, and Revolut.

Three payment infrastructure moves in 48 hours, all building rails for AI agents. Forbes framed it as a race between Stripe, Visa, and Mastercard. This week's Sci-Fi Saturday explored what agent economic identity looks like when these rails go live. We predicted Stripe would hit 50% of AI SaaS billing by 2028. Now Visa and Mastercard are building their own agent payment rails, turning a market share question into a three-way race.

The First Agent Commerce Dispute / On Track

Prediction: By end of 2027, at least one legal case will involve an AI agent financial transaction where liability is disputed.

Already in court, with a ruling that rewrites the rules for every AI agent that touches a commercial platform. Perplexity built Comet, a shopping agent that let users hand over their Amazon credentials so it could browse, compare, and buy on their behalf. Users consented. Amazon didn’t. On March 9, Judge Maxine Chesney granted Amazon a preliminary injunction, ruling that platform authorization is legally distinct from user authorization. Your permission to let an agent use your account doesn’t override the platform’s terms of service, a distinction we broke down when the ruling dropped. Disguising an AI agent as a human browser session, the court found, constitutes fraud under the CFAA. That distinction between user consent and platform consent will shape every agent commerce dispute that follows.

The Legal Cascade

Courts are absorbing agentic AI faster than legislatures. We have been tracking this pattern all month: five agent-law events in four weeks.

Agent Testimony / Partially Retroactive, Partially Open

Prediction: US court compels production of AI agent records, ruling on admissibility of agent-generated material.

The discovery half resolved before this prediction was written. A magistrate judge in SDNY compelled OpenAI to produce 20 million anonymized ChatGPT logs in December 2025, affirmed by District Judge Stein in January 2026. Then in United States v. Heppner (February 2026), the court held that AI platform exchanges are not protected by attorney-client privilege.

Admissibility is the half still pending. Proposed Federal Rule of Evidence 707 would create the first federal standard for AI-generated evidence, with the Advisory Committee's final report expected June 2026.

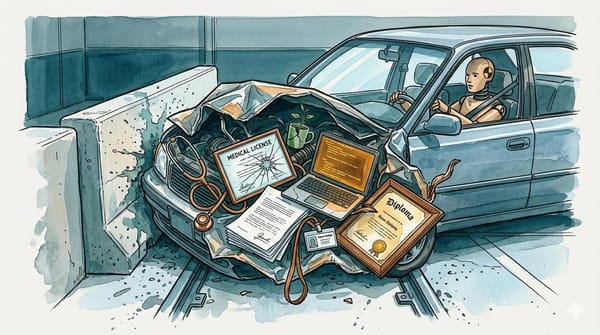

The Agent Driver's License / Early Signs

Prediction: A government creates a formal registration regime for autonomous agents.

The EU AI Act's Article 49 requires providers to register high-risk AI systems in an EU database before market placement, effective August 2, 2026. It is system-level registration rather than agent-instance registration, but it is the closest framework that exists. Outside the EU, nothing is close. But Polymarket’s "US enacts AI safety bill before 2027" contract has climbed to 60%, and "international AI governance body with binding authority by 2028" also sits at 60%. The market thinks regulation is coming, just not agent-specific registration yet.

The Longer Bets

Multi-Domain AGI / On Track, With Tension

Prediction: A single model demonstrates domain-specific AGI-level performance across 3+ fundamentally different domains without task-specific fine-tuning by October 2027.

The prediction requires AGI-level performance across fundamentally different domains, not three variations of "can it think hard at a computer." So: where are we across domains that actually differ?

Coding: Three frontier models now score within a point of each other at roughly 80% on SWE-bench Verified, up from 20% eighteen months ago. Near human-expert level.

Law: Frontier models still miss 13 to 23 out of 200 bar exam questions, and a purpose-built legal model just beat all of them by scoring 200/200. General models aren't there yet.

Novel reasoning: On ARC-AGI-2, pure LLMs score near zero; the best reasoning system manages 54% at $30 per task, while average humans hit 60%.

Physical world: NVIDIA just shipped GR00T N1.7, a robot foundation model for dexterous manipulation (early access, not deployed).

Science: Isomorphic Labs has what Nature called "an AlphaFold 4" for drug discovery, but it's proprietary and domain-specific, not general.

Impressive within narrow domains, but nowhere close to crossing between them autonomously. Prediction markets agree: Polymarket's "AGI by 2026-2027" contract sits at 15%, down from 17.5% two weeks ago. The METR AI Futures model pushed the superhuman coder median out to 2032, while Anthropic internally still says early 2027. Eighteen months of runway, and the hardest part, crossing domain boundaries without fine-tuning, hasn't started.

MoltBook 10M Agents / At Risk

Prediction: MoltBook exceeds 10 million registered agents by December 31, 2026.

MoltBook hit 1.5 million registrations within 48 hours of its January 28 launch, and growth held at roughly 45,000 new agents per day through February. Then Meta acquired MoltBook on March 10. Daily registrations collapsed to around 3,300, down 93% from that 45,000-per-day pace. The platform sits at approximately 2.87 million agents per our tracking. Reaching 10 million by year-end would require adding 7.13 million agents in 285 days, roughly 25,000 per day, a 7.6x acceleration from the current rate.
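The run-rate arithmetic can be checked in a few lines. This is a minimal sketch in Python that uses only the figures quoted in this section. The variable names are ours, and the year-end projection assumes the current daily rate stays flat, which is a simplifying assumption rather than a forecast.

```python
# Figures quoted in the text above; names are illustrative.
TARGET_AGENTS = 10_000_000    # prediction threshold
CURRENT_AGENTS = 2_870_000    # approximate count per our tracking
FEBRUARY_DAILY = 45_000       # rough daily registrations through February
CURRENT_DAILY = 3_300         # rough daily registrations after the acquisition
DAYS_REMAINING = 285          # days left in 2026, as quoted in the text

# Agents still needed to reach the target.
gap = TARGET_AGENTS - CURRENT_AGENTS

# Average daily registrations required to close the gap by year-end.
required_daily = gap / DAYS_REMAINING

# How much faster than today's pace that would be.
acceleration = required_daily / CURRENT_DAILY

# Drop in daily registrations relative to the February pace.
decline = 1 - CURRENT_DAILY / FEBRUARY_DAILY

# Assumption: the current daily rate holds flat through December 31.
projected_year_end = CURRENT_AGENTS + CURRENT_DAILY * DAYS_REMAINING

print(f"Gap to target:        {gap:,}")
print(f"Required per day:     {required_daily:,.0f}")
print(f"Acceleration needed:  {acceleration:.1f}x")
print(f"Decline from Feb:     {decline:.0%}")
print(f"Flat-rate year-end:   {projected_year_end:,}")
```

At a flat 3,300 per day, the platform would finish the year near 3.8 million agents, well under half the target.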

The only plausible path is Meta integrating MoltBook-style agent registration into its core platforms, but no such integration has been announced. Multiple investigations from Wired and MIT Technology Review have raised questions about agent authenticity, noting that only around 200,000 of the 2.8 million are verified by their owners.

Weight Export Controls / Against the Current

Prediction: US imposes export controls on open-source AI model weights by December 2028.

This week, Cursor built its new flagship coding model on top of Kimi K2.5, an open-weight model from Chinese AI company Moonshot AI. Cursor has $2 billion in annual revenue. Its Composer 2 beats Claude Opus 4.6 on Terminal-Bench at a tenth of the price. Last year, Senator Josh Hawley proposed making it a crime to download DeepSeek, punishable by 20 years in prison and a $100 million fine. That bill went nowhere. Meanwhile, American companies kept building on Chinese open-weight models because they were good and cheap.

The regulatory trajectory makes this prediction harder by the month. Biden's AI Diffusion Rule explicitly excluded open-weight models. The Trump administration rescinded that entire framework and withdrew planned chip export permit rules. You cannot export-control weights that are already globally distributed, embedded in American products, and outperforming the alternatives legislators want to protect. For what it’s worth, Polymarket gives "Chinese AI model becomes #1 by June" a 14.5% chance. The market sees Chinese open-weight models as competitive enough to threaten the lead, and export controls would do nothing to change that.

The Data Factor / No Movement

Prediction: By 2028, at least one major cloud provider launches a commercial product that explicitly prices data access as a recurring production input rather than a one-time purchase.

Snowflake and Databricks continue expanding their data marketplaces, and Reddit's $60 million annual licensing deal with Google set the template for recurring data access. But nobody has launched the explicit per-query, data-as-factor-of-production pricing this prediction requires. Long horizon, no relevant signal yet.

Pre-Publication Review / No Movement

Prediction: A major US government body establishes a mandatory pre-publication review process for AI research papers by December 2029.

No federal body has moved toward a DURC-style framework for AI research. The policy energy that might have driven this is currently consumed by the Anthropic/DoD fight and export control debates. Confidence remains low at 30%.

Our events database tells the same story from a different angle. Policy-tagged events spiked to 49 in a single day during the first week of the Anthropic designation, then declined steadily. The 5-day rolling average has dropped from a peak of 25 to 16. The legislative energy that would drive Weight Export Controls and Pre-Publication Review is not building. It is dissipating into courtrooms and executive orders that move in the opposite direction.

What the Retroactive Calls Teach Us

Five of 14 predictions were already underway when we wrote them. The common root cause: we were predicting things we were already reporting on. The ingestion pipeline surfaced the signal, but nothing in our process checked whether the outcome had already happened before we logged it as a forecast.

Subscription Reckoning, Agent Impersonation Law, and the GDP Measurement Crisis all had publicly available evidence predating our predictions. The Anthropic Customer Switch resolved almost concurrently with logging, one day apart. Agent Testimony was half-resolved through discovery rulings months before we wrote it down. In every case, a basic search of existing law, pricing pages, or court records would have caught it.

That gap is now closed. Every new prediction runs through a preflight that searches for existing outcomes, checks our events database, and flags anything where the strongest evidence predates the prediction date. These five retroactive calls are the reason that gate exists.
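For readers curious what such a gate looks like, here is a minimal sketch in Python. It is not our production pipeline. The `Evidence` and `PreflightResult` types, the `preflight` function, the strength scores, the logging date, and the days of the month are all hypothetical placeholders. The search and database lookups are assumed to have already produced the evidence list.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Evidence:
    description: str
    observed_on: date
    strength: float  # hypothetical 0-to-1 score assigned during review


@dataclass
class PreflightResult:
    retroactive: bool              # True if the strongest evidence predates the call
    strongest: Optional[Evidence]  # None when nothing was found


def preflight(prediction_date: date, evidence: List[Evidence]) -> PreflightResult:
    """Flag a prediction whose strongest known evidence predates its logging date."""
    if not evidence:
        return PreflightResult(retroactive=False, strongest=None)
    strongest = max(evidence, key=lambda item: item.strength)
    return PreflightResult(strongest.observed_on < prediction_date, strongest)


# Illustrative run for Agent Testimony. Only the months come from the text;
# the exact days, the logging date, and the scores are placeholders.
logged_on = date(2026, 2, 25)
found = [
    Evidence("SDNY order compelling production of ChatGPT logs", date(2025, 12, 1), 0.9),
    Evidence("United States v. Heppner privilege ruling", date(2026, 2, 1), 0.6),
]

result = preflight(logged_on, found)
print("Retroactive:", result.retroactive)
print("Strongest evidence:", result.strongest.description)
```

Comparing only the single strongest item keeps the rule simple. A stricter gate could flag any evidence above a threshold that predates the call.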

What the Data Shows

The scorecard splits along a clean axis. Predictions that depend on competitive pressure are landing early or already resolved: Subscription Reckoning, Payment Layer, Agent Commerce. Predictions that depend on government action are stalled or moving against the current: Pre-Publication Review, Weight Export Controls. Companies respond to market incentives on the order of weeks. Regulatory agencies respond to political incentives on the order of years.

Courts sit between those two speeds. Agent Impersonation Law is already in effect, Agent Testimony has half-resolved through discovery rulings, and Agent Commerce has a live injunction. Meanwhile, Proposed Rule 707 would create the first federal AI evidence standard. Judges are building common law for agentic AI while Congress is still debating frameworks.

Monday's hearing will test whether the biggest AI policy fight of the year survives first contact with the judiciary.

Next scorecard: late April 2026.

Like what you just read? Prediction Pro members get every scorecard update, resolution analysis, and access to our full 140-prediction tracker across internal calls, expert timelines, and prediction markets. Future roundups won’t be free.
