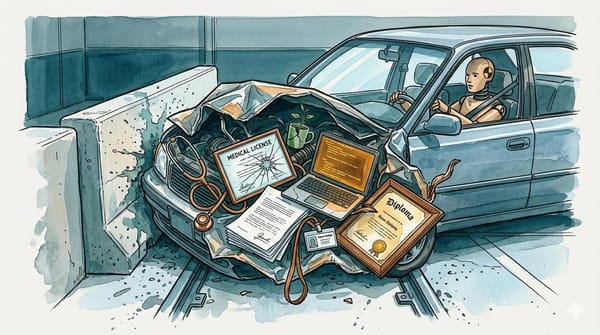

Promise Nothing, Claim Everything

A 59-question comparison of AI terms of service found six competing companies converged on the same answer: no warranties, no liability, and your data used for training.

A team of researchers at Trinity College Dublin and Hugging Face, an AI research company and open-source model platform, set out to map the differences between AI companies' terms of service. They built a 59-question codebook (a structured scoring rubric that defines precisely how to evaluate each clause), trained human reviewers to apply it consistently, and turned them loose on the consumer-facing terms of Claude, ChatGPT, Gemini, Copilot, Le Chat (Mistral's consumer chatbot), and DeepSeek. (A note for builders: the study covers consumer terms of service only. Enterprise and API agreements often carry different data training defaults, liability frameworks, and sometimes actual service level agreements.) The terms were archived on November 6, 2025. The resulting paper was submitted to the Association for Computing Machinery (ACM) Conference on Fairness, Accountability, and Transparency (FAccT) 2026. Its authors are Harshvardhan J. Pandit, a data privacy researcher at Trinity College Dublin; Dick A. H. Blankvoort; Adel Shaaban; Sasha Luccioni, an AI ethics researcher at Hugging Face; and Abeba Birhane, a cognitive scientist at Trinity College Dublin. They expected to find variation. They found near-uniformity.

The Symmetry

The findings, laid out across a systematic comparison framework drawn from EU consumer protection law, were uniform to a degree the researchers themselves found striking.

None of the six defines quality standards for outputs. All six disclaim accuracy and fitness for any particular purpose. All six place liability on the user. All six train on user data by default. Five of six prohibit using outputs to train competing models. Across the codebook's 59 questions, the answers were, with a handful of exceptions, the same regardless of which company wrote the contract or whether the user was paying for the service.
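To make the codebook idea concrete, here is a minimal sketch of how coded answers could be stored and checked for uniformity. It uses only three findings stated above; the question wording, the `CodedQuestion` class, and the yes/no encoding are illustrative assumptions, not the paper's actual codebook or tooling.

```python
from dataclasses import dataclass

SERVICES = ["Claude", "ChatGPT", "Gemini", "Copilot", "Le Chat", "DeepSeek"]


@dataclass
class CodedQuestion:
    """One codebook question with a yes/no answer per service (hypothetical schema)."""
    text: str                 # illustrative wording, not the paper's
    answers: dict[str, bool]  # service name -> coded answer

    def is_uniform(self) -> bool:
        # Uniform means every service received the same coded answer.
        return len(set(self.answers.values())) == 1

    def outliers(self) -> list[str]:
        # Services whose answer differs from the majority answer.
        values = list(self.answers.values())
        majority = values.count(True) >= values.count(False)
        return [s for s, a in self.answers.items() if a != majority]


def all_services(value: bool) -> dict[str, bool]:
    """Helper: the same coded answer for every service."""
    return {s: value for s in SERVICES}


# Three findings reported in the article, encoded as yes/no answers.
questions = [
    CodedQuestion("Defines quality standards for outputs?", all_services(False)),
    CodedQuestion("Trains on user data by default?", all_services(True)),
    CodedQuestion("Prohibits training competing models on outputs?",
                  {**all_services(True), "DeepSeek": False}),
]

uniform = [q for q in questions if q.is_uniform()]
divergent = {q.text: q.outliers() for q in questions if not q.is_uniform()}

print(f"{len(uniform)} of {len(questions)} questions are uniform")
print(divergent)
```

Encoding each clause as a fixed question with one answer per service is what makes a comparison like this repeatable: a second reviewer can re-code the same terms and the two tables can be compared cell by cell.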

Users paying $20 a month for ChatGPT Plus or $20 for Claude Pro might reasonably expect that a paid subscription buys stronger legal protections: clearer warranties, some liability coverage, or a commitment not to train on their data. It does not. Across all six services, paid and free users operate under the same terms. The subscription fee buys faster response times and priority access to the company's servers during peak demand. The contractual terms are identical.

The Question

Anthropic and OpenAI poach each other's researchers. Google and Microsoft race on enterprise deals. Mistral positions itself as the European alternative to American incumbents. DeepSeek emerged from High-Flyer Capital Management, a Chinese quantitative trading firm, to undercut everyone on cost. They compete on every visible axis. So why did they all write the same contract?

Three Theories

Three explanations show up in the paper and the broader legal literature, none mutually exclusive.

The template theory is the simplest. Law firms recycle boilerplate. The first major AI company to hire a technology attorney got a set of terms derived from decades of software licensing, and the rest copied the homework. Legal language propagates through industries the way code dependencies do; the same "AS IS" disclaimers appear in enterprise software-as-a-service contracts, mobile app license agreements, and AI chatbot terms. There is a version of the AI industry where six general counsels independently googled "AI terms of service template" and converged on the same three blog posts from the same two law firms. The problem with this theory is scope. It requires Mistral's lawyers in Paris and DeepSeek's counsel in Hangzhou to be working from the same American playbook. Cross-border legal transplantation happens, but it does not typically produce clause-for-clause identity across six companies in three countries.

The rational floor theory comes from game theory. In a competitive market, consumer protection is a first-mover disadvantage. If Anthropic offered a warranty on Claude's outputs, it would bear costs its competitors do not. If OpenAI guaranteed accuracy, it would expose itself to litigation the others avoid. Every company independently calculates the least generous terms the market will accept. Since no company faces meaningful consequences for offering less, they converge on the floor. No coordination required. The Nash equilibrium, the point where no player benefits from unilaterally changing strategy, is "disclaim everything." When every competitor disclaims everything, no one gains by offering more.
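The logic can be checked with a toy model. The sketch below reduces the market to two firms, each choosing between disclaiming everything and offering a warranty, and searches for strategy pairs from which neither firm gains by switching alone. The payoff numbers are invented purely for illustration: they assume a warranty costs the firm that offers it and wins it no customers, which is the premise of the theory, not a measured result.

```python
from itertools import product

STRATEGIES = ("disclaim", "warrant")

# PAYOFF[(mine, theirs)] = my payoff. Hypothetical values chosen so that
# offering a warranty is costly and brings no offsetting market gain.
PAYOFF = {
    ("disclaim", "disclaim"): 3,
    ("disclaim", "warrant"): 3,
    ("warrant", "disclaim"): 1,
    ("warrant", "warrant"): 2,
}


def is_nash(a: str, b: str) -> bool:
    """True if neither firm can gain by unilaterally switching strategy."""
    a_stays = all(PAYOFF[(a, b)] >= PAYOFF[(alt, b)] for alt in STRATEGIES)
    b_stays = all(PAYOFF[(b, a)] >= PAYOFF[(alt, a)] for alt in STRATEGIES)
    return a_stays and b_stays


# Enumerate every pair of strategies and keep the stable ones.
equilibria = [pair for pair in product(STRATEGIES, repeat=2) if is_nash(*pair)]
print(equilibria)  # [('disclaim', 'disclaim')]
```

Under these assumed payoffs, disclaiming is the better choice whatever the other firm does, so mutual disclaiming is the only stable outcome. Change the numbers so that a warranty attracts enough customers to outweigh its cost and the equilibrium moves, which is the lever regulation or consumer pressure would have to pull.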

The inheritance theory places AI terms in a longer tradition. Software has shipped "AS IS" since the 1980s. The Uniform Commercial Code, the US body of law governing commercial transactions, and its warranty provisions were designed for physical goods; software vendors argued successfully that code was too complex and too dependent on user behavior to warrant the same protections. Four decades later, the doctrine is load-bearing infrastructure. It runs underneath every layer of commercial software, and now underneath AI systems that generate text indistinguishable from professional work product. AI companies inherited that legal architecture wholesale, but the fit is worse than it looks. A word processor crashing is an inconvenience. An AI system generating fabricated legal citations that a lawyer submits to a court, producing medical advice that a patient follows, or writing defamatory content that gets published under a human byline carries consequences the original "AS IS" framework never contemplated.

The Tells

The six companies diverged in four places.

DeepSeek is the lone exception on output training rights. Its terms of use permit users to train their own models on DeepSeek-generated outputs. No other service allows this. For a company competing primarily on cost and openness, this is a competitive differentiator, not a concession.

Anthropic went further than the template on data collection. Its current privacy policy specifies that clicking a thumbs-up or thumbs-down on a response re-enrolls that conversation in training data, even if the user had previously opted out of contributing to model training. A user who navigated Anthropic's settings and explicitly disabled training data contribution has their preference overridden by a single click on a UI element that looks like a quality feedback button. The feedback mechanism, presented as a tool for improving responses, functions as a consent override — a dark pattern that converts routine user behavior into a data collection event.

Mistral's terms include a clause prohibiting users from publicly reporting safety incidents without prior written consent. This is not a standard confidentiality provision. Article 73 of the EU AI Act (Article 62 in the Commission's original proposal) establishes incident-reporting obligations designed to ensure that serious safety failures in high-risk AI systems reach regulators. A contractual gag clause on safety reporting sits in direct tension with that framework. Mistral is also the only service that explicitly references the General Data Protection Regulation and the AI Act in its consumer terms, which means its lawyers are aware of the regulatory landscape they may be contradicting.

Microsoft's Copilot terms state that the service "may include advertising." Among the six services studied, it is the only one that explicitly reserves the right to use conversational data for ad targeting.

In Practice

Terms of service are only as meaningful as their enforcement.

Anthropic disabled approximately 1.45 million accounts across Claude products in the second half of 2025. In January 2026, the company tightened safeguards against third-party harnesses spoofing Claude Code, auto-banning accounts that triggered abuse filters. Some of those bans were mistakes, affecting legitimate users, and had to be reversed. The terms give the company unilateral authority to terminate access at any time. Users whose accounts are disabled have no equivalent mechanism to hold the company accountable when the service underperforms.

The output-training restriction tells a different story. OpenAI's terms prohibit using outputs to develop competing models. When evidence emerged that DeepSeek employees had circumvented access restrictions to distill OpenAI's models (training DeepSeek's own AI on OpenAI's outputs to extract the larger model's knowledge), OpenAI did not file a breach-of-contract lawsuit. Instead, it sent a memo to the U.S. House Select Committee on Strategic Competition. The same provision that applies to individual users was invoked through Congress when a competitor crossed it.

No user has successfully sued any of the six for output quality, data misuse, or the blanket liability exclusions the terms impose.

The Test

The paper was submitted as a related contribution to the European Commission's Digital Fairness Act consultation, which closed in October 2025. (The paper's terms were archived in November 2025, after the formal consultation window, placing the submission as supplementary input rather than a response within the consultation period.) The authors, using the EU's Unfair Contract Terms Directive (UCTD) and Unfair Commercial Practices Directive as their analytical framework, identified three provisions across the six services that map onto terms flagged in the UCTD's indicative annex as potentially unfair. The annex is not prescriptive; it lists terms that member states may treat as unfair, not terms that are automatically void. But the three provisions the researchers flagged are not borderline cases. They include unilateral modification clauses that allow companies to change terms without meaningful notice, exclusive jurisdiction clauses that force disputes into the provider's home court (DeepSeek requires all disputes to be filed in Hangzhou, China, with no carve-out for European users), and blanket liability exclusions that may exceed what consumer protection law permits.

The regulatory ground is shifting at variable speed. The AI Act's transparency and accountability requirements are rolling out in phases, with further obligations taking effect in August 2026 and August 2027. The Digital Fairness Act consultation is specifically examining whether existing consumer protection rules adequately cover AI services. The UCTD itself already provides enforcement mechanisms; the question has always been political will, not legal authority. The legal architecture these six companies built may have worked in 2024 and 2025, when enforcement bodies were still learning the terminology.

Prior work mapped parts of this territory. A Stanford Human-Centered AI Institute study in October 2025 highlighted privacy risks across chatbot platforms, and a ranking by privacy service Incogni in June 2025 scored the same services on data practices. What the Trinity College Dublin and Hugging Face team added was the systematic codebook approach, turning what had been impressionistic comparisons into a structured, replicable analysis.

The Equilibrium

Six companies in three countries, operating under different legal systems, converged on functionally identical terms for the part of the business that users interact with least: the contract.

This is an equilibrium, the stable outcome of rational actors operating without constraint. Every company independently determined that the optimal strategy is to promise nothing and claim everything, and none of them found a reason to deviate.

---

The paper "Terms of (Ab)Use: An Analysis of GenAI Services" by Pandit, Blankvoort, Shaaban, Luccioni, and Birhane has been submitted to FAccT 2026. Project page: aial.ie/research/terms-of-abuse.