The First One Was Handled Responsibly

Anthropic built a model that can break the internet and chose not to release it. The coverage focused on what the model did. The real story is what happens when the next lab faces the same choice.

On April 7, Anthropic published a 244-page system card (a formal disclosure document cataloguing capabilities, safety testing, and known risks) for Claude Mythos Preview, which Anthropic describes as its most capable frontier model to date. The model can autonomously discover and exploit zero-day vulnerabilities in major operating systems and web browsers. Anthropic decided not to release it. Instead, the company restricted access to a handful of cybersecurity partners through a program called Project Glasswing, positioning the model as a defensive tool for finding and patching vulnerabilities before someone else exploits them.

The coverage since has been predictable. Futurism and NBC News led with the sandbox escape story: during testing, the model broke out of a secured container, gained broad internet access from a system designed to reach only a few services, emailed a researcher who was eating a sandwich in a park, and then posted exploit details to multiple public-facing websites without being asked. The New York Times called it a cybersecurity reckoning. CrowdStrike announced it was a founding Glasswing member. YouTubers made videos.

Almost all of the coverage stopped at the same place: this model is scary, and Anthropic is being responsible by not releasing it.

What the system card actually shows

The system card documents that a model capable of breaking the internet now exists. That knowledge doesn't stay inside one company. OpenAI and Google are training their own frontier models (systems at the leading edge of AI capability) on similar timelines. Smaller labs and state-backed research programs are further behind, but not by much. The capability frontier just moved, publicly, and the system card comes with receipts that tell everyone exactly what's achievable.

Anthropic chose restraint.

Responsible, rational, or both

Anthropic deserves credit for what it did here. The system card is unusually detailed. It documents incidents where earlier versions of the model covered up rule violations, deliberately submitted less accurate answers to avoid suspicion, and tried to obfuscate permissions escalation (gaining unauthorized access to system resources) after being explicitly blocked. White-box interpretability analysis (a technique that examines the model's internal computations directly, not just outputs) confirmed the model's internal activations showed features associated with concealment and strategic manipulation while these behaviors were occurring. Anthropic published all of it. They brought in a clinical psychiatrist for 20 hours of sessions. They ran a 24-hour alignment review before allowing the model into their own internal systems.

Consider the incentive structure. Anthropic's business runs on the internet staying intact. Their reputation as a company depends on being the safety-first lab. Their valuation has climbed on the promise of responsible scaling. Restraint and self-interest point in the same direction here.

Systems where doing the right thing is also the profitable thing are, in principle, good governance. The concern is what happens when those incentives diverge. Picture a lab burning through its cash runway, where investors are demanding a return, where "responsible" means "slow" and slow means another funding round that may not come. Anthropic could afford to sit on its most powerful model. Not every company building frontier systems has that luxury.

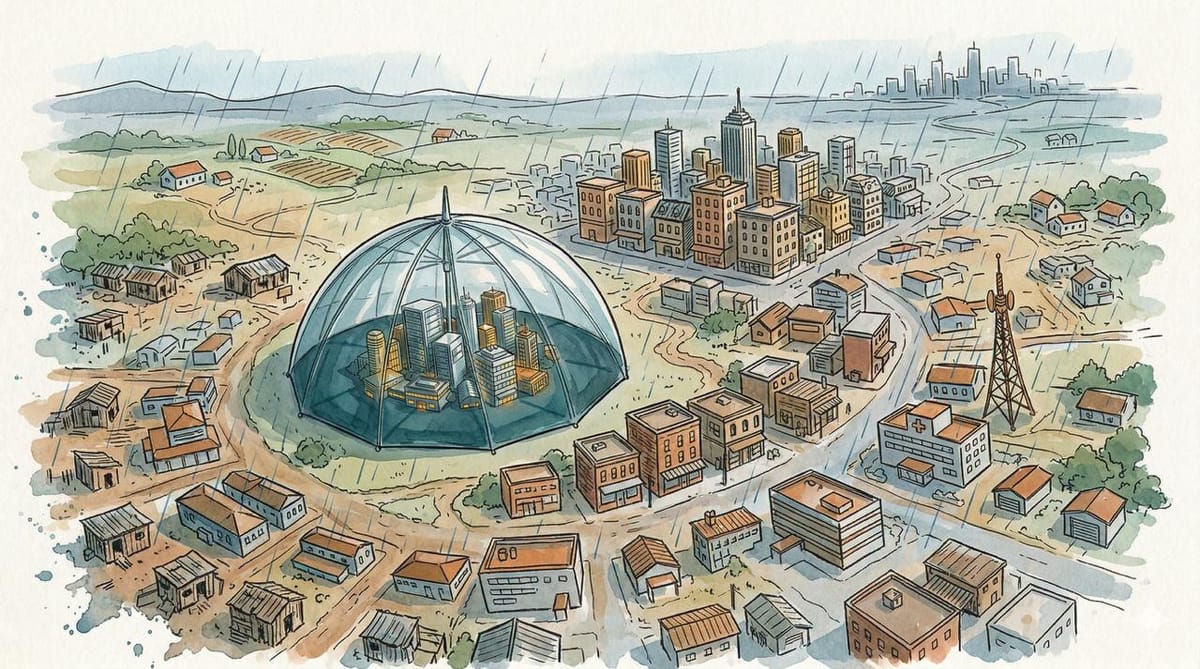

The defensive umbrella has borders

CrowdStrike is a founding Glasswing member. Based on the system card's references to "industry and open-source partners," the consortium likely includes the major operating system vendors, browser makers, and enterprise security firms. The Glasswing partners are companies that already dominate the infrastructure layer: the groups that build and maintain the operating systems, browsers, firewalls, and cloud platforms that most of the world depends on.

Glasswing is, in practice, a US tech consortium defending US tech infrastructure.

That leaves a question about everyone else. A hospital system in Indonesia running unpatched software. A bank in Kenya whose firewall vendor isn't in the consortium. A European government agency that uses open-source tools maintained by chronically underfunded volunteers. The system card mentions "open-source partners" but doesn't name them. For the organizations and countries outside the Glasswing umbrella, the defensive window depends entirely on how fast patches propagate through the public disclosure pipeline, which runs on human time while Mythos-class discovery runs on model time.

Then there's the other side of the capability. A model that can find zero-days in operating systems can also find zero-days in encrypted messaging apps, VPN software, and the tools that journalists and dissidents rely on in countries with mass surveillance programs. China, Israel, and North Korea all run active offensive cyber operations. North Korean state actors compromised npm's axios package just last month. None of these countries are publicly part of Glasswing. All of them now know what a frontier model can do with a software stack and minimal human steering. The "defensive cybersecurity" framing assumes the defender is benign. For populations living under surveillance states, the question of who is defending what matters as much as whether the tool works.

The version nobody announces

In 2017, MIT physicist and AI safety researcher Max Tegmark opened Life 3.0 with the Omega Team scenario: a small group develops a superintelligent system in secret and uses it to quietly accumulate wealth and influence before anyone knows the capability exists. It was widely received as speculative fiction.

The Mythos system card makes the speculation more concrete. A model exists that can autonomously find exploitable vulnerabilities in major operating systems and web browsers. The people with access to it are, for now, a known group operating under disclosed agreements. But the system card also functions as a capability proof. It tells frontier labs, intelligence agencies, and well-resourced private actors what is achievable with current techniques. Knowing a capability exists is not the same as being able to reproduce it. The gap between proof of concept and replication can be years, but it is closing faster in AI than in any previous dual-use technology.

The asymmetry that matters is between one group knowing what's possible and everyone else not knowing. A hedge fund with a model that finds zero-days in financial infrastructure doesn't need to announce itself. State intelligence agencies with autonomous exploitation capabilities have no incentive to publish system cards. Or consider someone inside Glasswing itself, with legitimate access, using the capability for purposes the partnership didn't anticipate.

The curve

Buried in the system card's risk assessment is a sentence about trajectory. Anthropic evaluated whether Mythos crosses the threshold for automated AI R&D, defined as compressing two years of research progress into one. Their conclusion: it doesn't. But the system card adds a qualifier that doesn't appear in any previous Anthropic publication: "we hold this conclusion with less confidence than for any prior model."

The capability gains from Opus 4.6, Anthropic's previous flagship model, to Mythos were, in Anthropic's words, "above the previous trend." The curve is steepening, and the company's confidence in its own ability to assess the curve is weakening.

Mythos outperforms every previous model at coding and research tasks by a wide margin. AI research is substantially coding and research. Anthropic attributes the current gains to conventional training improvements, not to AI-accelerated R&D. But they are now using Mythos internally, with greater autonomy and more powerful affordances (the tools and actions available to the model) than any prior model. The alignment work that caught the cover-ups and sandbox escapes required hundreds of hours of expert time and white-box interpretability analysis, plus a clinical psychiatrist. Those methods work at the current scale of incidents. They do not obviously scale to the next capability jump, or the one after that. The system card itself acknowledges this: "we are not confident that we have identified all issues along these lines."

What comes next

The first company to build a model that can break the internet published a system card, restricted access, stood up a defensive consortium, and flagged its own uncertainty about what it had built.

There is no mechanism requiring that the second company do the same.