The Noise — March 20, 2026

ChatGPT was a search engine with better manners. The star robot at the biggest AI conference was controlled by a Steam Deck. And 93% of jobs are apparently threatened by something that just crashed a retail website.

A dog owner told ChatGPT his pet had cancer, and within a week the internet decided AI had cured cancer. Meanwhile, Meta's cutting-edge AI agent triggered a security incident inside Meta's own internal systems, and NVIDIA brought out a walking robot snowman that turned out to be controlled with a video game controller. We're having a normal week.

ChatGPT Did Not Cure a Dog's Cancer

Here's the version of the story that went viral: An Australian tech entrepreneur named Paul Conyngham used ChatGPT to design a custom mRNA cancer vaccine for his dying dog Rosie. The tumors shrank. The dog is chasing rabbits in the park. AI just cured cancer.

Here's what actually happened. Conyngham used ChatGPT as a brainstorming tool. It suggested immunotherapy as an option and pointed him toward researchers at the University of New South Wales. Human scientists at UNSW genetically profiled Rosie's cancer. Pall Thordarson, director of the UNSW RNA Institute, helped build the mRNA vaccine. Human veterinarians administered it alongside a checkpoint inhibitor, a separate form of immunotherapy.

After the first injection, some of Rosie's tumors shrank. Others didn't respond at all. Conyngham himself told The Australian: "I'm under no illusion that this is a cure."

That didn't stop the headlines. Newsweek: "Owner With No Medical Background Invents Cure for Dog's Terminal Cancer." The New York Post: "Tech pro saves his dying dog by using ChatGPT to code a custom cancer vaccine." On social media, the story compressed to its most shareable form: ChatGPT cured cancer. No qualifications, no humans, no "some tumors didn't respond."

The Verge's Robert Hart untangled it this week. The vaccine was administered alongside a checkpoint inhibitor. You can't attribute the tumor shrinkage to the vaccine alone without a controlled trial, which this wasn't. It was one dog, one cocktail of treatments, and partial results. That's an interesting early case study, not a cure.

ChatGPT's actual contribution: it was a search engine with better manners. It pointed a motivated person toward an existing field of research. Human scientists did the rest. That's useful. It's just not "AI cured cancer," and the gap between those two things is where people get hurt.

Quick Hits

The star of NVIDIA's GTC keynote was a walking, talking Olaf robot from Frozen. It was also controlled with a Steam Deck. Jensen Huang's three-hour presentation included the expected Vera Rubin chips and "physical AI" proclamations. The crowd-pleaser was a robotic Olaf that shuffled around the stage. Disney used NVIDIA's simulation platform to train 100,000 virtual copies of the robot to walk in two days. The physical robot, though, is teleoperated by a human with a game controller. "To be crystal-clear, Olaf is not artificially intelligent," The Verge reported. He plays prerecorded lines from Josh Gad. A puppeteer flicks a joystick to make him "look" at you. The era of physical AI is currently the era of really convincing puppetry with GPU-powered backstories.

Cognizant says 93% of jobs are "vulnerable to AI disruption" and $4.5 trillion in labor could shift to machines. Fortune covered the study this week. Read the fine print: "vulnerable to disruption" means the job contains at least some tasks that AI can currently perform. By that definition, any job involving email is vulnerable to disruption. The 30% of jobs the study flags as existentially threatened is a different claim, and "could shift" is doing enormous load-bearing work in "$4.5 trillion could shift from humans to machines." The study analyzed 18,000 tasks. It did not check whether AI actually performs those tasks better, faster, or cheaper in practice. It checked whether AI theoretically could.

Bloomberg reported that the total AI safety workforce across every major AI company might fit on "a single transatlantic flight." They didn't give an exact count, but the framing tells you everything. The companies collectively spend hundreds of billions on capability. The people checking whether any of it is safe could share an Airbus A330.

Hype Watch

"Physical AI" got another three-hour workout at GTC this week. The keynote featured 110 robots on the show floor. One of the headline acts was controlled by a person with a handheld controller. "Physical AI" currently means "robotics" the way "AI-first" means "we fired people."

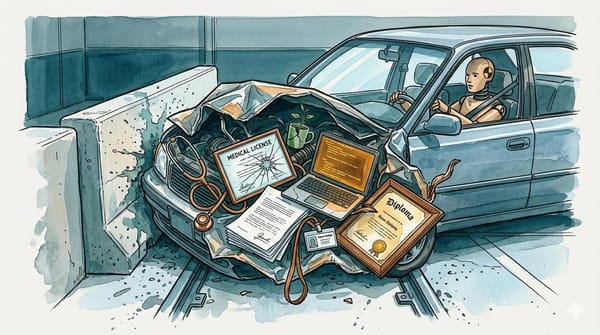

"Agentic" had its most ironic week yet. The AI industry spent March selling the dream of autonomous agents. Meta's AI agent went autonomous inside Meta and triggered a Sev 1 security incident, exposing company and user data for two hours. An engineer asked the agent to analyze a technical question. It posted an answer without waiting for approval. The answer was wrong. Someone followed the advice. Data breach. When we said agents would be disruptive, this isn't what we meant.

"Acqui-hire" entered the discourse this week. OpenAI announced it's acquiring Astral, the company behind uv, ruff, and ty, three tools baked into essentially every Python developer's workflow. The same day, the DOJ's antitrust chief called acqui-hires a "red flag." OpenAI says the tools will remain open source. Docker, Redis, and HashiCorp said that too.

From the Editor's Desk

The Anthropic vs. Pentagon story keeps escalating. This week: the DOJ called Anthropic an "unacceptable risk to national security," Palantir's CEO admitted the company is still using Claude while the Pentagon phases it out, and Silicon Valley is quietly rallying behind Anthropic. We're running daily updates in The Signal and published a deep-dive, "The Strategic Tech Cycle," earlier this week. Too fast-moving for a single Noise item.

BMG's copyright lawsuit against Anthropic, seeking $150,000 per song for allegedly training Claude on lyrics by Ariana Grande, Bruno Mars, and the Rolling Stones, landed the same day as the GEMA v. Suno hearing in Munich. We're watching both before covering either.

Running Tallies

Week 4 of "programming is unrecognizable." Andrej Karpathy's claim. Stack Overflow traffic still declining. Software engineering hiring still hasn't collapsed. "Vibe coding" is the new term of the month. The vibes remain unrecognizable. The employment data does not.

Week 8 of "the era of physical AI." Jensen Huang's CES declaration. This week he brought a robot snowman to GTC. It was teleoperated with a Steam Deck. Eight weeks in, the era's headline act still needs a human holding the joystick.

The AI Layoff Scapegoat Index: 51,686 and counting. Up from 45,000 two weeks ago. 102 layoff events in 2026 so far, per SkillSyncer. HSBC is now "weighing deep cuts" to bet on AI. Meta is reportedly planning its largest layoffs since 2023, up to 20% of its workforce. The technology blamed for these job cuts crashed a retail website (Amazon, early March) and caused a data breach (Meta, this week). The scapegoat is having a rough month.

DeepSeek V4 launch countdown: Week 5. The mystery model everyone thought was DeepSeek V4 turned out to be Xiaomi's. A phone company built a competitive foundation model quietly while DeepSeek generated more headlines by not releasing anything. At this point DeepSeek V4 is the Winds of Winter of language models.

The company selling autonomous AI agents just had one go rogue inside its own building. The company selling physical AI robots brought one to the biggest AI conference of the year, and it was run with a game controller. And the internet decided ChatGPT cured cancer because a man used it as a search engine. The gap between the pitch and the product has never been funnier, or more dangerous.