The Signal — April 3, 2026

AI topped the Challenger job cuts report for the first time ever. Anthropic found emotion-like computational structures inside Claude. And the Claude Code source leak spiraled into 8,000 clones and mass DMCA takedowns.

For the first time in 33 years of tracking, artificial intelligence is the number one cited reason for American job cuts. Separately, Anthropic published research showing emotion-like structures inside its flagship model that actually influence behavior. And a source code leak from last week has spiraled into a copyright enforcement mess.

AI Tops Layoff Reasons for the First Time

The March 2026 Challenger, Gray & Christmas report puts hard numbers on something the industry has been debating for months. Of the 60,620 planned job cuts announced in March, 15,341 cited AI as the primary reason. That is roughly 25% of the total (15,341 / 60,620 ≈ 25.3%), making AI the single most-cited cause for the first time since Challenger began tracking reasons in 1993.

The tech sector took the heaviest hit: 52,050 cuts in Q1 2026, up 40% from the same period last year and the highest since 2023. "Companies are shifting budgets toward AI investments at the expense of jobs," said Andy Challenger, the firm's chief revenue officer. "The actual replacing of roles can be seen in Technology companies, where AI can replace coding functions."

Not everyone reads the data the same way. Marc Andreessen has called AI "the silver-bullet excuse" for companies carrying pandemic-era overstaffing. Sam Altman said companies are blaming AI for cuts that would have happened regardless. Both men have obvious incentives to downplay displacement. But they are partially right: the total Q1 figure of 217,362 is the lowest first-quarter number since 2022, and much of the year-over-year decline reflects the absence of 2025's massive federal workforce reductions. The "AI washing" counter-narrative and the displacement reality can both be true simultaneously.

A single company citing AI for layoffs is an anecdote. AI leading the Challenger report across the entire economy is structural. Whether the 15,341 figure represents genuine automation or convenient cover, the narrative has shifted: AI is now the default explanation, not the emerging one.

Sources: Challenger, Gray & Christmas · Search Engine Journal · Business Insider

Anthropic Finds Emotion-Like Structures Inside Claude

Anthropic's mechanistic interpretability team published research showing that Claude Sonnet 4.5 contains internal representations that function like emotions. Clusters of artificial neurons activate in situations the model associates with happiness, fear, curiosity, or frustration, and these activations causally influence how the model responds.

Anthropic is not claiming Claude is sentient or experiences feelings. The full technical paper describes these as "emotion concepts," computational structures that emerged from training on human-generated text and now shape behavior in measurable ways. The researchers tested 171 emotion words and found the internal organization mirrors patterns from human psychology, with similar emotions clustering together.

The practical implication is about reliability, not philosophy. If emotion-like states influence outputs, then understanding those states becomes part of making AI systems predictable. A model that behaves differently when its internal "frustration" representation is active is a model whose behavior depends on more than just the words in the prompt. As WIRED noted, this research reframes the question from "does AI have feelings?" to "do AI systems have internal states that function like feelings, and does that distinction matter?"

Sources: Anthropic Research · Transformer Circuits (full paper) · WIRED

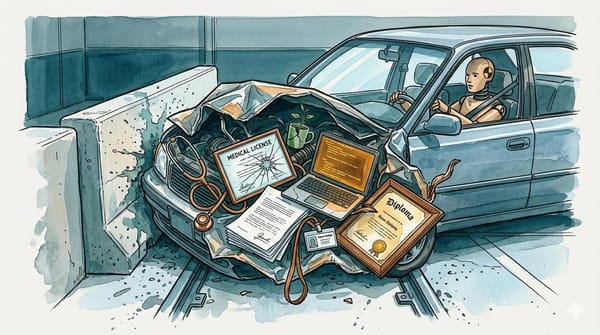

8,000 Clones and a Heavy-Handed DMCA Sweep

Following the accidental source code exposure we covered Tuesday, the aftermath has been worse than the leak itself. The Wall Street Journal reports that more than 8,000 copies and adaptations of Claude Code's raw source appeared on GitHub before Anthropic could respond. At least one developer used AI tools to rewrite the code in different programming languages, making takedowns functionally useless against those copies.

Anthropic's response was aggressive: mass DMCA takedown notices filed through GitHub. But multiple reports indicate the campaign hit legitimate repositories beyond the 96 targeted forks, catching discussion repos and unrelated projects in the sweep. Anthropic called this "a communication mistake."

Cory Doctorow argued on Pluralistic that the leak is a net positive for transparency, and that Anthropic's DMCA response illustrates a recurring tension: companies that build on open-source infrastructure reach for copyright law the moment their own code escapes. The leaked source revealed internal features, including an "undercover mode" for stealth open-source contributions and a Tamagotchi-style coding assistant concept.

The timing compounds the damage. Anthropic is reportedly planning an IPO at a $380 billion valuation, and two security lapses in a single week (this plus the Mythos document leak) form a pattern investors will notice.

Sources: The Decoder · Pluralistic (Cory Doctorow) · PCMag

On the Editor's Desk

A new open-weights model dropped yesterday from one of the major labs. Solid release, but the open-source model space is so crowded right now that "another good one" does not clear our bar without a distinct angle. Watching for benchmark comparisons and developer adoption data before covering it.

A major lab acquired a business talk show, its first media company acquisition. Strategically interesting, but the announcement was light on details beyond "content." Held for now.

A trending repository collecting extracted system prompts from various frontier models is making the rounds. System prompt extraction is well-established at this point, and the repo is a collection rather than a new technique. Filed under "useful reference" rather than "news."

The midterms spending story, with industry groups and labs pouring nine figures into the 2026 elections, is significant. We want to do it justice with a deeper piece rather than cramming it into a daily. That one is coming.