What If Companies Couldn't Use Humans as Liability Shields?

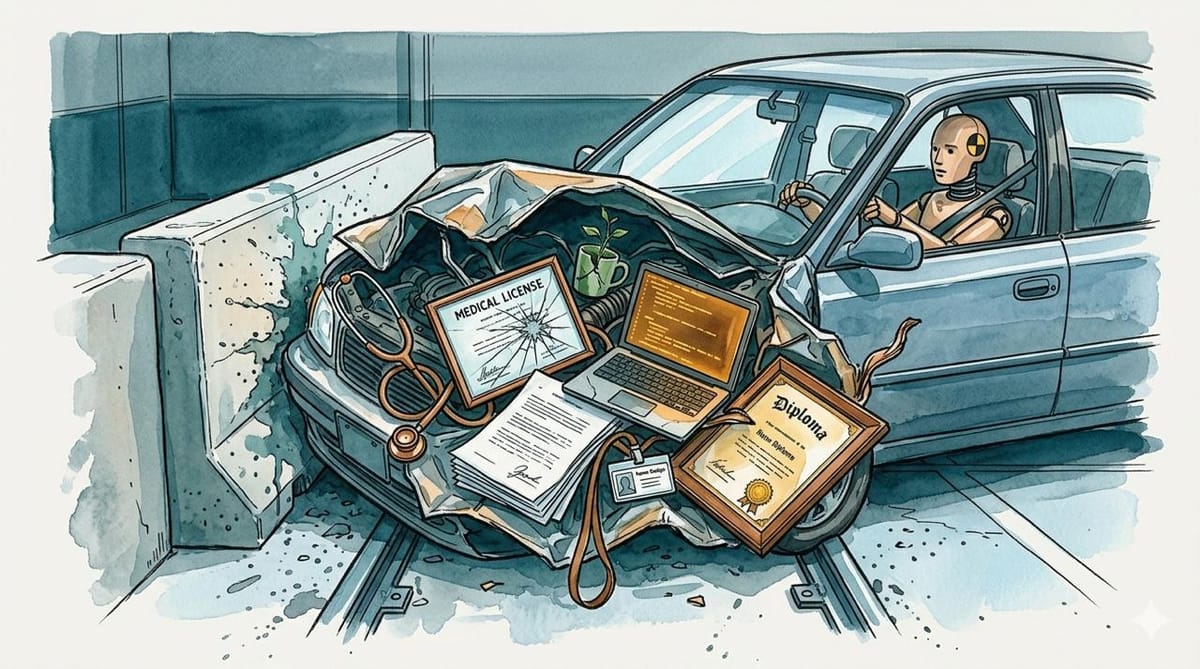

A car's crumple zone is steel that deforms on impact. In AI deployment, the crumple zone is the person who gets fired.

What-If is a Future Shock series that takes a real pattern and asks what happens if one variable changes. The opening scenario below is fiction, a composite built from documented deployment patterns rather than a specific case. Speculative sections are marked; everything else is reporting.

The radiologist at a mid-size hospital in Ohio gets through about 60 scans a day. Twelve of them now come pre-read by an AI system the hospital purchased in January. The AI highlights probable findings, assigns confidence scores, and generates a draft report. The radiologist reviews each one, adjusts the language where needed, signs it, and moves on. She has fourteen minutes per scan, which is more than most of her colleagues get. She considers herself careful.

On a Wednesday in March, the system flagged a chest CT as normal with 94% confidence. The radiologist agreed. The patient's oncologist, reviewing the same scan six weeks later for an unrelated complaint, found a 1.8-centimeter nodule in the left lower lobe that the AI had missed and the radiologist had not caught independently. It was the kind of finding where, at that size, the odds of survival drop with every month of delay.

The malpractice claim named the radiologist. Not the hospital. Not the vendor that sold the AI system. Not the company that trained the model on a dataset that underrepresented the specific presentation. The radiologist, who reviewed the AI's output in the time she was given, with the tools she was provided, and signed her name.

The Crumple Zone

In 2019, a researcher at Columbia named Madeleine Clare Elish published a paper that gave this pattern a name. A moral crumple zone, in her formulation, is a human being positioned inside an automated system so that they absorb the blame when the system fails. The human doesn't meaningfully control the system. They can't see everything it does. Often they weren't told how it works. But they're there, and that's enough to assign fault.

On October 29, 2018, Lion Air Flight 610 took off from Jakarta, climbed to 5,000 feet, and dove into the Java Sea. All 189 people on board died. Less than five months later, Ethiopian Airlines Flight 302 did the same thing outside Addis Ababa. Another 157 dead. Both aircraft were Boeing 737 MAX 8s. Both crashes were caused by MCAS, software Boeing installed to compensate for aerodynamic changes from the MAX's larger engines. MCAS monitored a single angle-of-attack sensor. When that sensor gave a faulty reading, the system pushed the nose down. The Lion Air pilots had never been told MCAS existed; the Ethiopian crew had only the bulletin Boeing issued after the first crash. Both crews fought the system until they couldn't.

Boeing's initial response was to blame the pilots: they hadn't followed the correct recovery procedure. The fact that Boeing had not included MCAS in the flight manual, had not required simulator training, and had designed the system to reactivate itself every five seconds after the pilot corrected it was, in Boeing's framing, context rather than cause.

The pilots were the crumple zone. They absorbed the moral and legal impact of a system failure they had no meaningful ability to prevent. The company that designed, sold, and chose not to document the system walked away from the first crash with its certification intact.

The Pattern Scales

A developer using an AI coding assistant reviews pull requests generated faster than any human can audit. The Cursor/University of Chicago productivity study found that AI tools increased merged pull requests by 39%. The throughput went up. The number of humans reviewing that code did not.
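The arithmetic behind that squeeze can be sketched in a few lines. This is a rough illustration, not a figure from the study: the 600 reviewer-minutes and 20 pull requests per week are hypothetical, and the function name is invented for the example. Only the 39% increase comes from the reporting above.

```python
def review_minutes_per_pr(review_minutes_per_week: float, prs_per_week: float) -> float:
    """Average review time available per pull request, assuming a fixed review budget."""
    if prs_per_week <= 0:
        raise ValueError("prs_per_week must be positive")
    return review_minutes_per_week / prs_per_week


# Hypothetical baseline: 600 reviewer-minutes a week spread over 20 merged PRs.
baseline = review_minutes_per_pr(600, 20)

# Same reviewers, 39% more merged PRs (the study's figure).
with_ai = review_minutes_per_pr(600, 20 * 1.39)

# Share of per-PR review time lost; this depends only on the 39%, not on the baseline.
reduction = 1 - with_ai / baseline

print(f"{baseline:.1f} -> {with_ai:.1f} minutes per PR ({reduction:.0%} less)")
```

Whatever baseline is plugged in, a fixed review budget spread over 39% more pull requests leaves each one with roughly 28% less attention.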

Nobody dies when a pull request has a bug. But the structure is identical. A human is positioned inside a system that moves faster than their judgment can follow, carrying liability for output they can't realistically evaluate.

METR's developer uplift study found that experienced developers were 19% slower with AI tools. One reading: the tools don't help. Another, more plausible reading: the developers who slowed down were the ones actually trying to review what the AI produced. The speedup goes to people who trust and ship. The slowdown goes to people who check.

Ship faster, get promoted. Check carefully, fall behind.

What If They Couldn't?

What follows is the What-If: a speculative policy scenario. The reporting resumes in "The Lobby."

Imagine a world where the company that deploys an AI system is liable for what the system does. Not the operator. Not the reviewer. The deployer.

The Ohio hospital buys the radiology AI. The AI misses the nodule. Under current practice, the radiologist faces the malpractice claim. Under deployer liability, the hospital and the tool vendor carry primary exposure. The radiologist's role becomes what it should have been: a medical expert applying judgment, not a signature absorbing legal risk for a system she didn't build.

The software company ships an AI coding assistant to its engineering team. The assistant generates a vulnerability that reaches production. Under current practice, the developer who approved the pull request is the point of failure named in the postmortem. Under deployer liability, the company that chose to integrate AI into its pipeline owns the output of that pipeline.

This isn't a novel legal theory. Product liability already works this way for physical goods. When a car's brakes fail, the manufacturer is liable, not the driver who pressed the pedal. When a pharmaceutical causes harm, the company that sold it faces the lawsuit, not the pharmacist who filled the prescription. The idea that the entity best positioned to prevent harm should bear its cost is an established principle of product liability. Applying it to AI deployment is an extension, not an invention.

The Lobby

The AI industry is aware this is coming, and it is spending accordingly.

On Sunday, Innovation Council Action, a political group tied to two of President Trump's advisors, announced it would spend at least $100 million on the 2026 midterms. Leading the Future, a group linked to OpenAI co-founder Greg Brockman, has already made millions in contributions to candidates who "champion policies that harness the economic benefits of AI and reject attempts to hinder American innovation," according to ABC News.

Not everyone in the industry agrees. Anthropic gave $20 million to Public First Action, a pro-regulation group that has already run ads thanking legislators for their AI oversight records. The split is real: some companies want deployer liability because it advantages careful operators. Others want the regulatory vacuum preserved because liability is currently free.

OpenAI's own economic blueprint compared AI regulation to early automobile oversight, warning that the UK "stunted" its car industry through regulation while the US grew by staying hands-off. The analogy is instructive in ways OpenAI may not have intended. The US automobile industry did eventually get regulated, after Ralph Nader published Unsafe at Any Speed in 1965 and the public learned that GM had been building cars it knew were dangerous. The regulation didn't kill the industry. It killed the practice of selling defective products and blaming the driver.

The Incentive Shift

Deployer liability changes what companies optimize for. Review infrastructure instead of throughput. Audit capacity instead of merged PRs per quarter.

The EU AI Act moves in this direction by assigning obligations based on risk classification. But it doesn't reach knowledge work, where the crumple zones are informal and the deployment pattern is diffuse. What might work? Possibly strict liability for AI-assisted output in regulated industries, combined with a safe harbor for organizations that can demonstrate genuine oversight rather than theatrical review.

A developer who has time and tooling to meaningfully audit AI-generated code is performing oversight. A developer rubber-stamping pull requests at the rate the system generates them is performing theater. Legislation that could tell the difference would reshape deployment more effectively than any governance framework.

The Skeptic's Rebuttal

The strongest argument against deployer liability is that it slows adoption. If every AI deployment carries litigation risk, companies default to not deploying, and the benefits of the technology reach fewer people. Missed nodules like the one in the opening scenario are a real pattern, but so are the nodules AI catches that radiologists alone would miss. Strict liability could mean fewer AI-assisted diagnoses, which means more missed findings overall.

There's also a measurement problem. "Genuine oversight" versus "theatrical review" is clear in theory and nearly impossible to regulate in practice. How many minutes per scan constitute genuine review? How many lines of code per hour? Any bright line becomes a compliance checkbox that companies optimize around rather than an actual standard of care.

And the political math is working against it. The $100 million flowing into the midterms isn't going to candidates who want to expand liability. It's going to candidates who want to keep the current arrangement, where the technology ships fast and the nearest human absorbs the consequences.

The car industry's crumple zone is made of steel. It deforms on impact so the passenger compartment doesn't have to. In AI deployment, the crumple zone is the person closest to the output, and the deformation is their career, their license, or their name on the lawsuit.