The AI Automation Problem Is Mostly Not AI

Most companies do not need AI agents first. They need clean intake, permissions, handoffs, receipts, escalation, and workflow ownership.

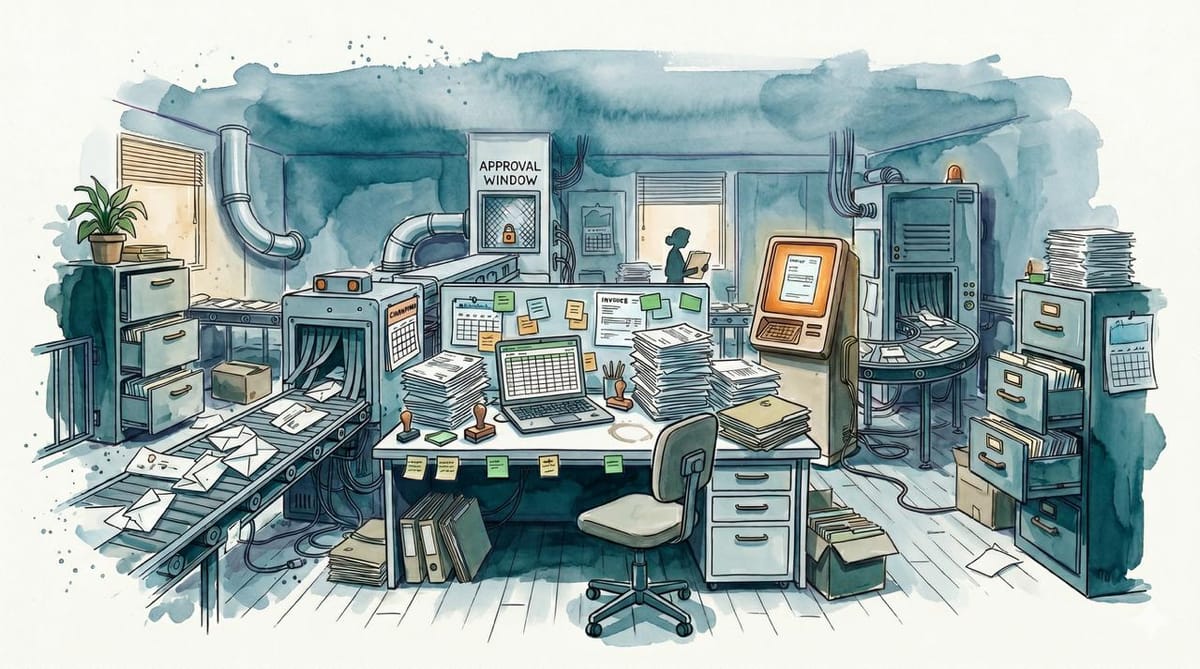

Somewhere in a company you have heard of, there is a spreadsheet called "new-final-v3." It receives invoices. One person knows which column matters, and that person is on vacation. The invoices keep arriving anyway.

This is the environment into which an executive has requested an AI agent.

A self-described automation agency posted on Reddit about shipping more than 30 projects this year. The poster's claimed lesson was the least fashionable one available: many of the projects should not have been automations at all. Clients arrived asking for agents and custom workflow systems. Under the hood, the agency kept finding messy inboxes, half-used CRMs, and one employee who knew the secret version of the process. The hard part was not the model. The hard part was discovering that the workflow lived in someone's head, changed depending on who was working that day, or had no shared definition of what "done" meant.

At that point, automation does not solve the problem. It moves the mess faster.

The Gap Between the Conference Room and Monday

IDC research cited by CIO found that 88% of AI proofs of concept fail to reach widescale deployment. The diagnosis was not "models are bad." It was organizational readiness: unclear ROI, insufficient AI-ready data, immature processes, infrastructure gaps, weak data operations, and a lack of internal expertise. MIT's NANDA initiative, summarized by Fortune/Yahoo Finance, reached a similar place from a different angle: only about 5% of enterprise generative AI pilots achieved rapid revenue acceleration. The report called the gap a learning problem. Enterprise tools were not adapting to company workflows or improving over time.

A demo asks whether a model can perform a task once. A deployment asks whether the organization can absorb the task into a repeatable process. Those are different tests.

A chatbot can read one invoice and identify the vendor. An accounts-payable workflow needs to know which inbox receives invoices, how to match them against purchase orders, what happens when the PO is missing, who approves exceptions, how duplicates are detected, where the audit record lives, and what the system does when the model is unsure. The impressive part is the extraction. The valuable part is the machinery around it.

The model performs the visible trick. The rest of the company still has to do operations.

What the System Actually Needs

The missing layer does not demo well. Nobody raises a funding round by announcing a clean intake form and a reliable escalation path. But most real automation depends on exactly that kind of infrastructure, and the infrastructure is where projects actually die.

Intake. Work has to enter the system in a known place, in a known format, with enough information to act on. A customer request that can arrive through email, Slack, voicemail, a sales rep's memory, or a spreadsheet named "new-final-v3" is not ready for automation. It is ready for anthropology.

Permissions. An AI system that can draft a refund email is different from one that can issue the refund. A tool that can summarize a medical note is different from one that can update the record. Every workflow needs an answer to who can read, who can write, who can approve, who can reverse, and who audits each action. Without that, the automation is either too weak to matter or too dangerous to trust.
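One way to make those answers concrete is an explicit grant table that denies anything it does not list. The sketch below is illustrative only: the role names and grants are hypothetical, and a real system would load them from wherever the company keeps access policy.

```python
from enum import Enum


class Action(Enum):
    READ = "read"
    WRITE = "write"
    APPROVE = "approve"
    REVERSE = "reverse"
    AUDIT = "audit"


# Hypothetical grants for a refund workflow. The AI drafter can read the
# case but cannot write to the system of record or approve anything.
PERMISSIONS = {
    "ai_drafter": {Action.READ},
    "support_agent": {Action.READ, Action.WRITE},
    "finance_manager": {Action.READ, Action.WRITE, Action.APPROVE, Action.REVERSE},
    "auditor": {Action.READ, Action.AUDIT},
}


def is_allowed(role: str, action: Action) -> bool:
    """Return True only if the role was explicitly granted the action."""
    return action in PERMISSIONS.get(role, set())
```

The design choice that matters is the default: an unknown role or an unlisted action gets a no, so adding a new capability requires someone to write it down.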

Handoffs. Most work changes owner: sales to onboarding, support to engineering, a junior employee to a manager, a model to a human reviewer. The handoff is where context gets lost, deadlines disappear, and everyone assumes someone else owns the next step. If the handoff is informal when humans do it, automation will not make it formal by magic.

Deterministic logic. Some work should not touch a language model at all. Check whether a required field exists, route invoices under a threshold to one queue and invoices over it to another, block the workflow when the customer ID is missing, retry a failed API call, and write the log. None of that needs a model. Putting a language model in charge of a rule you already know is buying uncertainty.
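For a sense of how small these rules are, here is a minimal sketch of the invoice routing described above. The threshold value, queue names, and field names are assumptions made up for the example.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed threshold for illustration: 1,000.00 in integer cents.
APPROVAL_THRESHOLD_CENTS = 100_000


@dataclass
class Invoice:
    invoice_id: str
    customer_id: Optional[str]
    amount_cents: int


def route_invoice(invoice: Invoice) -> str:
    """Route an invoice with plain rules; no model is involved."""
    # Block the workflow when the customer ID is missing or empty.
    if not invoice.customer_id:
        return "blocked_missing_customer_id"
    # Invoices under the threshold go to one queue, the rest to another.
    if invoice.amount_cents < APPROVAL_THRESHOLD_CENTS:
        return "auto_queue"
    return "manager_review_queue"
```

The same input always produces the same route, which is exactly the property a known rule should have.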

The model itself. It belongs where language or ambiguity is the bottleneck: reading messy text, turning unstructured requests into structured fields, drafting a response for review, clustering support tickets, summarizing the context a human needs before making a decision. Even there, it needs receipts. What did it read, what did it decide, how confident was it, what changed downstream. If nobody can reconstruct the path, nobody can trust the output when something breaks.
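A receipt can be as plain as one structured log line per model decision. The sketch below assumes a hypothetical `Receipt` record with one field for each of the four questions above; the in-memory list stands in for whatever append-only store a real system would use.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class Receipt:
    input_ref: str          # what the model read
    decision: str           # what it decided
    confidence: float       # how confident it was
    downstream_change: str  # what changed as a result
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_receipt(log: List[str], receipt: Receipt) -> str:
    """Serialize the receipt as one JSON line and append it to the log."""
    line = json.dumps(asdict(receipt), sort_keys=True)
    log.append(line)
    return line
```

With records like this, reconstructing the path after a failure is a search through the log and not an interview with whoever was on shift.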

Escalation. Every automated workflow needs a way to stop being automated. Low confidence, missing data, angry customer, billing change, medical ambiguity, legal risk, repeated retries. These are not edge cases to discover in production. They are design requirements. The handoff to a person should include the evidence, the attempted action, the failure reason, and the next decision needed. Otherwise the human is not supervising the system. They are cleaning up after it.
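Treated as a design requirement, escalation becomes a small function with a fixed output shape. This is a sketch under stated assumptions: the confidence floor, the retry limit, and the `Escalation` fields are hypothetical, and only three of the triggers listed above are modeled.

```python
from dataclasses import dataclass
from typing import List, Optional

CONFIDENCE_FLOOR = 0.8  # assumed cutoff for illustration
MAX_RETRIES = 3         # assumed retry limit for illustration


@dataclass
class Escalation:
    evidence: List[str]    # what the system looked at
    attempted_action: str  # what it tried to do
    failure_reason: str    # why it stopped
    next_decision: str     # what the human must decide


def check_escalation(
    confidence: float,
    retries: int,
    missing_fields: List[str],
    evidence: List[str],
    attempted_action: str,
) -> Optional[Escalation]:
    """Return an Escalation if the work must go to a person, else None."""
    if missing_fields:
        reason = "missing data: " + ", ".join(missing_fields)
    elif retries >= MAX_RETRIES:
        reason = f"gave up after {retries} retries"
    elif confidence < CONFIDENCE_FLOOR:
        reason = f"low confidence ({confidence:.2f})"
    else:
        return None
    return Escalation(
        evidence=evidence,
        attempted_action=attempted_action,
        failure_reason=reason,
        next_decision="approve, correct, or reject the attempted action",
    )
```

The reviewer receives the evidence, the attempt, the reason, and the decision in one record, so the handoff carries its own context.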

The Objection That Matters

There is a serious counterargument: AI can help impose the structure. A company with messy processes does not have to map everything by hand. An LLM can interview employees, summarize ticket histories, cluster recurring requests, extract common decision points, and draft the first version of a workflow map. For companies that have never documented how work actually moves, that is genuinely useful.

The objection is correct, but it describes a different job. Using AI to discover the process is not the same as handing the process to an agent. The output still needs a human owner who can say: yes, this is how the work should happen; no, that exception needs approval; yes, this field is required before the next step runs. AI can produce the first map. It cannot be the institution that approves the map.

Similarly, some workflows really are language-first. Customer support, contract review, sales qualification, medical documentation, recruiting, insurance claims. A deterministic workflow cannot handle all of that with dropdowns and if-statements. Waiting until every input is clean may mean waiting forever.

But language-first does not mean agent-first. The model sits at the uncertain part of the workflow, with boring systems around it. It reads the ticket and proposes a category while deterministic logic checks whether that category requires approval. The system records the decision, routes the exception, and shows the human exactly what the model saw. The model handles ambiguity; the workflow handles accountability.
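That division of labor fits in a few lines. In the sketch below, `propose_category` is whatever model call a team uses; the keyword stub stands in for it so the example runs, and the approval policy and route names are hypothetical.

```python
from typing import Callable, Dict, List

# Hypothetical policy: categories that always need a human approver.
CATEGORIES_REQUIRING_APPROVAL = {"billing_change", "refund"}


def handle_ticket(
    ticket_text: str,
    propose_category: Callable[[str], str],
    audit_log: List[Dict[str, str]],
) -> str:
    """The model proposes a category; plain rules decide the route."""
    category = propose_category(ticket_text)  # the uncertain step
    if category in CATEGORIES_REQUIRING_APPROVAL:
        route = "human_approval"
    else:
        route = "auto_handle"
    # Record exactly what the model saw and what was decided.
    audit_log.append(
        {"model_input": ticket_text, "proposed_category": category, "route": route}
    )
    return route


def keyword_stub(text: str) -> str:
    """Stand-in for a real model call, used only to make the sketch runnable."""
    return "refund" if "refund" in text.lower() else "general_question"
```

Swapping the stub for a real model changes one argument. The approval rule and the audit record stay where they are.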

That division sounds less exciting than "autonomous agents will run the back office." It also has a better chance of surviving contact with the back office.

The Sequence

The Reddit poster's advice was almost offensively practical: pick one workflow, write every step down, track where the data comes from and where it goes, note every decision point, and run it manually long enough to see the pattern clearly. For the weakest projects, the recommendation was not "let's automate it." It was to run the process manually for a few weeks, document the actual work, clean up the edge cases, then come back.

That advice will not satisfy an executive who wants an AI strategy slide by Friday, but it is still the correct course of action.

The least exciting artifact in the room is usually the valuable one: a workflow document clear enough that a new employee could run the process without asking who knows the secret version. Once that exists, AI has somewhere useful to stand. Before that, it is just another fast thing pointed at a mess.