The K-Curve

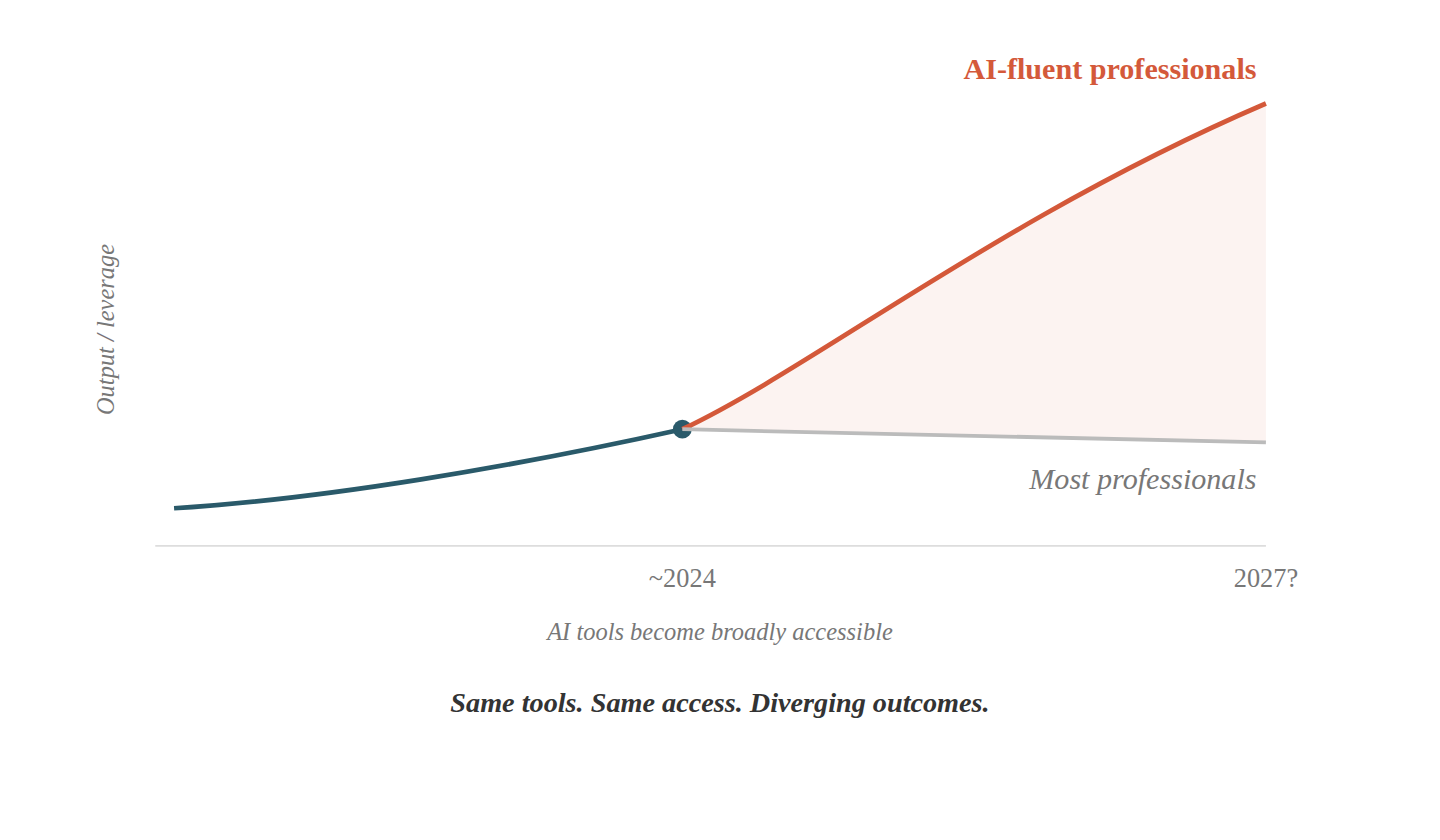

The gap between AI power users and everyone else is widening. The interesting question is when the gap stops being a personal choice and starts being a structural requirement.

This is Part 3 of a three-part series on the future of AI adoption. Part 1: "What If Exposure Breeds Exhaustion?" · Part 2: "What If AI Is Just Bad at Most Things?"

Gallup published its April 2026 workforce survey and the headline number was optimistic: 50% of U.S. workers now use AI in some form. Look one layer deeper and the shape changes, because only 13% use it daily and 28% use it weekly or more, while the other half has never touched it at work. Among those who have, a Larridin analysis of enterprise productivity data found a sixfold gap between power users and average users of the same tools. The divergence is measurable, widening, and shaped like a letter.
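One way to see the shape is to split the quoted shares into non-overlapping bands. The sketch below is only arithmetic on the three figures cited above, and it assumes the categories are nested (daily users counted inside "weekly or more", and both counted inside the 50%). The survey summary quoted here does not spell that out, and the function and band names are illustrative.

```python
# Shares of U.S. workers in percent, as quoted above from the Gallup survey.
ANY_USE = 50         # use AI in some form
WEEKLY_OR_MORE = 28  # use it weekly or more
DAILY = 13           # use it daily


def usage_bands(any_use: int, weekly_or_more: int, daily: int) -> dict[str, int]:
    """Split the workforce into non-overlapping bands.

    Assumption (not stated in the source): the categories are nested, so
    daily users are a subset of weekly-or-more users, who are a subset of
    everyone who uses AI at all.
    """
    return {
        "daily": daily,
        "weekly_not_daily": weekly_or_more - daily,
        "less_than_weekly": any_use - weekly_or_more,
        "never": 100 - any_use,
    }


bands = usage_bands(ANY_USE, WEEKLY_OR_MORE, DAILY)
for name, share in bands.items():
    print(f"{name:>17}: {share}%")

# Share of users (not of all workers) who use AI every day.
daily_share_of_users = DAILY / ANY_USE
print(f"daily users as a share of all users: {daily_share_of_users:.0%}")
```

Under that nesting assumption, roughly a quarter of the people counted in the headline number are daily users, and the largest single band after "never" is the group that uses AI less than once a week.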

Parts 1 and 2 of this series established two claims, that exposure breeds exhaustion rather than enthusiasm and that the tools are mediocre at most things people actually need done. If both are true, then the split in Gallup's data isn't a temporary phase of uneven adoption but something closer to an equilibrium, where the people getting value keep getting more of it while the people who tried and bounced stay bounced. That's the K.

The Smartphone Mirror

Pew Research tracked smartphone ownership from the beginning: by 2011, four years after the iPhone launched, 35% of U.S. adults had one. The majority didn't have one and weren't suffering for it. You could still get directions from a gas station attendant, deposit checks at a teller window, buy concert tickets at a box office counter. The world accommodated both paths without requiring anyone to choose.

Part 2 used the smartphone parallel to illustrate effort-to-value ratios, but this section is about what happened next, when the gap stopped being a personal preference and became an infrastructure problem. Banks closed branches, airlines stopped printing boarding passes at counters, and two-factor authentication started requiring a device in your pocket. The gap went from "I prefer not to" to "I can't access my own bank account."

The pivot wasn't adoption reaching 50% or 70% or 90%. It was when builders started optimizing exclusively for adopters and stopped maintaining the alternative path, an infrastructure decision made by someone else on a timeline that had nothing to do with whether the holdouts were ready.

The Permission to Wait

There is a position in the AI discourse that almost nobody occupies, because nobody has a financial incentive to hold it. Industry needs adoption now because that's revenue, critics need fear now because that's engagement, and investors need adoption numbers to justify valuations built on growth curves that assume universal penetration within a decade. The person with no financial stake in the outcome, the one who might honestly tell a nurse or a teacher or a plumber that AI will probably be great in 2030 and is mediocre right now and they're fine either way, does not exist in the current conversation. That person doesn't have a newsletter to sell or a funding round to close.

The St. Louis Fed reported that generative AI accounts for 5.7% of total work hours even among users, a number that describes a feature rather than a revolution. The Stanford HAI 2026 AI Index found a 50-point gap between expert optimism and public sentiment about AI's trajectory, and both readings are rational given what each group has actually experienced: the experts who build and study these systems see transformation coming, while the public that actually uses them remains less convinced.

Rogers' diffusion curve exists for a reason that has nothing to do with intelligence or sophistication. Early adopters aren't early because they're smarter; they're early because the technology solves a problem they already recognized, in a context where they have the autonomy to act on it. The freelance copywriter using Claude for client proposals saves three hours, keeps those three hours, bills them elsewhere. The ER nurse who might benefit more from AI-assisted triage has a full shift regardless of how efficiently any single task gets done, which means the technology doesn't reach the person who needs it most so much as the person with the most slack to explore it.

That pattern is the adoption inversion: bandwidth predicts adoption, not need. The sectors that would benefit most from AI, healthcare and education and public services and elder care, are the most overworked and most heavily regulated, while the sectors adopting fastest, solo knowledge workers and freelancers with direct control over their own workflows, have autonomy and own their own return on investment. The non-adopters aren't failing; the system is structured so that the person with the problem doesn't have the authority, and the person with the authority doesn't have the problem.

When the Ground Shifts

The smartphone story didn't end with "and everyone was fine not having one," and the question for AI isn't whether the gap closes but when the ground shifts underneath the people who are currently fine standing still.

The first signal is adoption by liability. Epic's ambient AI scribing reached 62% of U.S. hospitals by June 2025, less than eighteen months after launch, and at some point a hospital without AI-assisted documentation isn't "choosing a different approach" so much as providing a demonstrably lower standard of record-keeping. The HITECH Act didn't ask hospitals whether they wanted electronic health records; it made not having them a compliance risk, and the transition happened in years rather than decades. The same dynamic is forming around AI deployment, where not using a tool eventually becomes professionally negligent and the question of personal choice evaporates.

The second signal is the hiring filter. PwC data shows a 56% wage premium for workers with AI skills, more than double the 25% of twelve months earlier. When job postings require AI proficiency the way they currently require spreadsheet proficiency, the gap stops being about productivity preferences and starts being about access to employment. That transition is already underway in software engineering and content production, though it hasn't reached nursing or plumbing or K-12 education or social work, and whether it does depends on how long the tools stay mediocre at those jobs.
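For scale, here is the arithmetic on the two PwC premiums quoted above, as a small sketch. The base wage is a hypothetical round number chosen only for illustration; it does not come from PwC or any other source cited here.

```python
# Wage premiums for AI skills, as quoted above from PwC (as fractions).
PREMIUM_PRIOR = 0.25  # twelve months earlier
PREMIUM_NOW = 0.56    # current figure

# Hypothetical base wage, for illustration only (not from any cited source).
BASE_WAGE = 60_000


def premium_growth(prior: float, now: float) -> tuple[float, float]:
    """Return (ratio of new to old premium, gain in percentage points)."""
    return now / prior, (now - prior) * 100


def wage_with_premium(base: float, premium: float) -> float:
    """Wage of a worker earning `premium` above a given base wage."""
    return base * (1 + premium)


ratio, point_gain = premium_growth(PREMIUM_PRIOR, PREMIUM_NOW)
print(f"premium grew {ratio:.2f}x, a gain of {point_gain:.0f} percentage points")

for label, premium in [("prior", PREMIUM_PRIOR), ("now", PREMIUM_NOW)]:
    wage = wage_with_premium(BASE_WAGE, premium)
    print(f"{label:>5}: {wage:,.0f} against a base of {BASE_WAGE:,}")
```

On these figures the premium grew by a factor of about 2.24, which is a gain of 31 percentage points in a single year.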

The third signal is an analogue of what economists call the Cantillon effect, in which those closest to the source of new money benefit before prices adjust. When new capabilities arrive, the people closest to the source extract value before the market catches up. Developers with early API access ship products while consumer-tier users wait for the price drop, and open-source practitioners fine-tune models months before enterprise SaaS packages the same capability behind a subscription. Equal access at consumer prices doesn't close the gap if the advantage walked through the door six months earlier, because the returns to being first are disproportionate to the effort of being first, and that asymmetry compounds over time even after the tools themselves become widely available.

Nobody knows when the tipping point arrives. It took the smartphone roughly five years, from 2010 to 2015, to go from "nice to have" to "required for modern life." AI's timeline might compress, or the tools might stay unreliable enough that the tipping point is further out than the industry's fundraising decks suggest. Either way, the shift happens when infrastructure stops accommodating non-adopters, not when adoption crosses some arbitrary percentage threshold.

The Honest Objection

"Permission to wait" assumes you have a choice. Ramp Economics Lab tracked the substitution in real time: company spending on freelance marketplaces fell from 0.66% to 0.14% of total spend between 2021 and 2025, while AI model spending rose from zero to nearly 3%. More than half the businesses using freelancers in 2022 have stopped entirely. Those freelancers didn't choose to wait, and the mid-career specialist who lost a job to a candidate two years junior who opened an AI coding assistant in the interview didn't choose to wait either. For specific people in specific roles, the tipping point already arrived without warning and without the permission they were never asked to grant.

The IMF's Working Paper 25/68 complicates the picture in a direction most AI commentary ignores. AI appears to reduce wage inequality, because it hits high-income cognitive jobs first and hardest, while increasing wealth inequality, because capital owners capture the surplus from automation regardless of which jobs get displaced. The K-curve for workers might flatten while the K-curve for owners steepens, and the gap this series has been tracking might not be the one that matters most in the long run.

This objection is genuine and it limits the "permission to wait" argument in ways that matter. The macro story is patience, where most people are fine and the tools will mature and the gap closes as infrastructure delivers AI rather than requiring people to discover it on their own time. The micro story is displacement, where specific people in specific roles are already experiencing the tipping point and patience is a luxury they weren't offered. Both are true simultaneously, and the distance between them is the actual shape of the K.

The Window

The three-part argument this series has made is simple, taken whole. Exposure breeds exhaustion rather than enthusiasm. The tools are mediocre at most things. And the gap between the people getting value and everyone else is real but not yet structural for the majority. All three of those statements are true in May 2026, and all three have expiration dates that nobody can predict with confidence.

What we're watching for isn't an adoption percentage but the infrastructure decisions: the first major employer that makes AI proficiency a baseline hiring requirement across non-technical roles, the first insurance company that adjusts malpractice premiums based on whether a practice uses AI-assisted diagnostics, or the first bank that eliminates the non-app path for a core service. Those decisions get made in boardrooms and regulatory bodies, not by individual workers choosing whether to open ChatGPT before their morning coffee, and when enough of them accumulate the ground shifts regardless of whether the tools have improved enough to deserve it.

Until then, the permission to wait is real, not because the technology is fake or because the gap doesn't exist, but because the gap is still a personal choice for most people and personal choices deserve respect. The moment it stops being a choice is the moment this series stops being reassuring and starts being a warning, and we haven't crossed that line yet.

This concludes the three-part series on the future of AI adoption. Part 1: "What If Exposure Breeds Exhaustion?" · Part 2: "What If AI Is Just Bad at Most Things?"