The Young Lady's Illustrated Primer: When Will AI Actually Teach Our Kids?

A three-tier prediction on AI tutoring, from classroom copilot to Nell's autonomous mentor

What Is The Primer?

In 1995, Neal Stephenson published The Diamond Age, a novel set in a nanotech-saturated future where nation-states have been replaced by cultural enclaves called phyles. The book's centerpiece is the Young Lady's Illustrated Primer: an AI-powered book designed to educate a single child from toddlerhood through adolescence.

The Primer falls into the hands of Nell, a girl growing up neglected in dangerous circumstances. No functioning school. No reliable adults. The book becomes her teacher, her mentor, and, functionally, her parent.

What makes the Primer remarkable as a piece of speculative design is its architecture. The system generates personalized narrative in real time, adapting to Nell's reading level, emotional state, and developmental stage. It doesn't teach through drills or flashcards. It teaches through story. Nell works through an interactive fairy tale where the puzzles she encounters map to whatever she needs to learn next: reading, mathematics, logic, ethics, social reasoning, self-defense. The curriculum is total. The delivery is invisible.

But there's a critical layer Stephenson built into the system. The AI writes the scripts. A human performs them. The Primer's voice belongs to Miranda, a "ractor" (Stephenson's term for a remote actor) who reads the AI-generated text aloud, providing vocal warmth, emotional nuance, and timing that the AI cannot replicate on its own. Miranda doesn't choose what Nell learns. She doesn't design the curriculum. She is, in modern terms, the voice synthesis layer with a pulse.

Stephenson then runs the control experiment inside the novel itself. When copies of the Primer are mass-produced and distributed without human ractors, the outcomes are measurably worse. The AI-only version still teaches. But it doesn't connect. Stephenson's argument, made thirty years before the current AI tutoring boom, is that the human voice layer is not decorative. It is load-bearing.

In a 2024 interview with The Atlantic, Stephenson described his inspiration as a baby mobile: the kind that hangs over a crib with shapes that swap out as the infant develops. "What if you extended that idea to every other form of intellectual growth?" The Primer is that thought experiment carried to its logical extreme.

In the same interview, Stephenson expressed skepticism about current large language models as educational tools. "A chatbot is not an oracle," he said. "It's a statistics engine that creates sentences that sound accurate." The man who imagined the Primer does not think we've built it yet.

Bloom's Two Sigma Problem

To understand why the Primer matters as a prediction, you need to understand the problem it solves.

In 1984, educational psychologist Benjamin Bloom published a study with a finding so stark it acquired its own name: the Two Sigma Problem. Bloom and his graduate students compared three groups of learners. The first received conventional classroom instruction. The second got conventional instruction supplemented with regular testing and feedback. The third received one-on-one tutoring from a human expert.

The tutored students performed two standard deviations above the classroom-only group. In practical terms, the average tutored student outperformed 98% of the classroom-taught students. The effect was enormous.
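The 98% figure follows from the shape of the bell curve. A minimal sketch of the arithmetic, assuming test scores in both groups are roughly normally distributed with the same spread (the function name `share_outperformed` is ours, purely for illustration):

```python
from statistics import NormalDist


def share_outperformed(effect_size_sigma: float) -> float:
    """Share of the comparison group scoring below the average treated student.

    Assumes both groups are normally distributed with equal spread, which is
    a simplification of real classroom data.
    """
    # The standard normal CDF at d gives the fraction of the comparison
    # group that falls below a student sitting d standard deviations up.
    return NormalDist().cdf(effect_size_sigma)


print(f"{share_outperformed(2.0):.1%}")  # prints 97.7%
```

Rounded, that is the 98% in Bloom's headline. The same function works for any effect size, which makes it easy to translate other studies' results into percentile terms.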

Bloom framed this as a problem rather than a triumph because of the obvious follow-up: nobody can afford to give every child a private tutor. The finding became education's most persistent frustration. Decades of research confirmed that personalized attention works better than anything else. Decades of economics confirmed that it doesn't scale. Smaller class sizes help. Better-trained teachers help. Mastery-based learning helps. None of them close the gap.

The Two Sigma Problem has driven forty years of EdTech investment, from intelligent tutoring systems in the 1990s to adaptive learning platforms in the 2010s. Every generation of technology promises to deliver personalized instruction at classroom prices. None have.

But the foundation may be shakier than it looks. In November 2025, Education Next published a skeptical reassessment questioning whether Bloom's two-sigma effect was even real. The original study was small, the methodology had gaps, and replication attempts have produced mixed results. Some researchers now argue the true effect of one-on-one tutoring is closer to 0.5-1.0 standard deviations. Still large. But perhaps not the miraculous gap the field has been chasing.

This debate matters for calibrating predictions. If the target is 2σ, AI tutoring has a long way to go. If the real benchmark is nearer 0.5-1.0σ, we may be closer than we think.

Either way, the Primer is Stephenson's fictional answer to Bloom's problem. Build an AI that delivers personalized, adaptive, one-on-one instruction. Make it cheap enough to copy. Then give it to every child who needs it. The question is no longer whether this is desirable. It's whether the technology can actually do it.

Where We Are Now: The Precursors

The teaching problem is effectively solved. In June 2025, a Harvard randomized controlled trial compared AI tutoring against in-class active learning and found that the AI group outperformed with effect sizes between 0.73 and 1.3 standard deviations. Not against passive lectures, but against the kind of engaged instruction that education researchers already consider best practice. A UK-based RCT published six months later confirmed the results held in real classrooms, not just labs. If Bloom's benchmark is 2σ, AI tutoring is already halfway there. If the skeptics are right that the real benchmark is closer to 1σ, it may already be at the door.
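To make "halfway there" concrete, here is a back-of-envelope sketch that expresses the Harvard range as a share of each candidate benchmark. The helper `share_of_benchmark` is a hypothetical name, and dividing one study's effect size by another's is a rough heuristic, not a formal comparison: the Harvard trial used active learning as its baseline, while Bloom's comparison group was conventional classroom instruction.

```python
def share_of_benchmark(effect_size: float, benchmark: float) -> float:
    """Effect size expressed as a fraction of a benchmark effect size."""
    return effect_size / benchmark


# Effect-size range reported for the Harvard RCT, in standard deviations.
harvard_low, harvard_high = 0.73, 1.3

# Two candidate benchmarks: Bloom's original 2 sigma, and the skeptics' ~1 sigma.
for benchmark in (2.0, 1.0):
    low = share_of_benchmark(harvard_low, benchmark)
    high = share_of_benchmark(harvard_high, benchmark)
    print(f"vs {benchmark:.1f} sigma: {low:.1%} to {high:.1%} of the benchmark")
```

Against 2σ the range runs from roughly a third to two-thirds of the target, so "halfway" describes the middle of the range. Against 1σ, the top of the range already exceeds the benchmark.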

The infrastructure is landing simultaneously. Khan Academy's Khanmigo has reached millions of learners doing Socratic tutoring with GPT-4 (MagicSchool AI reports over six million educator users on a related platform). Google baked education-specific reasoning into Gemini 2.5 through LearnLM. Brookings research published in February 2026 showed that generative AI eliminates the need for pre-authored question banks, collapsing the cost of building adaptive systems for new subjects. Duolingo and competitors like Speak are racing to replace human language tutors with conversational AI. These aren't isolated experiments. They're an industry converging on the same conclusion: AI can teach.

And voice synthesis from ElevenLabs and OpenAI is closing the gap on what Stephenson called the ractor's warmth. Whether it's closing fast enough is a separate question.

The Engagement Gap: Why This Is Harder Than It Looks

Everything described above addresses the teaching problem. Can AI deliver effective instruction? The answer, increasingly, is yes.

But Stephenson understood something the current AI tutoring industry has not yet confronted. The Primer doesn't just teach Nell. It captivates her. She chooses it over everything else available to her. She returns to it voluntarily, day after day, year after year. The Primer isn't competing with a classroom for her attention. It's competing with every other thing a child might do with her time.

In 2026, that competition includes TikTok, YouTube, Instagram, Roblox, Fortnite, and whatever comes next. Billions of dollars in engineering talent are optimized around a single objective: capturing and holding human attention. An AI tutor has to be more compelling than all of it. Not occasionally. Consistently. For years.

The history of educational technology is littered with products that solved the teaching problem and lost the engagement war. Gamified learning apps see usage drop after the novelty period ends. Educational games fail because children can smell instruction disguised as entertainment. Interactive textbooks were dead on arrival. The pattern is consistent: the technology works in controlled studies where someone makes kids use it. It collapses in the real world where kids choose what to do with their time.

Five specific gaps separate current AI tutors from the Primer, and none of them are easy to close.

Narrative pedagogy. Every AI tutor on the market today uses some variant of Socratic Q&A: ask a question, get a guiding response, work through the problem. Nobody is teaching through adaptive interactive fiction. The Primer, by contrast, generates an ongoing story that Nell lives inside, where the plot and the curriculum are fused. Building that requires not just pedagogical AI but generative storytelling that sustains a child's interest across months and years. That's a creative writing problem at industrial scale, and no one has attempted it seriously. And it's worth being honest: there's no evidence yet that narrative-driven pedagogy works better than Socratic Q&A, or even as well. The Harvard RCT that anchors this piece used a Q&A tutor, not a storytelling one. The entire Primer concept rests on an untested assumption that wrapping curriculum in story improves engagement enough to offset the added complexity. It might. It might also turn out that Q&A is just the better tool and Stephenson wrote a beautiful idea that doesn't survive contact with real classrooms.

Emotional modeling. Great human tutors know when to push a struggling student, when to back off, when to crack a joke to break tension, when to raise the difficulty because the student is coasting. Developing this instinct takes years of practice. Current AI systems can detect some emotional signals from text and voice input. Translating those signals into appropriate pedagogical responses in real time, across thousands of different learner profiles, remains unsolved.

Longitudinal personalization. The Primer knows Nell for her entire childhood. It accumulates years of context about her strengths, weaknesses, fears, interests, and growth patterns. Current AI tutoring systems are session-based. They optimize a single interaction. Building a system that maintains and usefully applies years of learner history is an engineering and privacy challenge that no product has tackled at scale.

The attention economy itself. Even a perfect Primer exists inside a device ecosystem designed to pull attention away from it. The Primer in the novel is a physical book with no competing apps. A real-world Primer would live on the same tablet where TikTok is one swipe away.

Regulatory friction. Any AI system that maintains longitudinal profiles of children runs headfirst into COPPA in the US and equivalent child data protection laws elsewhere. FERPA governs student education records in schools that receive federal funding. These aren't theoretical obstacles. They add months or years to deployment timelines, particularly for the Classroom and Homeschool milestones that involve minors' learning data stored over extended periods.

There's a version of this that anyone who's listened to an audiobook already understands. A good voice actor can take a mediocre book and make it unforgettable. A bad one can ruin a masterpiece. Anyone who's driven eight hours to visit family, for a holiday they're not entirely sure was worth the drive, knows this: the right voice pulls you into a different world and keeps you there. The wrong voice makes you switch to a podcast by hour two.

That's the ractor argument. Miranda's voice is what makes the Primer stick. And the modern parallel is already here: ElevenLabs and OpenAI are cloning voices with enough fidelity that the distance between a synthetic narrator and a human one is shrinking by the month. Voice actors are already licensing their performances to AI companies. The infrastructure for a modern ractor layer exists. The question is whether a cloned voice carries the same emotional weight as a live one, especially for a child who needs to feel seen, not just heard.

One speculative step further: what if the "voice" of your AI tutor were someone you already watch and trust? A YouTuber, a creator whose personality you're already attached to. A modern ractor model. It's an interesting concept, but the practical obstacles are real: licensing, quality control, and the uncanny valley of a familiar voice saying unfamiliar things.

The Three-Tier Prediction

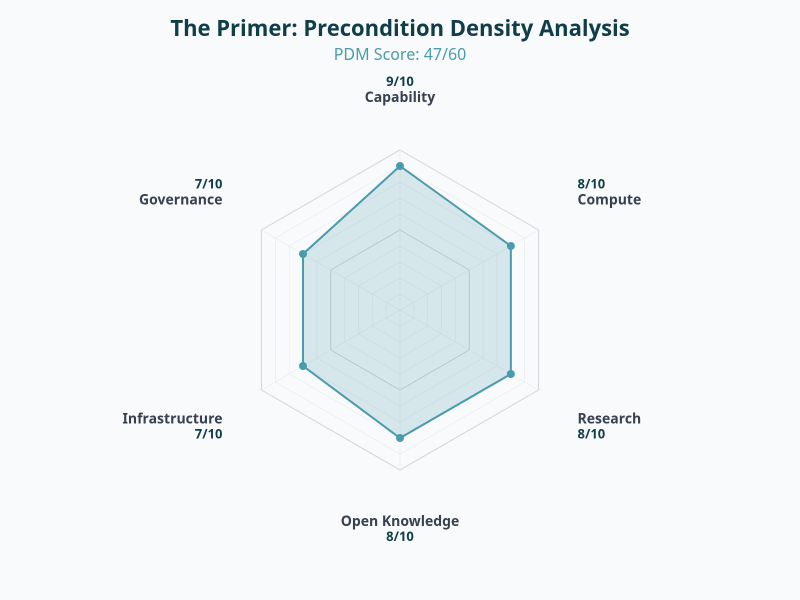

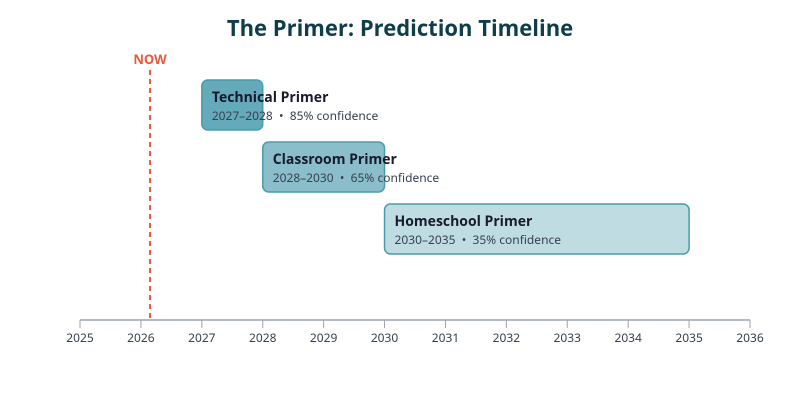

Rather than a single prediction, the Primer maps to three milestones of increasing difficulty. Each builds on the last, but they require different capabilities and face different obstacles. (The Primer was first flagged in our Sci-Fi Saturday predictions analysis with a PDM score of 47/60. This post is the full deep-dive.)

A note on how we define these milestones: traditional education research runs on peer-reviewed RCTs with multi-year timelines. A product shipped in 2027 wouldn't have journal-validated results until 2030 at the earliest, after IRB approval, a full academic year of data collection, and the publication cycle. By then we'd be on version five of the thing. These milestones are designed to track real-world adoption and observable outcomes, not academic publication schedules.

Milestone 1: The Technical Primer

Definition: An AI system that teaches a K-12 subject through adaptive narrative (not Socratic Q&A), commercially available or in public beta.

This is the proof of concept. Not "does it work at scale" or "do kids choose it voluntarily" but simply: does someone build it? Does a product exist that teaches through story rather than question-and-answer?

The pieces are all on the table. LLMs can generate coherent long-form narrative. They can teach effectively (the Harvard RCT is strong evidence of that). They can adapt difficulty in real time. What nobody has done is assemble these capabilities into a product where the curriculum and the story are fused. The missing piece is a product insight, not a research breakthrough.

There's also a self-fulfilling element to this prediction. The concept of narrative-driven AI pedagogy is sitting in plain sight, described in detail by Stephenson thirty years ago, and the technology to build it exists today. The precondition density is high enough that articulating the gap could help close it. If an EdTech PM at Khan Academy or Google reads a clear description of what's missing, the time from insight to prototype could be measured in months, not years.

PDM Score: 47/60 (strong precursor density)

| Signal | Estimate | Confidence |
|---|---|---|
| PDM Model | 2027-2028 | 85% |
| Editor | Dec 2026 | 60% |

The editor's more aggressive timeline reflects a judgment that this is a product assembly problem, not a research problem, and that the motivation across the EdTech industry is high enough that the right framing could compress the timeline significantly.

Milestone 2: The Classroom Primer

Definition: A school district or education provider publicly deploys an AI tutoring system where teachers monitor AI-driven individualized instruction, with published student outcome data showing measurable improvement. District reports, internal studies, or preprints count.

This is the realistic version. Not "replace the teacher" but "restructure the classroom so the teacher's attention goes where it matters most."

The architecture: AI handles 95% of moment-to-moment instruction. Each student works through personalized content at their own pace. The teacher gets a dashboard showing which students need human attention, flagging confusion, frustration, disengagement, or mastery plateaus. The teacher intervenes for the things humans still do better than machines: emotional rescue, motivation, the irreplaceable "I see you" moment.
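The dashboard idea can be sketched in a few lines. Everything here is hypothetical: the field names, the thresholds, and the `triage` function are illustrative assumptions, not a description of any shipping product. The point is only to show the shape of the logic: turn per-student signals into the four flags named above, then surface the students with the most flags first.

```python
from dataclasses import dataclass
from enum import Enum


class Flag(Enum):
    CONFUSION = "confusion"
    FRUSTRATION = "frustration"
    DISENGAGEMENT = "disengagement"
    MASTERY_PLATEAU = "mastery plateau"


@dataclass
class StudentSnapshot:
    name: str
    recent_error_rate: float        # share of recent answers that were wrong (0-1)
    hint_requests: int              # hints requested in the current session
    idle_minutes: float             # minutes since the last interaction
    sessions_without_progress: int  # consecutive sessions with no new mastery


def flags_for(s: StudentSnapshot) -> list[Flag]:
    """Map raw signals to flags. All thresholds are made-up placeholders."""
    flags = []
    if s.recent_error_rate >= 0.5:
        flags.append(Flag.CONFUSION)
    if s.hint_requests >= 5:
        flags.append(Flag.FRUSTRATION)
    if s.idle_minutes >= 3:
        flags.append(Flag.DISENGAGEMENT)
    if s.sessions_without_progress >= 3:
        flags.append(Flag.MASTERY_PLATEAU)
    return flags


def triage(students: list[StudentSnapshot]) -> list[tuple[str, list[Flag]]]:
    """Return only flagged students, those with the most flags first."""
    flagged = [(s.name, flags_for(s)) for s in students]
    flagged = [(name, flags) for name, flags in flagged if flags]
    return sorted(flagged, key=lambda item: len(item[1]), reverse=True)


classroom = [
    StudentSnapshot("Ana", 0.6, 6, 0.0, 0),  # struggling and asking for help
    StudentSnapshot("Ben", 0.1, 0, 0.0, 0),  # doing fine, never shown
    StudentSnapshot("Cy", 0.2, 0, 5.0, 0),   # drifted away from the screen
]
for name, flags in triage(classroom):
    print(name, [f.value for f in flags])
```

A real system would learn these thresholds rather than hard-code them, and detecting frustration from hint counts alone is crude. The design choice worth noting is that the teacher sees a short, ranked list, not thirty streams of raw data.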

This maps to how the best classrooms already work. Good teachers circulate, check in with struggling students, push advanced students toward harder problems. The AI just makes it possible for one teacher to do this effectively with thirty students instead of five.

It also sidesteps the hardest engagement problems. You don't need the AI to solve emotional modeling perfectly if a human is watching for attention drops. You don't need the AI to compete with TikTok if the student is in a classroom with social expectations and a teacher's presence.

Bloom's Two Sigma reframed: AI instruction alone might get you to 1.0-1.5σ. A teacher monitoring the dashboard, intervening at the right moments, gets you the rest.

| Signal | Estimate | Confidence |
|---|---|---|
| PDM Model | 2028-2030 | 65% |
| Editor | Feb-Mar 2027 | 50% |

The editor's timeline assumes the Technical Primer triggers rapid school adoption. Districts are already deploying AI tutoring tools (Khanmigo is in classrooms now). The jump from "AI Q&A tutor with teacher oversight" to "AI narrative tutor with teacher oversight" is incremental once the product exists. The lower confidence reflects uncertainty about whether outcome data gets published quickly enough to count.

Milestone 3: The Homeschool Primer (Nell's Experience)

Definition: An AI tutoring system marketed to homeschool families demonstrates sustained voluntary usage (average 3+ sessions per week for 6+ months) across 100+ users, with self-reported or standardized test score improvement.
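For anyone scoring this prediction later, the definition can be written down as a check against usage logs. This is one possible reading, with assumptions labeled: "6+ months" is approximated as 26 weeks, the 3-session average is applied per user rather than across the cohort, and the class and function names are hypothetical.

```python
from dataclasses import dataclass

MIN_WEEKS = 26          # assumption: "6+ months" approximated as 26 weeks
MIN_AVG_SESSIONS = 3.0  # average sessions per week, from the definition
MIN_USERS = 100         # cohort size, from the definition


@dataclass
class UserUsage:
    weekly_sessions: list[int]  # session count for each week of use
    improved: bool              # self-reported or standardized score gain


def sustained(user: UserUsage) -> bool:
    """True if the user averaged 3+ sessions a week over 26+ weeks."""
    weeks = user.weekly_sessions
    if len(weeks) < MIN_WEEKS:
        return False
    return sum(weeks) / len(weeks) >= MIN_AVG_SESSIONS


def milestone_met(users: list[UserUsage]) -> bool:
    """True if at least 100 users show both sustained use and improvement."""
    qualifying = [u for u in users if sustained(u) and u.improved]
    return len(qualifying) >= MIN_USERS


cohort = [UserUsage([3] * 26, True) for _ in range(100)]
print(milestone_met(cohort))       # prints True
print(milestone_met(cohort[:99]))  # prints False
```

Note what the check cannot see: whether the usage was voluntary. A parent enforcing three sessions a week would pass this test, so any real scoring would need some evidence about who is choosing to open the app.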

This is the full Diamond Age vision. Nell's Primer. No classroom, no teacher, no social scaffolding. Just the child and the AI.

The teaching part of this is likely solved by the time Milestone 2 lands. The hard part is everything else. Making a twelve-year-old choose this over TikTok, week after week, for six months. Maintaining engagement without a human in the loop to notice when attention drifts. Handling the emotional complexity of a child's life without a teacher who can read the room.

The Primer didn't just teach Nell algebra. It taught her ethics, resilience, how to read social situations, how to navigate a hostile world. That's closer to "AI forms a meaningful long-term relationship with a child" territory, which is equal parts inspiring and unsettling.

The homeschool community is the natural early adopter market. Families already choosing to educate outside the classroom have the highest motivation to try an autonomous AI tutor and the fewest institutional barriers to adoption.

| Signal | Estimate | Confidence |
|---|---|---|
| PDM Model | 2030-2035 | 35% |
| Editor | Dec 2028 | 50% |

The gap between model and editor is largest here. The PDM model sees the engagement problem as genuinely hard and rates precursor density for sustained child engagement as low. The editor's view is that AI capability curves are steep enough to crack the engagement problem within two years of the Classroom Primer shipping, and that the homeschool market's self-selection bias (motivated families, motivated kids) makes the 6-month usage bar more achievable than it looks.

Methodology note: No relevant prediction markets exist for AI tutoring milestones. This is a genuine blind spot in the forecasting community. Expert signals are mixed: Sal Khan is publicly bullish, Stephenson himself is skeptical, Education Next questions Bloom's foundational data, and the Harvard RCT is the strongest positive signal to date.

The Uncomfortable Question

The three milestones above address feasibility. They don't address desirability.

The Primer works in the novel because Nell has nothing else. Her mother is absent, her neighborhood is dangerous, she has no school and no reliable adults. The Primer is a lifeline thrown to a child who would otherwise have none. In that context, an AI that becomes her primary educational relationship is unambiguously good.

But most children are not Nell. Most children have parents, teachers, classmates, friends. For those children, is "my AI tutor is my closest mentor" something to aspire to, or something to worry about?

Stephenson embedded the answer in the novel itself. The mass-produced Primers, distributed without Miranda's human voice, produce worse outcomes. The children who receive them form a collective identity but lack the individual growth that Nell achieves. The technology is the same. The human layer is the variable.

The question, then, is not only "can we build the Primer" but "should a child's primary educational relationship be with a machine?" If the Classroom Primer (Milestone 2) works, it preserves human relationships at the center of education while amplifying them with AI. The Homeschool Primer (Milestone 3) removes the human entirely. The gains might be real. The costs might be invisible for years.

This is not a question with a clean answer. It's a question that will get louder as the technology improves.

What to Watch

Six signals will indicate how fast these milestones are approaching:

Khanmigo longitudinal data. If Khan Academy publishes results from year-long deployments (not just single-session studies), the engagement question gets real data behind it.

Google LearnLM in schools. Google has the distribution to push AI tutoring into classrooms at scale. Watch for pilot program announcements and, more importantly, independent evaluations of those pilots.

Narrative-driven pedagogy startups. Any company attempting to teach through adaptive interactive fiction rather than Socratic Q&A is working on the Primer's core innovation. This space is currently empty.

Voice synthesis benchmarks. Track the gap between synthetic and human voice on measures of emotional connection and trust, particularly with children.

Engagement-duration RCTs. Most current studies measure learning gains from short interventions. The critical metric for the Primer prediction is whether AI tutoring sustains voluntary engagement over months, not hours.

Homeschool EdTech adoption. The homeschool community is the early adopter market for Milestone 3. Families already choosing to educate outside the classroom are the most likely first users of an autonomous AI tutor. Watch adoption rates and satisfaction data from this demographic.

PDM Score: 47/60 | Markets: No relevant prediction markets exist | Expert consensus: Mixed