The Wrong Metrics for the AI Economy

A Substack post wiped billions from the market this week. The scarier part: the numbers we use to measure the economy can't see the "Ghost GDP" already forming underneath.

On Saturday, a Substack newsletter called Citrini Research published a speculative scenario titled "The 2028 Global Intelligence Crisis." By Monday, software ETFs had fallen 4.8 percent. Visa, DoorDash, Uber, and Mastercard each dropped four to six percent. The S&P shed over one percent. Indian IT giants TCS, Infosys, and Wipro sold off in sympathy.

The piece racked up millions of views on X. Fortune, Bloomberg, The Guardian, and Reuters all covered it. CNBC TV18 ran panel discussions. A former sell-side analyst wrote that Citrini represented a bigger threat to Wall Street research than AI itself.

All of this from a blog post that its own authors described as "a scenario, not a prediction."

The Thought Experiment

The Citrini scenario, co-written by James Van Geelen and Alap Shah, imagines a memo from June 2028 looking back at how AI agents dismantled the US economy. The core thesis: the American economy is a "giant rent-extraction layer on top of human limitations." Companies have monetized friction. Things that take time. Things that require expertise. Things that people will overpay to avoid dealing with. AI agents remove that friction. The well-paid people working in the friction economy lose their jobs. They stop spending. Consumer demand collapses. GDP looks fine on paper because productivity is booming, but the money has stopped circulating through actual households.

They coined a term for this: Ghost GDP. Output that shows up in the national accounts but never reaches the real economy. A single GPU cluster in North Dakota generating the output previously attributed to 10,000 white-collar workers in Manhattan. Productivity statistics soaring. Real wages collapsing. "We probably could have figured this out sooner," the fictional memo reads, "if we just asked how much money machines spend on discretionary goods."

It is a compelling piece of writing. It is also, to borrow former sell-side analyst Rupak Ghose's assessment, "neither particularly original in its thesis nor does it provide any new data."

What made it move markets was the delivery.

When Substacks Move Markets

Consider the authors. Van Geelen, a former medic who started writing about markets three years ago, and Shah, who spent two years at Citadel before leaving to build AI companies, published a speculative fiction piece on a blogging platform. Within 48 hours it had triggered a global selloff.

Shah is also CEO of Littlebird, an AI agent company. If autonomous AI agents do disrupt the economy the way his scenario describes, his company stands to benefit. He has been making versions of this argument for 15 years.

Wall Street research notes with similar theses do not cause this kind of reaction. The difference is that sell-side analysts operate under compliance constraints that limit how provocative they can be, while independent newsletters can frame scenarios as dramatic narratives without the usual disclaimers and hedging. The piece read like science fiction. It built suspense. It was, Ghose noted, "hyperbolic, but the author covered himself by saying it was a scenario to flag tail risks."

The unconstrained, narratively compelling version of an idea now reaches millions through social media while the measured institutional version reaches thousands through Bloomberg terminals. That inversion of influence is its own story.

The Implementation Gap

The Citrini scenario assumes a smooth, rapid deployment of AI agents across the economy with zero friction. Every enterprise deploys seamlessly. Every agent works as promised. Every white-collar job falls like a domino.

Reality looks nothing like that. Gartner research projects that more than 40 percent of agentic AI projects will be canceled or fail to reach production by 2027, citing rising costs, unclear business value, and insufficient risk controls. A survey of enterprises found 46 percent cite integration with existing systems as their primary challenge. McKinsey reports that 88 percent of organizations use AI in some form, but only six percent qualify as "high performers" actually capturing significant value from it. JPMorgan, according to figures cited in eFinancialCareers, runs a weekly expense line of two billion dollars and has achieved less than ten percent improvement in coding productivity from AI, with annual savings of just 150 million dollars.

Deloitte published a report titled, without irony, "The Agentic Reality Check."

None of this means AI will not displace workers. Even Sam Altman acknowledged last week that "there's some real displacement by AI of different kinds of jobs." But he also noted that companies are engaging in "AI washing," attributing to AI the layoffs that are really about cost-cutting, poor margins, or over-hiring. Challenger, Gray & Christmas tracked roughly 55,000 layoffs attributed to AI in 2025 alone. Meanwhile, a Yale Budget Lab study using Bureau of Labor Statistics data through November 2025 found no significant AI-related changes in occupational mix or unemployment length. An NBER survey of thousands of C-suite executives across four countries found nearly 90 percent said AI had no impact on workplace employment over the past three years.

These macro-level findings deserve serious weight, but they also have a structural blind spot. Unemployment is a lagging indicator: it measures people who lost jobs, not jobs that were never created. The NBER survey asks executives whether they cut headcount, not whether they quietly stopped approving new roles. The macro data is looking in the rearview mirror. The micro data (the collapse in entry-level postings, the frozen hiring pipelines, the rising underemployment among young graduates) is looking through the windshield. Both pictures can be true simultaneously: existing workers largely kept their jobs while the intake valve for new workers slowly closed.

The displacement is real but does not look the way people expect. It is not mass layoffs. It is not robots marching through office parks. It is quieter than that, and the metrics we rely on are not built to capture it.

The Jobs That Were Never Posted

The US unemployment rate sits at a historically normal level. Politicians cite it. Markets price it in. But the number measures people who are actively looking for work and cannot find it. It does not measure the jobs that were never created in the first place.

Indeed's Hiring Lab, drawing on the world's largest job posting dataset, describes the current labor market as "frozen." Their Job Postings Index began 2025 more than 10 percent above pre-pandemic norms but slid to barely above those norms by late October. Technology job postings specifically are a third lower than early 2020 levels. In January 2026, Indeed's data team wrote that "the job market doesn't have to break to be broken" and described a "low-hire, low-fire environment" that statistical models were not designed to flag.

In 2025, 245,953 people were laid off across 783 tech companies, according to TrueUp's layoff tracker (as of February 2026). In the first 51 days of 2026, another 40,132 have been cut, roughly 787 per day. Crunchbase counts at least 127,000 US tech workers laid off in mass cuts in 2025 alone. These are the visible losses. The invisible ones are the roles that were never posted, the headcount that was never approved, the position that quietly disappeared when the previous holder left. No announcement. No news coverage. No uptick in unemployment claims.
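The per-day figure is simple division, and the same arithmetic puts the early-2026 pace next to the 2025 average. A minimal sketch in Python, using only the TrueUp counts quoted above; the function name and the 365-day divisor for 2025 are assumptions made for illustration:

```python
def layoffs_per_day(total_laid_off: int, days: int) -> float:
    """Average number of people laid off per calendar day."""
    if days <= 0:
        raise ValueError("days must be positive")
    return total_laid_off / days


# TrueUp figures quoted in the text (as of February 2026).
rate_2025 = layoffs_per_day(245_953, 365)  # full calendar year (assumed divisor)
rate_2026 = layoffs_per_day(40_132, 51)    # first 51 days of 2026

pace_ratio = rate_2026 / rate_2025

print(f"2025: {rate_2025:.0f} per day")
print(f"2026 so far: {rate_2026:.0f} per day")
print(f"2026 pace relative to 2025: {pace_ratio:.2f}x")
```

On these counts the early-2026 pace runs roughly 17 percent above the 2025 daily average, though 51 days is a short window and may not hold for the full year.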

Then there are the ghost jobs. Roughly one in five job postings online in 2025 were ghosts, listings kept live with no intention of filling them. Some were filled months ago. Others were never budget-approved. One analysis put the figure at 30 percent. The problem was serious enough that Kentucky introduced legislation in January 2025 to ban the practice. Companies keep ghost listings live for brand visibility, pipeline building, or because nobody remembered to take them down. For job seekers, the practical effect is the same: the labor market appears healthier than it is.

The Vanishing Entry Level

The most concrete evidence of structural displacement is at the bottom of the career ladder. The Burning Glass Institute tracked entry-level job share in AI-exposed fields from 2018 to 2024. Software development roles requiring three years of experience or less dropped from 43 percent to 28 percent. Data analysis fell from 35 to 22 percent. Consulting fell from 41 to 26 percent.

Total job postings in these fields stayed flat or increased. Senior hiring remained stable. Companies did not stop hiring. They stopped hiring juniors.

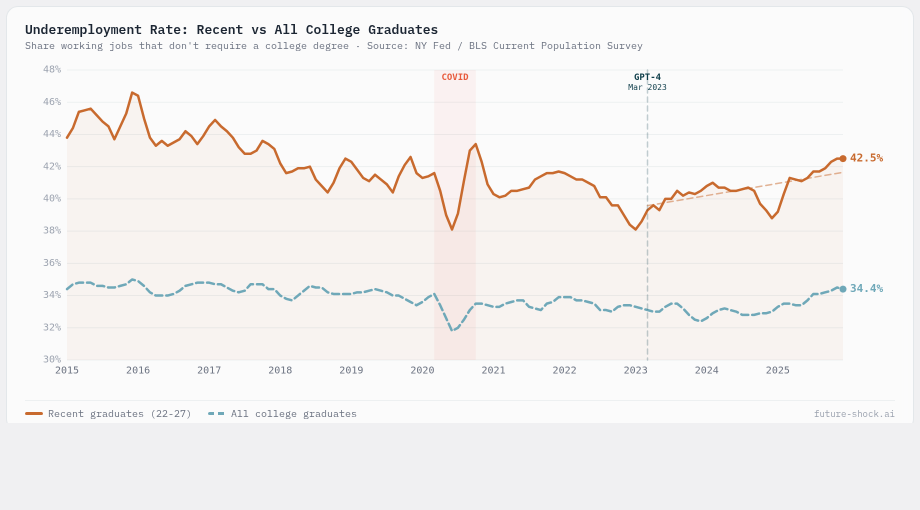
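A percentage-point drop and a relative drop are easy to confuse, so it helps to compute both. The sketch below uses only the Burning Glass shares quoted above; the dictionary and function names are illustrative, not from the source:

```python
# Entry-level share of postings (percent), 2018 vs 2024, as quoted above
# from the Burning Glass Institute.
ENTRY_LEVEL_SHARE = {
    "software development": (43, 28),
    "data analysis": (35, 22),
    "consulting": (41, 26),
}


def share_change(start: float, end: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent)."""
    point_change = end - start
    relative_change = 100 * point_change / start
    return point_change, relative_change


for field, (share_2018, share_2024) in ENTRY_LEVEL_SHARE.items():
    points, relative = share_change(share_2018, share_2024)
    print(f"{field}: {points:+.0f} points, {relative:+.1f}% relative")
```

Each field lost 13 to 15 percentage points, a relative decline of roughly 35 to 37 percent. In other words, a little over a third of the entry-level share disappeared in each case.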

The Strada Institute and Burning Glass found that 52 percent of the class of 2023 were underemployed one year after graduation. The New York Fed's college labor market tracker shows this is not new, but it is getting worse: underemployment among recent graduates has hovered between 40 and 50 percent for over a decade, and the composition is shifting. Graduates are increasingly stuck not just in non-college jobs, but in lower-quality non-college jobs. FRED data shows unemployment among 20-to-24-year-olds with bachelor's degrees has risen from 5.2 to 6.2 percent. Young people with bachelor's degrees now face higher unemployment than those with associate degrees. That inversion has never happened before.

This trend has a longer tail than AI alone. US labor share of national income fell from 66 percent in 1979 to 58 percent in 2022, a decades-long shift that AI is now accelerating. The money flowing to workers as a share of total output has been declining for 40 years; AI tools that eliminate entry-level roles are the latest chapter, not the first.

AI tools handle the foundational tasks that once trained early-career workers: drafting, research, basic analysis, forecasting, documentation. IDC surveys report that 66 percent of enterprises are reducing entry-level hiring due to AI. Employers flatten organizational structures and hire mid-career professionals who need no training. The World Economic Forum's 2025 Future of Jobs Report found that 40 percent of employers expect to reduce staff as AI automates tasks.

This breaks what economists call the "vacancy chain." In a healthy labor market, a senior leaves, a mid-level moves up, and a junior is hired. AI automates the bottom link. The pathway for new entrants is severed.

The Fed Cannot Fix This

On the same Monday that markets were reacting to the Citrini report, Federal Reserve Governor Lisa Cook delivered a speech that received far less attention but may prove more consequential. Her remarks were striking for their bluntness.

"Job displacement may precede job creation," she told the audience, "such that the unemployment rate may rise and participation in the labor force may decline as the economy transitions."

Then the key line: "Our normal demand-side monetary policy may not be able to ameliorate an AI-caused unemployment spell without also increasing inflationary pressure."

The Fed's tools do not work on this problem. Rate cuts stimulate demand, which helps when businesses want to hire but cannot afford to. AI displacement is different. The businesses can afford to hire. They have decided they do not need to.

Cook explicitly called for education, workforce, and non-monetary policies. She was, in the cautious language of a sitting Fed governor, saying this is Congress's problem, not ours.

The Atlantic published a complementary analysis the same week. Americans with bachelor's degrees now account for a quarter of the unemployed, a record. White-collar job losses from a structural shift would be qualitatively different from recession-era unemployment. The unemployment insurance system, designed for six-month gaps with payments capping at 500 to 600 dollars a week, is not built for six-figure earners who may be permanently displaced from their profession.

Measuring the Wrong Things

GDP was designed in 1937 to measure industrial output during the Depression. The unemployment rate, as currently defined by the Bureau of Labor Statistics, counts people who looked for work in the past four weeks. Neither metric was built to detect an economy where output rises, hiring freezes, and an entire generation quietly loses access to career-track employment.

If Ghost GDP is the disease, our economic instruments are the thermometer that cannot read below a certain temperature. The number looks normal. The patient does not.

A more honest dashboard would track the signals that are already visible in scattered datasets but never appear together in a headline number:

Underemployment rate by age cohort. The New York Fed already publishes this. Broken out by age, it shows where the pipeline is failing and what the unemployment rate hides: people who have jobs but not the jobs their credentials were supposed to unlock.

Entry-level job share. Down by roughly a third between 2018 and 2024 in the AI-exposed fields tracked by the Burning Glass Institute. This single number captures the vacancy chain problem better than any aggregate employment figure.

Job posting-to-hire ratio. Indeed publishes posting volume. The ratio of postings to actual hires would reveal how much of the visible labor market is performative: listings stay up, nobody gets hired.

Ghost job prevalence. Current estimates range from 20 to 30 percent phantom listings. Tracking this systematically would adjust the denominator that makes the labor market look healthier than it is. Kentucky has already introduced legislation to ban the practice.

Wage growth by experience tier. National wage growth numbers can mask a split where senior compensation rises while entry-level pay stagnates. Tier-level breakdowns would expose whether gains are concentrating at the top.

For readers interested in going further, David Shapiro's Post-Labor Economics framework proposes novel indices specifically designed to track a transition where labor itself becomes a shrinking share of economic activity.

None of these metrics require new data collection. The underlying numbers already exist across the Fed, BLS, Indeed, Burning Glass, and independent researchers. They are just not assembled into a single picture, and no headline number synthesizes them. The result is a measurement gap: the official indicators say the economy is fine while the people inside it can see that something has changed.
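Two of the proposed gauges, ghost job prevalence and the posting-to-hire ratio, combine naturally, and a rough sketch shows how. This is an illustration under stated assumptions, not an existing index: the function names are hypothetical, and the 1,000 listings and 100 hires are placeholder inputs invented to exercise the code. Only the 20 to 30 percent ghost-listing range comes from the estimates cited above.

```python
def ghost_adjusted_postings(postings: float, ghost_share: float) -> float:
    """Postings left after removing an estimated share of ghost listings."""
    if not 0.0 <= ghost_share <= 1.0:
        raise ValueError("ghost_share must be between 0 and 1")
    return postings * (1.0 - ghost_share)


def postings_per_hire(postings: float, hires: float) -> float:
    """Posting-to-hire ratio: how many listings exist per actual hire."""
    if hires <= 0:
        raise ValueError("hires must be positive")
    return postings / hires


# Placeholder inputs (NOT real data): a round base of listings and hires.
listed = 1_000
hired = 100

print(f"raw ratio: {postings_per_hire(listed, hired):.1f} postings per hire")

# Ghost-listing range cited in the text: 20 to 30 percent.
for ghost_share in (0.20, 0.30):
    real = ghost_adjusted_postings(listed, ghost_share)
    ratio = postings_per_hire(real, hired)
    print(f"ghost share {ghost_share:.0%}: {real:.0f} real listings, "
          f"{ratio:.1f} postings per hire")
```

Applied to real posting and hiring series, the gap between the raw and adjusted ratios would give one rough measure of how much of the visible labor market is performative.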

What Comes After

If these trends deepen, the standard policy responses, from universal basic income to negative income taxes to public capital funds, are no longer confined to academic thought experiments. They are showing up in serious modeling work. AI-driven productivity gains may produce collapsing prices for goods and services that once required expensive human labor. But that same productivity removes the wage income that sustains consumer demand.

None of this is settled or inevitable. The Citrini scenario may read as prophecy five years from now or as an artifact of a brief panic. But the measurement problem is real regardless of which future arrives. We are running a 1937 dashboard on a 2026 economy, and the gap between what the instruments show and what people experience is widening every quarter. The first step is not a policy fix. It is building the gauges that let us see clearly what is already happening.