Compute Wants Out

AI infrastructure is starting to split in two directions at once: open public compute for science, and distributed compute pushed toward the walls of new houses.

A white box on a suburban wall and a federally funded Blackwell cluster do not look like the same story, yet they are both answers to the same problem: AI compute is running out of obvious places to live.

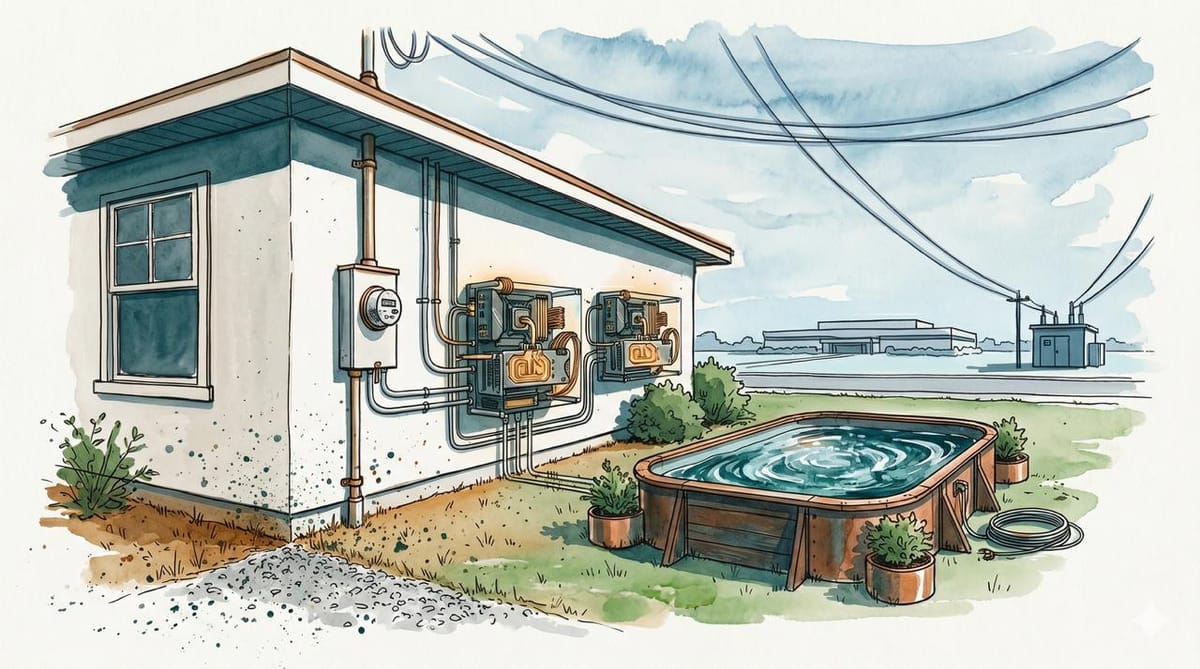

A data center used to look like a warehouse, a tilt-up concrete shed beside a substation, a converted aluminum smelter on cheap hydro, or a campus the size of a regional airport. SPAN's version looks more like a white utility box bolted to the side of a new house.

That box, which SPAN calls XFRA, is meant to hold liquid-cooled NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs and run inference for hyperscalers off unused electrical headroom managed through a smart service panel. SPAN says initial deployments are planned for later this year, with homebuilders including PulteGroup involved in the rollout.

A few weeks later, on the other end of the country and the other end of the imagination, the Allen Institute for AI announced that NSF OMAI was coming online. The project pairs $152 million from the National Science Foundation and NVIDIA with an NVIDIA B300 Blackwell Ultra cluster managed by Cirrascale. "A national investment in open infrastructure," Ai2's Noah Smith said, "that has turned into real, usable compute."

Both stories turn on the same physical object, NVIDIA Blackwell silicon humming inside a metal box. They point to wildly different ideas of where AI compute is supposed to live.

The Box on the Wall

SPAN, a Bay Area company best known for its smart electrical panels, announced XFRA in April. The company argues that the U.S. grid cannot keep up with AI demand because interconnection queues are years long, and "the grid infrastructure needed to support this scale can take over a decade to build." SPAN's CEO Arch Rao calls this the "speed-to-power gap." His proposed bridge across it is to stop trying to build new substations first and start using the spare capacity already wired into millions of homes.

The setup combines a SPAN smart panel, a battery, sometimes solar, and a compute node containing enterprise-grade Blackwell GPUs. SPAN says hosts receive a premium SPAN Panel, battery backup, optional solar, and "fixed, discounted rates for electricity and internet" in exchange for making space and power available.

The first deployments are planned with homebuilders including PulteGroup, which is the right kind of partner to ask if you want this to be real. Pulte is one of the country's largest homebuilders. New construction is the only place where you can specify the panel, the slab, and the internet drop in advance, instead of retrofitting around someone's HVAC condenser. SPAN says it wants gigawatt-scale annual capacity by 2027.

Read aloud, the proposal sounds faintly ridiculous. A major homebuilder, an electrical-panel startup, and NVIDIA would like to put mini data centers on the side of new houses. On the page, it is one of the more coherent residential-energy products anyone has pitched in years.

The skepticism writes itself, though. Heat dissipation on the side of a house in August is not the same engineering problem as cooling a hyperscaler hall. Residential internet uplinks are not designed for sustained inference traffic. A box built around high-end NVIDIA GPUs is going to attract the attention of insurance carriers and, eventually, thieves. The "underutilized capacity" SPAN points to has competition from heat pumps, EV chargers, induction stoves, and whatever electrification policy does over the next decade. None of these are necessarily fatal. They are the questions pilots are supposed to answer, and what counts as a successful answer has not been publicly defined.

The Open Cluster

On May 7, Ai2 said the Open Multimodal AI Infrastructure for Science (OMAI) cluster was becoming operational. The hardware is NVIDIA B300 Blackwell Ultra systems, deployed and managed in partnership with Cirrascale. The funding is $152 million from NSF and NVIDIA. The cluster supports training and evaluation across language, multimodal, scientific, and agentic systems.

OMAI matters because of the institution around the hardware. Ai2 says the project is designed around open release of models, tools, and research artifacts, including weights, training data, methods, checkpoints, and final models. NSF's Wendy Nilsen frames it as letting scientists "build, test, reproduce, validate, and advance AI systems."

Most frontier-scale training runs today are reproducible in roughly the way a celebrity chef's recipe is reproducible. The ingredients are listed, the oven temperature is approximate, and the result depends on someone's private kitchen. OMAI's bet is that public research compute needs to exist as a category, distinct from cloud rentals on a quarterly contract.

The bet is small relative to the hundreds of billions flowing into private AI infrastructure this year. It is still a rare public attempt to give academic AI researchers access to frontier-class compute, with open-governance expectations baked into the grant.

Same Wall, Different Exits

The two stories rhyme because everyone in AI is hitting the same wall at the same time. Anthropic's new SpaceX compute deal, which gives it access to the capacity of the Colossus 1 data center in Memphis, is the blunt version of the same pressure. Labs are no longer shopping only for chips. They are shopping for powered buildings, grid access, cooling, and months shaved off the time between signing a contract and running a model.

Power, substation queues, interconnection approvals, and time-to-rack now sit beside chips as hard limits. Ai2's answer is to carve out an open commons big enough to do real science in. SPAN's answer is to skip the substation entirely and route demand into the residential grid, one panel at a time. The two answers do not contradict each other. They imply different theories of who AI infrastructure is for.

Tongs Required

Both stories deserve tongs. SPAN's announcement is, for now, a press release with a very good rendering and a pilot that has not happened yet. The gigawatt figure is a 2027 ambition, not a deployment. The obvious question is whether spare residential capacity remains spare once heat pumps, EV chargers, induction stoves, batteries, and local utility rules enter the picture.

OMAI is real compute, but "open" and "available" are different words. NSF allocations are governed and finite. A public cluster that exists is still a public cluster a researcher has to apply to use. The test is whether the queue produces work that private clusters cannot, including reproducible runs, contested methods rerun by third parties, and science that does not require a corporate NDA to read.

The Bright Signal

For most of the last three years, the dominant design for an AI training facility has been a hyperscale site, a major tenant, and a long power contract. That pattern was never going to be the only one. It was the first one that fit the financing.

OMAI treats compute as a scientific instrument. XFRA treats compute as an infrastructure appliance. Both are still NVIDIA chips. The same silicon, placed in different institutional containers, may produce different research, different access, and different ownership over who gets to use AI for what.

Under public-science governance, B300s become an argument for AI infrastructure that does not all have to look like one company's warehouse. On suburban walls, Blackwell-class GPUs could become another route back to the same three hyperscaler tenants, with only the address on the meter changed.

Direction is not just how many chips get installed. Direction is who owns the box.

A data center used to look like a warehouse. This year it started to look like a federal grant, a panel on a wall, and a serial number on a server bolted outside a house. None of that means more compute, exactly. It means more places where compute is allowed to be.