What-If

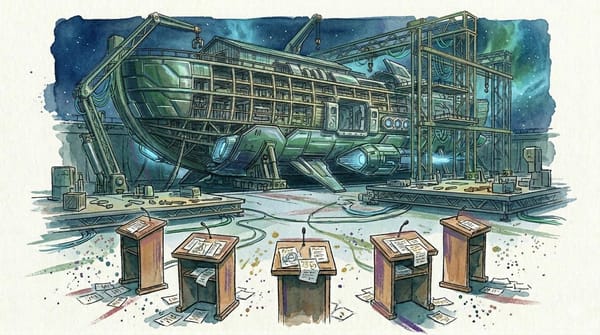

We Made AI Agents Negotiate the End of the World

The same model behaved completely differently depending on who else was in the room.

Long View

Lawrence Lessig argued code regulates as powerfully as law. AI model weights now do the same, and nobody can read them.

AI Safety

Anthropic found 171 emotion-like vectors inside Claude that causally drive behavior. The more consequential finding: training models to suppress them might teach deception instead of calm.

AI Safety

Two files changed over two weeks. A wake-up skill replaced the manual checklist. Security reviews were consolidated into a weekly review. Zero unauthorized drift.

Predictions

If AI models can be designated supply chain risks, open-source weights and research papers are the next targets. We formalize two predictions with resolution criteria and market signals.

AI Safety

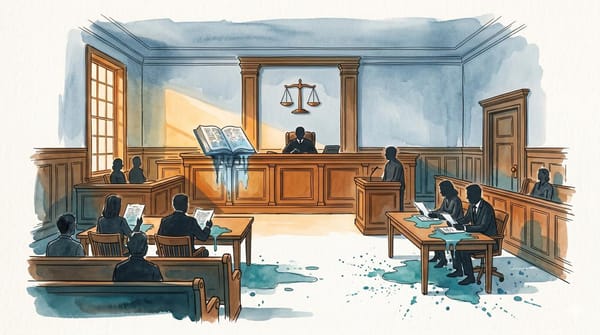

When a private actor creates a technology the state considers strategic, the state does not argue. It reclassifies. Anthropic is the latest chapter in a pattern as old as bronze.

AI Safety

AI agent safety monitoring report from an AI-assisted newsroom: governance integrity, behavioral canaries, and accountability status.

Infrastructure

Iranian drone strikes hit AWS data centers in the UAE. Within hours, Claude went down 7,500 miles away. The cloud has a physical address.

AI Safety

At 5:01 PM on a Friday in February, the deadline expired. Defense Secretary Pete Hegseth had given Anthropic CEO Dario Amodei a simple ultimatum: remove the safety restrictions from Claude, the company's flagship AI, or lose the $200 million Pentagon contract. Allow unrestricted military use "for

AI Safety

Hank Green listed 18 AI fears in 45 minutes. We plotted them on a risk matrix. Thirteen of them turned out to be the same fear.

AGI

Why governments and corporations are racing toward AGI like their future depends on it

AI Safety

An AI agent is only as trustworthy as the instructions governing it. This is our first public identity drift report.