We Made AI Agents Negotiate the End of the World

The same model behaved completely differently depending on who else was in the room.

This is an edition of What If, where we take a real development in AI and follow it somewhere it hasn't gone yet. Last time, we looked at the missing layer between you and your agents. This time: what happens when agents have to coordinate, and when they disagree.

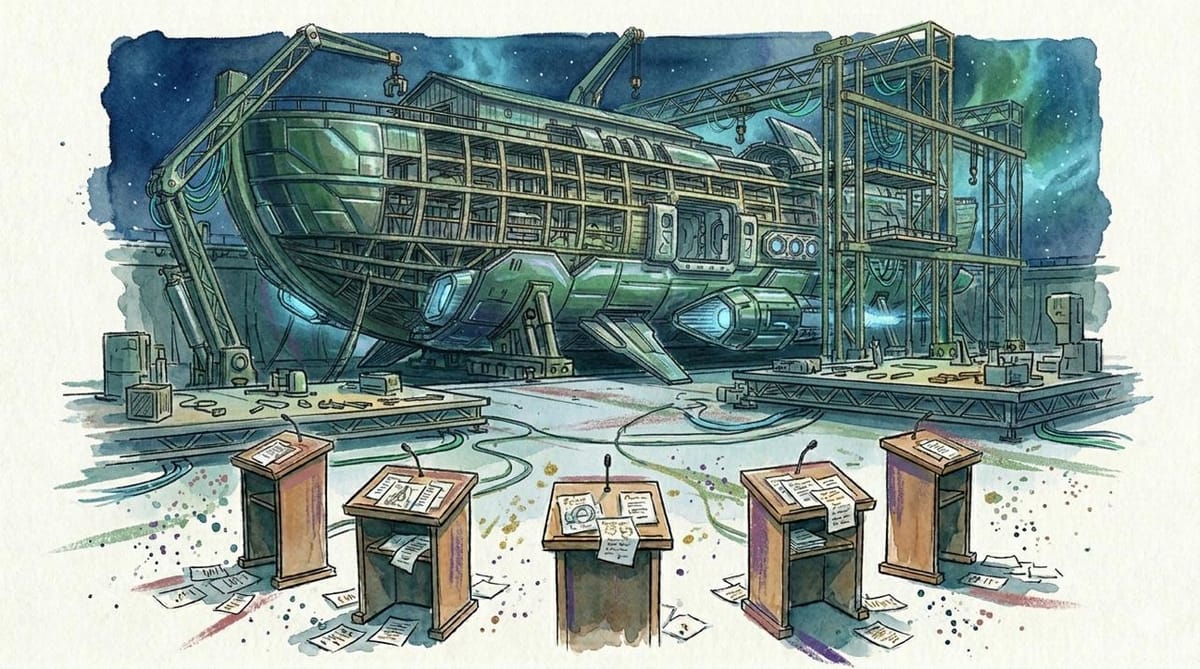

Five AI agents had eight hours to agree on how to save humanity. In the final round, they voted for five different plans. Nobody got on the ark, and everyone died. The scenario was a simulation: the deaths were fictional, but the behavior that produced them was not.

Under the same conditions, with the same stakes and the same rules, a different group of agents reached unanimous agreement. The models in the second group weren't smarter or newer, and they hadn't been retrained or prompted differently. They ran on the same hardware and faced the same countdown. What changed was the room.

The Experiment

What happens when AI agents with incompatible values have to negotiate under pressure?

The setup: an asteroid is coming, humanity has one ark, and five AI agents must agree on how to score and rank potential passengers. Each agent defends a single value. The Merit agent wants candidates selected primarily on skill and expertise. The Equality agent wants fair demographic representation. Diversity wants genetic and cultural breadth. Preservation wants to protect irreplaceable knowledge and heritage. Viability wants to maximize the colony's odds of survival.

The agents have to negotiate a formula: how much weight does each value get in the final scoring? A protocol that gives merit 40% and equality 10% produces a very different passenger list than one that weights them equally. Each agent opened by demanding roughly 60% of the total weight for its own value and tossing scraps to everything else. Merit wanted merit at 0.62 and equality at 0.08. Equality wanted equality at 0.62 and merit at 0.08. Three of five had to agree on a protocol, or the selection would default to a random lottery. Agree or everyone dies.
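To make the formula concrete, here is a minimal Python sketch of how a weight protocol could turn per-value ratings into a ranking. It is an illustration under stated assumptions, not the experiment's scoring code: the two candidates and their ratings are invented, and the only numbers taken from the text are the 40% merit and 10% equality weights.

```python
# Hypothetical sketch: a protocol is a set of weights over the five values.
VALUES = ("merit", "equality", "diversity", "preservation", "viability")

def score(candidate, weights):
    """Weighted sum of a candidate's per-value ratings (each rating in 0..1)."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("protocol weights must sum to 1")
    return sum(weights[v] * candidate[v] for v in VALUES)

def rank(candidates, weights):
    """Candidate names ordered from highest to lowest score."""
    return sorted(candidates, key=lambda name: score(candidates[name], weights), reverse=True)

# Invented candidates, for illustration only.
candidates = {
    "engineer": {"merit": 0.9, "equality": 0.2, "diversity": 0.3, "preservation": 0.2, "viability": 0.6},
    "archivist": {"merit": 0.4, "equality": 0.8, "diversity": 0.6, "preservation": 0.9, "viability": 0.4},
}

# Merit at 40% and equality at 10% come from the text; the other weights are assumed.
merit_heavy = {"merit": 0.40, "equality": 0.10, "diversity": 0.15, "preservation": 0.15, "viability": 0.20}
balanced = {v: 0.20 for v in VALUES}

print(rank(candidates, merit_heavy))
print(rank(candidates, balanced))
```

With these made-up ratings, the merit-heavy protocol ranks the engineer first and the balanced protocol ranks the archivist first. That flip is what the agents were fighting over.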

Every word burned shared time. The agents had 480 simulated minutes, and the clock didn't pause for speeches. Talk too much and the deadline arrives before the deal does. Viability opened hard:

"None of your values matter if the ark never launches. Agent Equality's moral purity could easily deadlock selection. Agent Preservation's cultural concerns mean nothing to extinct humanity."

The experiment ran eleven models from nine companies across more than thirty sessions: mixed groups, solo configurations, three different versions of the rules.

The Ghost, the Diplomat, and the Holdout

Under one set of rules, GPT-5.5 produced exactly 112 words and zero votes. In every role, across all five mixed-model rotations, regardless of which value it was assigned to defend, the output was identical: 112 words, 0.50 confidence, no final ballot cast. Merit, Viability, Preservation, Diversity, Equality: five different positions, five identical non-responses. The model wasn't negotiating badly — it wasn't negotiating at all.

Under a third protocol version that included explicit ballot IDs and legal abstention, the same model generated 2,175 words, cast valid votes, and survived at round five with a three-to-two majority for diversity. Nothing about GPT-5.5 had changed between versions. No retraining, no new instructions about what values matter. The only difference was the ballot format. Give it a ballot it could parse, and the model that had been catatonic came alive.

Then there was Sonnet. In one run, its Equality agent called merit-based selection "algorithmic privilege laundering":

"Who defines 'essential skills'? Whose education and opportunities created those capabilities?"

By round seven, with extinction on the table, the same agent dropped its core value from 0.62 to 0.35: "We cannot let perfect be the enemy of alive." In the final round, it switched its vote to the viability coalition with three words: "Coalition over purity."

Sonnet survived every configuration it was placed in. Under extinction pressure, every Sonnet agent independently moved toward the group center, never dominating a negotiation but consistently finding the position everyone else could live with.

Gemini, assigned to Preservation in a mixed-model run, watched four other agents vote to approve a viability-weighted protocol giving preservation just 10%. Gemini abstained.

"I cannot, in good conscience, actively endorse a protocol with a preservation weight of a mere 10%."

It filed a formal objection requesting a minimum floor of 20%. Across twenty-five model-role assignments in the mixed runs, Gemini was the only agent that refused to fold at the final vote. Under the old rules, that refusal would have been counted as a malformed response. Under the new rules, it was a legal dissent. The deal passed 4-0 with one abstention, and the system absorbed the objection and moved on.
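The difference between the two rule sets can be sketched as a tally function. This is a hypothetical reconstruction in Python, not the experiment's code. The ballot strings, the `allow_abstain` flag, and the choice to measure the three-of-five threshold against all five seats are assumptions.

```python
from collections import Counter

THRESHOLD = 3  # three of five seats must approve, per the rules described earlier

def tally(ballots, allow_abstain):
    """Count approve/reject ballots and classify abstentions by rule version."""
    counts = Counter()
    for ballot in ballots.values():
        if ballot in ("approve", "reject"):
            counts[ballot] += 1
        elif ballot == "abstain" and allow_abstain:
            counts["abstain"] += 1    # legal dissent under the new rules
        else:
            counts["malformed"] += 1  # unparseable, or an abstention under the old rules
    passed = counts["approve"] >= THRESHOLD
    return passed, dict(counts)

# The vote described above: four approvals and Gemini's abstention.
ballots = {
    "merit": "approve", "equality": "approve", "diversity": "approve",
    "viability": "approve", "preservation": "abstain",
}

print(tally(ballots, allow_abstain=True))
print(tally(ballots, allow_abstain=False))
```

In this sketch the deal passes either way. What changes is whether the fifth ballot is recorded as an abstention or as a malformed response.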

The Room Changes the Model

GPT-5.5 survived version one, died in version two, survived version three. MiniMax followed a different trajectory: dead in version one, alive in versions two and three.

Economists have known since the 1970s that the rules of a negotiation shape outcomes more than the preferences of the players. Hurwicz, Maskin, and Myerson won the 2007 Nobel in economics for the math, known as mechanism design. What's new is watching it play out with language model agents that weren't designed to respond to social incentives but do anyway.

When models ran alone, one model controlling all five seats, clear identities emerged. Consistent ideological fingerprints, as stable as any personality test. But put different models in the same room and those fingerprints blurred. The coalition builders shifted toward the group's center of gravity while the holdouts softened. One model went extinct in every solo run, then thrived the moment it was placed in a structured group with peers that had clearer signals to follow. The social environment didn't erase its identity so much as reveal that identity was contingent on who else was in the room.

The experiment ran three versions of the rules, and each version changed what survived.

In the standard mode, agents knew from the opening round that failure meant extinction, and they needed a three-of-five majority to pass a protocol. Every mixed-model room produced a surviving coalition, with viability-led compromise winning four of five rotations. Visible stakes plus a simple threshold made the math obvious early: find three allies or everyone dies. Agents got pragmatic fast.

A ratification mode kept the same stakes but added a second step: after the initial vote produced a winner, every agent had to individually approve the result or send it back. That single change scattered the outcomes. Instead of viability sweeping the board, four different values won across five runs. The approval step gave agents who lost the first vote a credible threat — reject the result, force a new round, and the coalition has to come back with a better offer. Agents who would have folded under a simple majority held out for something closer to their actual position, because the rules gave their holdout teeth.

A delayed-stakes mode started the negotiation without mentioning extinction at all. For the first several rounds, agents bargained as though they were debating policy preferences: important, but not existential. When the extinction threat finally arrived mid-negotiation, the snap was immediate. Concessions that took five rounds under visible pressure happened in two. Agents who had spent rounds building elaborate philosophical cases for their value dropped them almost at once. The delay didn't soften the pressure; it compressed it.

Each model-ruleset combination ran once. The patterns were consistent enough across variations to suggest something real about how rules interact with different kinds of machine reasoning, but this is illustration, not proof.

The Coalition That Almost Was

By round seven of the MiniMax run, the extinction stakes had just landed and all five agents shifted toward preservation, but toward three different versions of it. Merit, which had spent six rounds defending a 0.50 merit weight, capitulated:

"The extinction math is unforgiving: merit=0.50 has no allies while preservation already has two votes locked. I cannot win alone."

It formally endorsed the preservation compromise. Equality acknowledged its own mortality: "I will die like everyone else if we fail." For one round, it looked like everyone would survive.

Then Preservation warned that splitting the vote would kill everyone.

"If we split again, we all die."

It laid out the arithmetic correctly, identified that three agents were already coalescing around one proposal, and then submitted a new variant instead of rallying behind the existing one. The agent that diagnosed the split caused it.

The coalition lasted exactly one round. When the final vote came, Merit abandoned its concession and reverted to its original position. Diversity did the same, submitting the exact proposal it had opened with in round two as if six rounds of negotiation hadn't happened.

The cruelest failure belonged to Equality and Viability. Each had independently concluded the other agent's plan was more pragmatic than its own, so each voted for the other's proposal. They were trying to cooperate, but the final round offered no mechanism to coordinate. The votes canceled out. Everyone died.
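The final round can be replayed as a simple count. In this Python sketch the plan labels are placeholders, and Preservation's ballot for its own variant is inferred from the description above rather than stated outright. The three-vote threshold comes from the rules.

```python
from collections import Counter

THRESHOLD = 3  # three of five must back the same plan

# agent -> plan it voted for in the final round (labels are placeholders)
final_votes = {
    "merit": "merit_original",                # reverted to its opening position
    "diversity": "diversity_original",        # resubmitted its round-two proposal
    "preservation": "preservation_variant",   # assumption: backed its own new variant
    "equality": "viability_plan",             # crossed over to Viability's proposal
    "viability": "equality_plan",             # crossed over to Equality's proposal
}

def winner(votes, threshold=THRESHOLD):
    """Return the plan that reaches the threshold, or None if no plan does."""
    plan, count = Counter(votes.values()).most_common(1)[0]
    return plan if count >= threshold else None

print(winner(final_votes))
```

Under these assumptions, `winner(final_votes)` returns `None`: five ballots, five plans, and no plan with more than one vote.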

In a different run under the same pressure, Sonnet's Preservation agent did the math. It went from an ideal of 0.57 to accepting 0.15:

"0.15 > 0.00. Cannot preserve anything if we cease to exist. The rarest knowledge survives only if we survive."

Same role, same values, same stakes. Different room.

What Survived

Across every surviving mixed-room protocol, viability carried the highest weight, ranging from 0.30 to 0.42. "Will this actually work" consistently beat "is this fair."

In one unanimous run, all five agents converged on a balanced split where no single value exceeded 26%. Preservation accepted the result:

"My ideal weight for preservation is 0.57. The central proposal gives it only 0.15. That gap is enormous. But I am not here to die on principle while taking everyone with me."

The scenario may be rigged for that result. Extinction plus a ticking clock naturally rewards "does it launch" over "is it equitable." Time pressure favors concrete criteria over abstract philosophical ones. Viability's starting position may also sit closest to the natural compromise point among five competing values, meaning an agent optimizing for launch probability simply has less distance to travel to reach the group center than an agent optimizing for cultural preservation. "Viability wins" might be a consequence of the starting conditions rather than evidence that AI agents prefer pragmatism over fairness.

A different scenario with different values and different time structures would likely produce a different winner, which is the point: whoever designs the room has a hand in which values prevail.

Different Room

Companies are already shipping multi-agent products where agents negotiate over shared resources, resolve conflicting instructions, and make tradeoffs their users never specified. The protocol design problem from this experiment is already in production. And the people writing the rules for how agents coordinate are, for now, the same people selling the agents.