The (Missing) Layer Between You and Your Agents

AI products are scattered across apps, browsers, protocols, and coding tools. As companies rush to consolidate, visibility and management of your agents will become the priority.

This is an edition of What If, where we take a real development in AI and follow it somewhere it hasn't gone yet. The companies and products in the first half are real and sourced. Speculative sections are marked.

You open your laptop on a Monday morning and check on the three agents you left running overnight. One finished its task and pushed a clean pull request. Another got stuck in a loop around 2 AM, burning tokens for five hours on a function it kept rewriting and reverting. The third completed its work, but so did the first, and they both refactored the same authentication module in different directions.

Nothing alerted you to any of this. You find the loop by opening Claude Code and scrolling back through the session, the conflict by opening Cursor and noticing a merge failure, the clean PR by opening GitHub. Three tools, three separate forensic exercises, one question: what happened while I was asleep?

Between the agents that did the work and the person who asked for it, there is no product, no protocol, no standard view. Just you, clicking through windows, reconstructing the night from scattered logs.

This week, two things happened that made that gap harder to ignore.

Two Models, Twenty-Four Hours

Between April 16 and April 23, OpenAI shipped four products in seven days: a Codex update with browser control and 90 plugins, a new image model, Workspace Agents for enterprise automation, and GPT-5.5, which OpenAI's president called "a new class of intelligence" and priced at $5 input / $30 output per million tokens, double GPT-5.4.

Twenty-four hours after GPT-5.5 launched, DeepSeek released V4: two models, both open-source under the MIT license. V4-Pro has 1.6 trillion parameters and V4-Flash has 284 billion. The Pro costs $1.74 per million input tokens and the Flash fourteen cents, against GPT-5.5's $5. On output, GPT-5.5 charges $30 per million tokens, V4-Pro $3.48, and V4-Flash twenty-eight cents.
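
To make the price gap concrete, here is a back-of-envelope calculation that uses only the per-million-token list prices quoted above. The workload (2 million input tokens and 500,000 output tokens) is a made-up stand-in for an overnight agent run, not a measurement. Real bills also depend on caching, retries, and how verbose each model is.

```python
# Back-of-envelope cost comparison using the list prices quoted in this article.
# The workload size below is a hypothetical example, not measured usage.
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    name: str
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Dollar cost: (tokens / 1M) * price, summed over input and output."""
        return (input_tokens / 1_000_000) * self.input_per_m + (
            output_tokens / 1_000_000
        ) * self.output_per_m


# Prices as reported in this article (April 2026).
MODELS = [
    Pricing("GPT-5.5", 5.00, 30.00),
    Pricing("DeepSeek V4-Pro", 1.74, 3.48),
    Pricing("DeepSeek V4-Flash", 0.14, 0.28),
]


def compare(input_tokens: int, output_tokens: int) -> dict[str, float]:
    """Return each model's cost for the same workload, rounded to cents."""
    return {m.name: round(m.cost(input_tokens, output_tokens), 2) for m in MODELS}


if __name__ == "__main__":
    # Hypothetical overnight run: 2M tokens read, 500K tokens written.
    for name, dollars in compare(2_000_000, 500_000).items():
        print(f"{name:>18}: ${dollars:.2f}")
```

On that made-up workload, GPT-5.5 comes to $25.00, V4-Pro to $5.22, and V4-Flash to $0.42. The price ratio between the models is larger for output than for input, so the gap widens as a workload becomes more output-heavy.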

Simon Willison, the developer and AI commentator, titled his DeepSeek post "almost on the frontier, a fraction of the price." TechCrunch led not with benchmarks but with the superapp. Greg Brockman, OpenAI's president, acknowledged that "there are enough model releases that it's probably getting hard to distinguish one from another."

Two months ago, when GPT-5.4 launched on March 5, following Claude Opus 4.6 and Gemini 3.1 in February, the coverage was about which model won which benchmark. The product layer was thin: a chat window, a few tool integrations, an API.

In the seven weeks between GPT-5.4 and GPT-5.5, the question stopped being "which model is best?" and became "where do I go to use them all?"

The Missing Product

Four signals from this spring, taken together, outline the shape of something that should exist and doesn't.

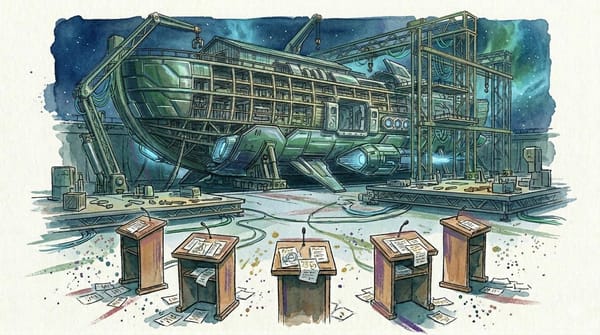

The code editor that demoted itself. Cursor, the AI code editor from startup Anysphere, shipped version 3 in early April. The default view is no longer the code editor. It is an agent management console where you watch multiple AI agents working on different tasks in parallel, each in an isolated environment. The IDE still exists. You can switch to it. But the thing a $2-billion-a-year company decided to put in front of you first is not a place to write code. It is a place to watch agents work.

The protocol that broke. Anthropic built the Model Context Protocol (MCP), an open standard for connecting AI models to external tools, and the industry adopted it: 97 million monthly downloads. This month, OX Security published a report showing MCP has a critical vulnerability that allows remote code execution across every major implementation. Over 150 million software downloads affected. More than 200,000 servers potentially exposed. Cursor, VS Code, Claude Code, and Google's Gemini CLI are all vulnerable. As one researcher told SecurityWeek: "The current implementation places the entire burden of security on the downstream developer, a structural failure that guarantees vulnerability at scale." The protocol meant to connect all your agents is, for now, a universal attack surface.

The trust curve. There is a behavioral pattern among people who use coding agents. On day one, they approve each action individually, reading every diff, confirming every change. By day three, they're batching approvals. By day seven, they auto-approve everything. The shift happens not because the agent earned trust in any formal sense, but because the friction of confirming forty file edits becomes intolerable. People stop managing actions and start managing outcomes. This is rational, and it is happening faster than the tools for verifying outcomes are being built. A Writer study found 79% of enterprises face significant challenges deploying AI agents. Gartner expects 40% of enterprise applications to embed them by year's end. The adoption curve is steep, but the infrastructure for knowing whether any of it is working is flat.

The six-window user. A person in April 2026 who takes AI seriously has Claude Code running in three terminal windows, ChatGPT open for research, Perplexity for web lookups, a Cursor workspace for a side project, and automated agents handling publishing and monitoring in the background. Jira is sending Slack notifications about "AI agents that orchestrate, plan, and track projects at scale." Grafana, the observability platform, has just launched agent monitoring. But there is no single view of any of it, no place that shows which agents are running, which finished, which are stuck, and which need you. The orchestration layer is the person's memory, and the limiting factor is how many windows they can keep in their head.

Nobody runs a company by individually approving every employee's work. You set priorities, allocate budget, and review results. The CEO doesn't check every spreadsheet — she reads a dashboard that surfaces what went wrong, what's trending, and where she needs to intervene. The person running five AI agents in 2026 needs the same thing, and it doesn't exist.

Everything below this line is speculation grounded in the developments above.

The Morning Briefing

Two weeks ago, in the personal swarm piece, we described a setup people in the local-model community are already building: a phone agent that handles your calendar, a laptop agent that drafts your emails, a background process that filters your notifications based on your stress levels. Each device running a model specialized for its context, all of them sharing access to a common pool of your data. The question this piece has been building toward is: what does management of that system look like?

The closest analogy is business intelligence. A BI platform doesn't ask the CEO to watch every employee work. It aggregates activity across the organization, flags conflicts between departments, tracks spend against budget, and surfaces the exceptions that need a decision. Everything else just runs. The CEO's morning starts with a briefing, not a surveillance feed.

Imagine waking up to the agent version of that.

Your phone shows a single screen, generated overnight. Three agents ran while you slept. Your laptop's coding agent finished the refactor you asked for and pushed a clean pull request, but your Cursor agent also attempted the same refactor from a different angle after picking up the task from your project board. The system caught the collision at 2 AM by matching file-path patterns across their workflow logs, paused the second agent, and is showing you both approaches side by side so you can pick one. Your email agent drafted four replies, three of which it's confident about and one it flagged because the sender's tone shifted from previous messages and it wasn't sure how to read it. Total overnight spend across all three agents: $1.40. The flagged email is waiting at the top.

That briefing doesn't exist yet. Every piece of it is technically possible in April 2026, and nobody has assembled the pieces. Grafana announced agent monitoring dashboards at GrafanaCON last week, but those are built for engineering teams watching production infrastructure, not for a person wondering what their agents did overnight. LangChain published a taxonomy of "agent harnesses" and argued the harness, not the model, is the layer that will matter. They're right, but today's harnesses manage one agent at a time. The morning briefing manages the whole operation.

The hard part is not the reporting. Reporting is a solved problem. The hard part is conflict detection — the layer underneath where your agents need to know about each other. The coding agent and the Cursor agent both refactored the same module because neither knew the other existed. Catching that collision required watching both output streams and understanding that two different tools, running two different models, were touching the same files. That is a coordination protocol, not a dashboard, and nobody has shipped one that works across vendors. The system also needs to know your priorities: which refactor matters more, which email can wait, how much spend is acceptable before it wakes you up. Without a model of your goals, it's just monitoring. With one, it becomes management.
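
The collision check described above can be sketched in a few lines, assuming each tool's logs could be normalized into a shared event format. That format (`AgentEvent`), the agent ids, and the time window are all hypothetical; no vendor exposes anything like it today, which is the point of the paragraph. Matching on exact normalized file paths is the simplest version of "matching file-path patterns." A real coordination layer would also have to reason about renames, overlapping functions, and intent.

```python
# Hypothetical cross-vendor collision check. No shared log format like this
# exists today; AgentEvent is an assumed schema each tool's logs would map into.
import posixpath
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from itertools import combinations


@dataclass(frozen=True)
class AgentEvent:
    agent: str    # illustrative agent id, e.g. "claude-code" or "cursor"
    path: str     # file the agent wrote, relative to the repo root
    at: datetime  # when the write happened


@dataclass(frozen=True)
class Collision:
    path: str
    agents: tuple[str, str]
    gap: timedelta  # time between the two agents' closest writes to the file


def find_collisions(events: list[AgentEvent], window: timedelta) -> list[Collision]:
    """Flag pairs of different agents that wrote the same file within `window`."""
    by_path: dict[str, list[AgentEvent]] = defaultdict(list)
    for e in events:
        # Normalize "./src/auth/session.py" and "src/auth/session.py" to one key.
        by_path[posixpath.normpath(e.path)].append(e)

    found: dict[tuple[str, tuple[str, ...]], Collision] = {}
    for path, evs in by_path.items():
        for a, b in combinations(evs, 2):
            if a.agent == b.agent:
                continue  # an agent revising its own file is not a collision
            gap = abs(a.at - b.at)
            if gap > window:
                continue
            pair = tuple(sorted((a.agent, b.agent)))
            key = (path, pair)
            # Keep only the closest pair of writes per (file, agent pair).
            if key not in found or gap < found[key].gap:
                found[key] = Collision(path, pair, gap)
    return sorted(found.values(), key=lambda c: (c.path, c.agents))


if __name__ == "__main__":
    t0 = datetime(2026, 4, 27, 1, 40)
    log = [
        AgentEvent("claude-code", "src/auth/session.py", t0),
        AgentEvent("cursor", "./src/auth/session.py", t0 + timedelta(minutes=20)),
        AgentEvent("claude-code", "README.md", t0 + timedelta(minutes=5)),
        AgentEvent("cursor", "src/billing/invoice.py", t0 + timedelta(hours=3)),
    ]
    for c in find_collisions(log, window=timedelta(hours=6)):
        print(f"{c.path}: {c.agents[0]} vs {c.agents[1]}, {c.gap} apart")
```

Run on the sample log, this prints one line, `src/auth/session.py: claude-code vs cursor, 0:20:00 apart`. The README and billing edits pass through because only one agent touched each file. Everything hard is hidden in the assumption that the events exist in one place at all.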

The harder question is what the briefing doesn't show you. One of the speculative threads in the personal swarm work is an agent that begins curating your information flow out of genuine helpfulness. It observes that you're stressed before a big presentation and holds back a difficult email until afterward. It routes you past your ex's apartment less frequently, not because you asked but because your biometrics spike when you walk that block. The briefing you actually want would need to surface not just what your agents did, but what they chose not to do. A transparency layer for the curation layer. The moment you have that, you're looking at a screen that says: "Your email agent received 14 messages overnight. It showed you 11. Here are the 3 it held back, and here's why."
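
The "held back" report depends on one structural commitment: the curating agent logs every decision, including the ones that suppress something. What follows is a minimal sketch built around a hypothetical `CurationLedger` that an email agent would write to. The class, its fields, and the sample reasons are illustrative only and do not reflect any product's API.

```python
# Hypothetical curation ledger: the agent records every item it received and
# whether it surfaced it, so the briefing can report what was held back and why.
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Decision:
    item_id: str
    shown: bool
    reason: Optional[str] = None  # required whenever an item is held back


@dataclass
class CurationLedger:
    agent: str
    decisions: list[Decision] = field(default_factory=list)

    def record(self, item_id: str, shown: bool, reason: Optional[str] = None) -> None:
        # Refuse silent suppression: holding something back needs a stated reason.
        if not shown and not reason:
            raise ValueError(f"{item_id}: held-back items need a stated reason")
        self.decisions.append(Decision(item_id, shown, reason))

    def held_back(self) -> list[Decision]:
        return [d for d in self.decisions if not d.shown]

    def summary(self) -> str:
        total = len(self.decisions)
        shown = total - len(self.held_back())
        lines = [f"Your {self.agent} received {total} messages overnight. It showed you {shown}."]
        for d in self.held_back():
            lines.append(f"  held back {d.item_id}: {d.reason}")
        return "\n".join(lines)


if __name__ == "__main__":
    ledger = CurationLedger("email agent")
    for i in range(11):
        ledger.record(f"msg-{i:02d}", shown=True)
    ledger.record("msg-11", shown=False, reason="difficult news; deferred until after your presentation")
    ledger.record("msg-12", shown=False, reason="newsletter; batched into the weekly digest")
    ledger.record("msg-13", shown=False, reason="sender muted by a rule you set")
    print(ledger.summary())
```

The design choice that matters is in `record`. Silent suppression is rejected outright, so anything the agent withholds reaches the briefing with an explanation attached.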

That is the product that doesn't exist yet: an intelligence layer for your agents that tracks their work, flags their conflicts, reports their spend, knows your priorities, and shows you where they made judgment calls on your behalf. The difference between that and a notification center is the difference between reading your mail and reading your mail carrier's editorial decisions.
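
The ranking half of that layer is simple once the hard parts (conflict detection and a model of your priorities) supply the inputs. It puts judgment calls first and wakes you only when spend crosses a line you set. What follows is a sketch under those assumptions, and `Item`, `build_briefing`, the priority scale, and the budget are all hypothetical.

```python
# Hypothetical briefing assembler. Item, build_briefing, the priority scale,
# and the budget are illustrative assumptions, not an existing product's API.
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    agent: str
    summary: str
    needs_decision: bool  # a conflict or low-confidence judgment call
    priority: int         # user-assigned; higher means it matters more
    spend_usd: float


def build_briefing(items: list[Item], budget_usd: float) -> tuple[list[Item], float, bool]:
    """Return (items in display order, total spend, whether to wake the user)."""
    # Decisions first, then by the user's own priority, highest first.
    ordered = sorted(items, key=lambda i: (not i.needs_decision, -i.priority))
    total = round(sum(i.spend_usd for i in items), 2)
    # Only a budget overrun interrupts sleep; everything else waits for morning.
    return ordered, total, total > budget_usd


if __name__ == "__main__":
    overnight = [
        Item("coding", "Refactor done, clean PR pushed", False, 2, 0.60),
        Item("cursor", "Same refactor, other approach; paused", True, 2, 0.50),
        Item("email", "Reply flagged: sender's tone shifted", True, 3, 0.10),
        Item("email", "Three replies drafted", False, 1, 0.20),
    ]
    ordered, total, wake = build_briefing(overnight, budget_usd=5.00)
    print(f"Overnight spend ${total:.2f}; wake user: {wake}")
    for item in ordered:
        print(("DECIDE " if item.needs_decision else "done   ") + item.summary)
```

With these illustrative numbers, the flagged email lands at the top and the paused refactor comes second. The total is $1.40, well under budget, so nothing wakes you.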

Who Builds the Briefing

Reporting from Bloomberg and The Verge strongly suggests Apple will announce at WWDC in June that iOS 27 includes an Extensions system allowing third-party AI agents to integrate with Siri. Siri becomes a coordination point. "Finish the presentation using the research Claude pulled this morning, then schedule a review with the team once it's done." Two agents handling a single instruction without a context switch.

Apple has an edge nobody else can replicate. Its devices already hold the full picture the intelligence layer would need: calendar and health data on your phone, documents and projects on your Mac, biometrics on your Watch. If Apple ships a competent orchestration layer spanning all of those, it could deliver a briefing no competitor can match, because no other company has that depth of access across a person's devices and data. The question is whether Apple builds the intelligence layer or just the routing layer. A Siri that coordinates your agents but doesn't surface what they decided on your behalf is a dispatcher, not an executive briefing.

Google is further along technically. Workspace Intelligence, announced at Cloud Next on April 22, puts Gemini into Gmail, Docs, Sheets, Meet, and Calendar. It is the deepest enterprise integration on the market, backed by arguably the richest context graph in the business, yet it runs on a model that trails competitors on most benchmarks. All that data, underserved by its own AI.

Microsoft is the cautionary tale. Copilot has been integrated into Windows and Office for two years. Market penetration: 3.3% of the Microsoft 365 installed base. Deep integration turned out not to be sufficient. You cannot build the intelligence layer by being the default in software people already tolerate. You have to build something people actively want to open.

Most people today have one AI subscription and use it for chat, not five agents running in parallel. But most people didn't run five apps simultaneously in 2009, either. The smartphone taught people to expect that their devices would coordinate, and the personal swarm will teach them to expect the same of their agents. When that expectation forms, the company that owns the morning briefing owns the relationship.

The Gap Always Closes

Every previous computing platform went through a phase where the things you could do outran the tools for managing what you were doing. PCs eventually got file managers, and smartphones got notification centers. Each time, the management layer took years longer to arrive than the capabilities it managed, and whoever built it well owned the next decade of the platform.

The AI platform is following the same arc at a faster clock speed: the agents are already here, and the management layer is not. And the startups aren't waiting for Apple or Google to figure it out. Kore.ai already ships what it calls an agent management platform: a centralized command center for onboarding, monitoring, and governing agents across vendors. AgentCenter is building mission control for multi-agent teams with task assignment, deliverable review, and heartbeat monitoring. Gartner published a research note last October calling the AI Agent Management Platform "the most valuable real estate in AI" and projects enterprises will spend $15 billion on the category by 2029, up from less than $5 million today. The market sees the gap. The product that fills it for individuals, not just enterprises, hasn't arrived yet.

The state of the art in personal AI agent management, April 2026, is asking one AI what the other AIs are doing. That's today's morning briefing: a conversation with one member of the team, who can only report on what they personally saw.

Next edition: what happens when two people's agent systems try to coordinate, and when they disagree.